Introduction

Artificial intelligence is advancing at an unprecedented pace. By 2026, the question developers ask has evolved beyond a simple “Which AI is best?” It’s now:

Which AI model is most suitable for my precise workflow and task-specific requirements?

Among the most discussed open-source models currently available, two DeepSeek offerings dominate conversations:

- DeepSeek-R1

- DeepSeek-Coder

Both architectures are potent, both are open-weight, and both allow fine-grained customization. However, they are purpose-built for distinctly divergent tasks.

This guide compares the two models in depth across:

- Architectural distinctions

- Benchmark results and task performance

- Programming capabilities

- Logical and algorithmic reasoning

- Security, hallucination potential, and limitations

- Licensing, deployment, and operational cost

- Use-case scenarios and workflow integration

- Developer decision frameworks

By the end, you’ll have a clear understanding of whether DeepSeek-R1 or DeepSeek-Coder aligns with your project requirements in 2026.

Why the DeepSeek-R1 vs DeepSeek-Coder Comparison Matters

Open-source LLMs have transitioned from experimental tools to production-ready systems. These models are now integral to multiple operational layers, including:

- IDE plugins and coding copilots

- Automated enterprise workflows

- SaaS-based solutions

- Research-oriented reasoning systems

- DevOps pipelines and monitoring solutions

Organizations increasingly prefer open models over fully proprietary APIs because they provide:

- Localized deployment and on-prem solutions

- Custom fine-tuning to domain-specific corpora

- Transparent behavior and reproducible outputs

- Infrastructure-level configurability

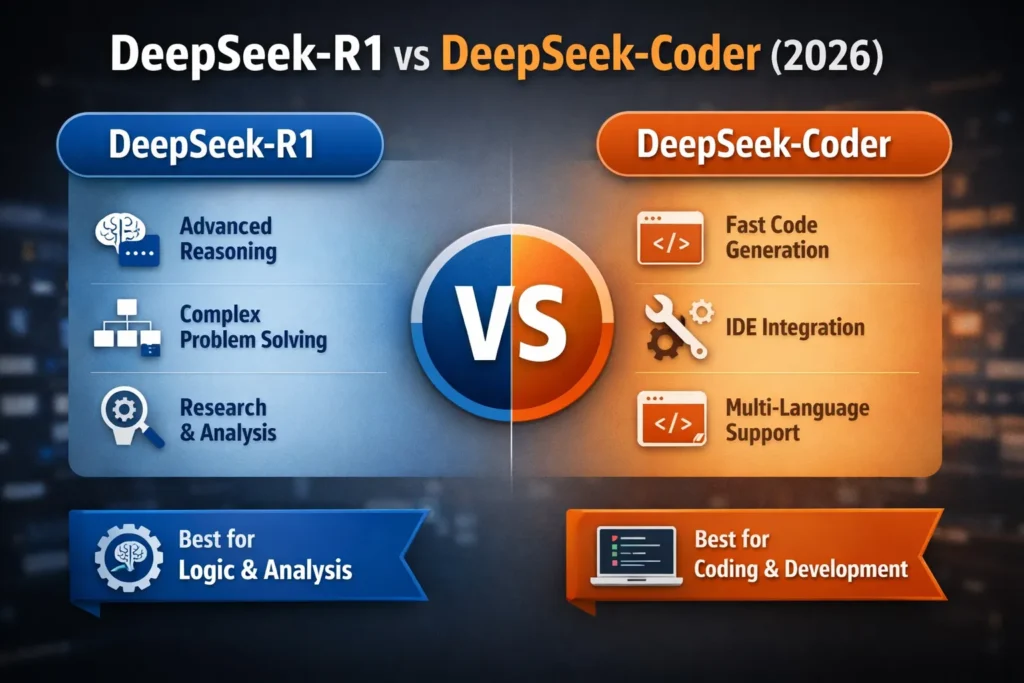

The key distinction between DeepSeek-R1 and DeepSeek-Coder lies in their specialization:

| Model | Core Orientation |
| --- | --- |
| DeepSeek-R1 | Reasoning-centric, analytical engine |
| DeepSeek-Coder | Code-centric, productivity-focused engine |

Selecting the wrong model can lead to:

- Suboptimal inference speed

- Reduced task accuracy

- Increased hallucination probability

- Developer inefficiencies and frustration

Let’s delve into each model’s specific traits.

What Is DeepSeek-R1?

DeepSeek-R1 is a reasoning-optimized large language model (LLM) designed for structured, multi-step analytical output. It still generates text token by token like any other LLM, but it is trained to produce an explicit chain of thought and to work through multi-step logical deductions before committing to an answer.

Its core competencies include:

- Advanced logical problem-solving

- Stepwise algorithmic reasoning

- Complex system debugging

- Multi-stage analytical workflows

Put simply, DeepSeek-R1 can be considered a reasoning-first LLM that prioritizes logical rigor and structural coherence over raw generation speed. It’s often described as a “thinking-first” model.

Core Capabilities of DeepSeek-R1

DeepSeek-R1 excels in domains that require structured reasoning:

- Analytical mathematics and proofs

- Algorithm dissection and explanation

- Hierarchical problem decomposition

- Deep debugging of complex systems

- Multi-step decision-making pipelines

- Structured analytical support for research workflows

It is not a symbolic reasoner in the classical sense; it is a probabilistic language model trained to approximate step-by-step reasoning, which makes it well suited to knowledge-intensive applications.

How DeepSeek-R1 Works

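Typical LLMs generate text by estimating the most probable next token given a context window. DeepSeek-R1 works the same way at inference time, but its training adds large-scale reinforcement learning that rewards correct answers and well-formed reasoning, which teaches the model to: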

- Decompose complex problems into logically sequenced subtasks

- Evaluate consistency across sequential outputs

- Optimize solution paths with structured reasoning

Applications include:

- Academic and scientific research

- Financial quantitative modeling

- Engineering system validation

- Architecture and algorithm design

- Technical decision support

Trade-offs: Due to its reasoning depth, DeepSeek-R1 may produce longer outputs and have slightly reduced inference speed, but the quality of structured reasoning often outweighs latency concerns for researchers and analysts.
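Because R1's outputs are long, applications often separate the reasoning trace from the final answer before displaying or storing it. The following is a minimal sketch, assuming the model wraps its reasoning in `<think>...</think>` tags, as the open-weight R1 checkpoints do by default. `split_reasoning` is a hypothetical helper name, and a hosted API may return the reasoning in a separate field instead.

```python
import re

# Assumption: the reasoning trace is delimited by <think>...</think>.
THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(raw: str) -> tuple[str, str]:
    """Return (reasoning, answer) extracted from a raw completion."""
    match = THINK_BLOCK.search(raw)
    if match is None:
        # No trace found: treat the whole completion as the answer.
        return "", raw.strip()
    reasoning = match.group(1).strip()
    answer = (raw[:match.start()] + raw[match.end():]).strip()
    return reasoning, answer


raw_output = "<think>2 + 2 is 4, then times 3 is 12.</think>\nThe result is 12."
reasoning, answer = split_reasoning(raw_output)
print(answer)
```

Keeping the trace separate lets a UI show only the answer while logging the reasoning for later review.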

What Is DeepSeek-Coder?

DeepSeek-Coder is the programming-specialist LLM, engineered for developer productivity. Its training corpus emphasizes:

- Public GitHub repositories

- Open-source software documentation

- Technical tutorials and code examples

- Framework-specific code patterns

DeepSeek-Coder functions as an AI coding assistant, generating syntax-correct and context-aware code efficiently.

Core Capabilities of DeepSeek-Coder

Optimized for software engineering workflows, DeepSeek-Coder supports:

- Multi-language code synthesis

- IDE autocompletion

- Refactoring recommendations

- Snippet scaffolding

- Unit test automation

Supported languages include Python, JavaScript, TypeScript, Java, C++, and Go, among others.

It integrates seamlessly with environments such as:

- Visual Studio Code

- JetBrains IDEs

- Enterprise-specific IDE solutions

How DeepSeek-Coder Works

Instead of reasoning depth, DeepSeek-Coder emphasizes:

- Syntax and semantic pattern recognition

- Rapid token-efficient generation

- Context-aware framework compliance

- Real-time inference for IDE integration

It’s engineered for practical code generation, focusing on developer acceleration and productivity optimization.
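IDE completion of this kind is commonly driven by fill-in-the-middle prompting, where the model sees the code before and after the cursor and generates what belongs in between. The sketch below illustrates the idea only. The sentinel strings are placeholders (assumptions), not the model's actual special tokens, which should be taken from the tokenizer configuration of the checkpoint in use. `FimTokens` and `build_fim_prompt` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FimTokens:
    # Placeholder sentinels; replace with the real special tokens.
    begin: str = "<FIM_BEGIN>"
    hole: str = "<FIM_HOLE>"
    end: str = "<FIM_END>"


def build_fim_prompt(source: str, cursor: int, tokens: FimTokens = FimTokens()) -> str:
    """Wrap the text around the cursor so the model fills the gap."""
    if not 0 <= cursor <= len(source):
        raise ValueError("cursor is outside the source text")
    before, after = source[:cursor], source[cursor:]
    return f"{tokens.begin}{before}{tokens.hole}{after}{tokens.end}"


source = "def add(a, b):\n    return \n"
cursor = source.index("return ") + len("return ")
print(build_fim_prompt(source, cursor))
```

An editor plugin would send this prompt on each completion request and insert the model's output at the cursor position.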

DeepSeek-R1 vs DeepSeek-Coder: Side-by-Side Comparison

| Feature | DeepSeek-R1 | DeepSeek-Coder |
| --- | --- | --- |
| Primary Focus | Logical reasoning, structured cognition | Code synthesis, programming productivity |
| Best For | Algorithm analysis, multi-step debugging, and research tasks | Fast code completion, snippet generation, and IDE integration |
| Training Emphasis | Reinforcement learning optimized for reasoning | Code-heavy corpus with pattern extraction |
| Output Style | Stepwise explanatory chains | Direct, executable code |
| Speed | Moderate | High |
| Context Window | Long reasoning chains | ~16K+ tokens coding context |
| Strength | Deep problem decomposition | Rapid snippet and boilerplate generation |
| Weakness | Slower inference, verbose outputs | Limited multi-step reasoning |
| Ideal Users | Researchers, analysts, logic engineers | Software developers, engineers, and IDE power users |
| Licensing | Open-source | Open-source |

Benchmark Performance Analysis (Coding vs Reasoning)

Coding Benchmarks

DeepSeek-Coder demonstrates:

- High success rates in multi-language code completion tasks

- Efficient scaffolding of complex frameworks

- Strong performance in competitive programming prompts

- Pattern recognition for API and library use

DeepSeek-R1 performs well in explanatory coding:

- Algorithm walkthroughs

- Recursive and stack-based logic elucidation

- Multi-step problem decomposition

From a modeling standpoint, DeepSeek-Coder is optimized for fast, accurate token prediction within structured code sequences, whereas DeepSeek-R1 is optimized for long, reasoning-rich token chains.
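Success rates on coding benchmarks are usually reported as pass@k: the probability that at least one of k sampled completions passes the task's unit tests. This guide does not state which metric underlies its claims, so the sketch below only shows how the commonly used unbiased estimator is computed. The sample counts are illustrative, not measured results for either model.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k from n samples of which c passed the tests.

    Computes 1 - C(n - c, k) / C(n, k): one minus the chance that a
    random draw of k samples contains no passing completion.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    return 1.0 - comb(n - c, k) / comb(n, k)


# Illustrative numbers only: 10 samples per task, 3 of them correct.
print(round(pass_at_k(n=10, c=3, k=1), 3))
print(round(pass_at_k(n=10, c=3, k=5), 3))
```

Comparing two models on the same tasks with the same n and k is what makes a benchmark claim reproducible.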

Logic & Reasoning Benchmarks

For tasks demanding formal reasoning:

- Multi-step deduction

- Mathematical derivation

- Logic proof generation

- Algorithmic decision chains

DeepSeek-R1 is the stronger of the two on these tasks, making it the better choice for research labs, fintech modeling, and data-intensive reasoning.

Practical Example: Real Developer Scenario

Scenario: Debugging a Complex Recursive Function

- DeepSeek-Coder: Generates corrected syntax quickly, outputs executable code, and saves time in IDEs.

- DeepSeek-R1: Provides a detailed analysis of recursion depth, stack behavior, and logic flaws, suggesting algorithmic optimizations and design improvements.

This illustrates the distinction between speed-oriented generation and reasoning-centric chain-of-thought modeling.
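To make the scenario concrete, here is a small illustrative case (not taken from either model's output). The recursive function is syntactically fine and works on shallow input, but analysis of recursion depth and stack behavior shows it fails on deeply nested data. The iterative rewrite with an explicit stack is the kind of design change such an analysis would suggest.

```python
def flatten_recursive(items):
    """Flatten nested lists; uses one interpreter stack frame per nesting level."""
    result = []
    for item in items:
        if isinstance(item, list):
            result.extend(flatten_recursive(item))
        else:
            result.append(item)
    return result


def flatten_iterative(items):
    """Same result, but nesting depth is tracked on an explicit stack of iterators."""
    result = []
    stack = [iter(items)]
    while stack:
        try:
            item = next(stack[-1])
        except StopIteration:
            stack.pop()  # finished this nesting level
            continue
        if isinstance(item, list):
            stack.append(iter(item))  # descend without a function call
        else:
            result.append(item)
    return result


# Build a list nested 5,000 levels deep, beyond the default recursion limit.
deep = [42]
for _ in range(5000):
    deep = [deep]

try:
    flatten_recursive(deep)
except RecursionError:
    print("recursive version exceeded the recursion limit")

print(flatten_iterative(deep))
```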

Real-World Use Cases

When to Use DeepSeek-R1

Opt for DeepSeek-R1 when tasks involve:

- Advanced mathematical derivations

- Risk simulation and modeling

- Complex debugging scenarios

- System architecture analysis

- Academic or research-intensive problem solving

Example: A fintech startup constructing quantitative risk models may utilize DeepSeek-R1 to validate calculation logic, simulate decision pathways, and ensure reasoning consistency.

When to Use DeepSeek-Coder

Opt for DeepSeek-Coder when tasks involve:

- Rapid generation of code snippets

- API scaffold generation

- Framework-specific boilerplate

- Frontend component creation

- Automated unit test generation

Example: A SaaS developer building a dashboard could employ DeepSeek-Coder to generate React components, construct CRUD APIs, automate repetitive tasks, and refactor legacy code efficiently.

Security, Hallucinations & Limitations

Open-source LLMs are not inherently risk-free.

DeepSeek-R1 Limitations

- Potentially over-explanatory outputs

- Moderate response latency

- Possible insecure suggestions if prompted inadequately

- Requires validation for production-critical systems

DeepSeek-Coder Limitations

- Occasionally hallucinates APIs or framework functions

- May generate logically flawed but syntactically correct code

- Suboptimal for deep multi-step reasoning tasks
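Hallucinated APIs are among the easier failures to catch automatically, because a referenced name either resolves or it does not. The following sketch checks a dotted path against the installed Python environment. `api_exists` is a hypothetical helper, and importing a module executes its top-level code, so this check should itself run in a sandbox.

```python
import importlib


def api_exists(dotted_path: str) -> bool:
    """Return True if a path such as 'json.dumps' resolves to a real object."""
    parts = dotted_path.split(".")
    try:
        obj = importlib.import_module(parts[0])
    except (ImportError, ValueError):
        return False
    resolved = parts[0]
    for name in parts[1:]:
        resolved = f"{resolved}.{name}"
        if hasattr(obj, name):
            obj = getattr(obj, name)
            continue
        # The name may be a submodule that has not been imported yet.
        try:
            obj = importlib.import_module(resolved)
        except (ImportError, ValueError):
            return False
    return True


print(api_exists("json.dumps"))
print(api_exists("json.dump_pretty"))
```

A check like this only confirms that a name exists; it says nothing about whether the generated call uses the right arguments.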

Security Best Practices

Both models benefit from:

- Sandboxed deployment

- Human-in-the-loop validation

- Static and dynamic code scanning

- Access control and auditing

Never auto-deploy AI-generated code without thorough review.
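As one example of static scanning, generated Python can be parsed, without being executed, and checked for obviously risky constructs before a human reviews it. This is a minimal sketch using the standard `ast` module. The block lists and the `review_flags` name are assumptions for illustration, and the check is easy to evade (for example by aliasing `eval`), so it complements a real scanner and human review but does not replace them.

```python
import ast

# Illustrative block lists; tune these to your own policy.
BLOCKED_CALLS = {"eval", "exec", "compile", "__import__"}
BLOCKED_MODULES = {"os", "subprocess", "socket"}


def review_flags(source: str) -> list[str]:
    """Return human-readable warnings for a piece of generated Python code."""
    try:
        tree = ast.parse(source)  # parses only; nothing is executed
    except SyntaxError as exc:
        return [f"line {exc.lineno}: syntax error: {exc.msg}"]

    found = []  # (line number, message) pairs
    for node in ast.walk(tree):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in BLOCKED_CALLS:
                found.append((node.lineno, f"call to {node.func.id}()"))
        elif isinstance(node, ast.Import):
            for alias in node.names:
                if alias.name.split(".")[0] in BLOCKED_MODULES:
                    found.append((node.lineno, f"import of {alias.name}"))
        elif isinstance(node, ast.ImportFrom):
            if node.module and node.module.split(".")[0] in BLOCKED_MODULES:
                found.append((node.lineno, f"import from {node.module}"))
    return [f"line {line}: {message}" for line, message in sorted(found)]


generated = "import subprocess\nresult = eval(user_input)\n"
for flag in review_flags(generated):
    print(flag)
```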

Cost, Licensing & Infrastructure

Both DeepSeek-R1 and DeepSeek-Coder are:

- Open-source

- Customizable for domain-specific fine-tuning

- Deployable locally or in cloud environments

Compared to proprietary APIs, open-source LLMs can offer lower long-term operational costs, full control over deployment, and more transparent behavior, provided the infrastructure costs below are accounted for.

Factors influencing real-world cost include:

- GPU infrastructure and memory requirements

- Cloud hosting or on-prem deployments

- Scaling and high-throughput workloads

- Fine-tuning for domain-specific tasks
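GPU memory is usually the first constraint to check. A common rule of thumb is that the weights alone need roughly the parameter count multiplied by the bytes per parameter. The sketch below applies that rule. It deliberately ignores the KV cache, activations, and framework overhead, so real requirements are higher, and the 7-billion-parameter figure is an illustrative size rather than a statement about either model.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights only, in decimal gigabytes."""
    if num_params <= 0 or bits_per_param <= 0:
        raise ValueError("num_params and bits_per_param must be positive")
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / 1e9


# Illustrative: a 7-billion-parameter model at three precisions.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: ~{weight_memory_gb(7e9, bits):.1f} GB")
```

Halving the precision halves the weight footprint, which is why quantized and distilled variants matter for on-prem deployments.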

Pros & Cons

DeepSeek-R1

Pros:

- Superior logical reasoning and analytical ability

- Detailed debugging insights

- Transparent and interpretable outputs

- Suitable for research-focused applications

Cons:

- Slower inference speed

- Verbose outputs

- Not optimized for IDE efficiency

DeepSeek-Coder

Pros:

- Rapid code generation

- Multi-language and framework support

- Strong integration with IDEs

- Maximizes developer productivity

Cons:

- Limited reasoning depth

- Potential API hallucinations

- Requires careful output validation

Decision Matrix: Which One Should You Choose?

Key evaluation questions:

Do you need deep reasoning or rapid code generation?

Are you debugging logic-intensive systems?

Is speed more critical than explanation depth?

Will this model run as an IDE-integrated assistant?

Quick Decision Guide:

- Choose DeepSeek-R1 → If your primary need is structured reasoning and multi-step logic analysis.

- Choose DeepSeek-Coder → If your focus is on developer productivity and fast coding workflows.

Many organizations employ both in tandem to maximize output efficiency.
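When both models are deployed together, the evaluation questions above can be encoded as a simple routing rule. This is a sketch under stated assumptions: the `Task` fields and the precedence (latency and IDE use win over reasoning depth) are illustrative choices, not a recommendation from DeepSeek.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    needs_multistep_reasoning: bool
    latency_sensitive: bool
    runs_in_ide: bool


def choose_model(task: Task) -> str:
    """Pick a model name for a task using the quick decision guide."""
    # Interactive or latency-bound work goes to the faster code model.
    if task.runs_in_ide or task.latency_sensitive:
        return "DeepSeek-Coder"
    # Offline, logic-heavy work can afford the longer reasoning trace.
    if task.needs_multistep_reasoning:
        return "DeepSeek-R1"
    return "DeepSeek-Coder"


print(choose_model(Task(needs_multistep_reasoning=True, latency_sensitive=False, runs_in_ide=False)))
print(choose_model(Task(needs_multistep_reasoning=False, latency_sensitive=True, runs_in_ide=True)))
```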

Future Roadmap & Trends

Directions the DeepSeek model family appears to be moving in include:

- Distilled lightweight variants for smaller-scale deployments

- Larger context windows for extended reasoning or coding tasks

- Enhanced safety alignment and hallucination mitigation

- Hybrid models combining reasoning depth with coding efficiency

Future engines may integrate R1’s reasoning strength with Coder’s speed, potentially eliminating the gap between reasoning and code-specialized LLMs.

FAQs

A: It depends on task requirements. R1 excels in reasoning-heavy workflows, while Coder is optimized for programming productivity.

A: Yes, for many scenarios. Enterprise-grade security validation is still essential.

A: DeepSeek-R1 is generally more effective for logic-intensive debugging.

A: Both are open-weight and fully customizable.

A: Absolutely. Organizations can fine-tune both for domain-specific tasks or coding/analytical workflows.

Conclusion

Choosing between DeepSeek-R1 and DeepSeek-Coder ultimately comes down to your workflow priorities and task-specific needs. Both models are open-source, powerful, and customizable, but they are purpose-built for distinct problem domains:

- DeepSeek-R1 is a reasoning-first model, excelling in multi-step logic, algorithmic reasoning, and structured analytical tasks. Its strength lies in deep problem-solving, stepwise explanations, and reliability in reasoning-intensive workflows, making it ideal for researchers, analysts, and fintech or engineering applications where accuracy and logical consistency are paramount.

- DeepSeek-Coder is a coding-first model, designed to accelerate developer productivity through rapid, syntax-correct code generation, IDE integration, and multi-language support. Its advantages include speed, practical coding efficiency, and framework familiarity, making it well suited to developers building SaaS platforms, web apps, APIs, or automating repetitive code tasks.