Introduction

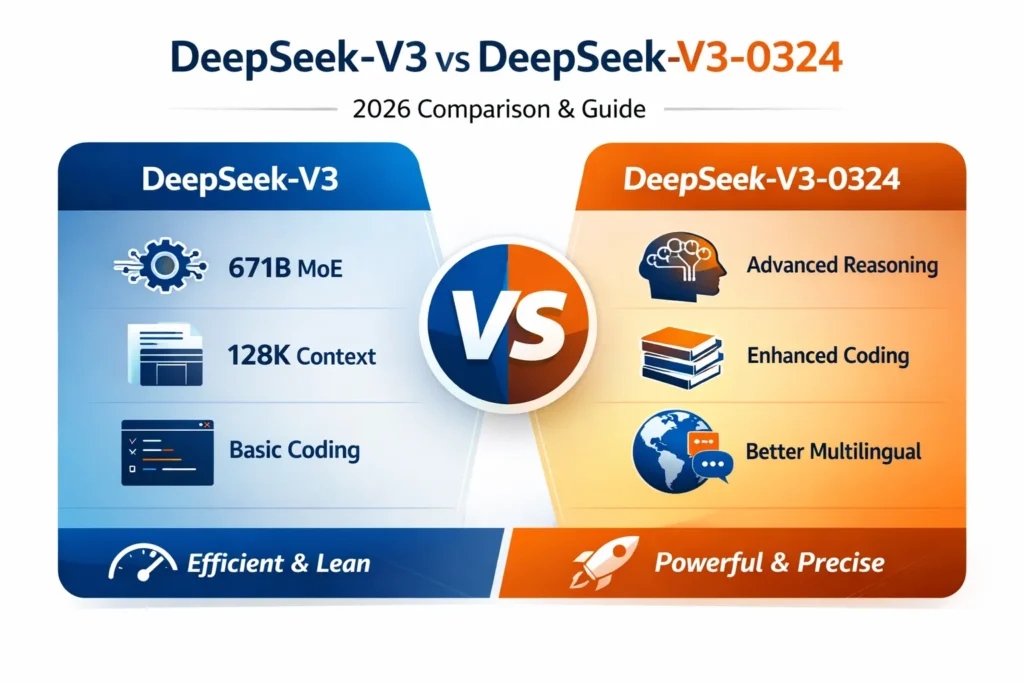

In the rapidly evolving landscape of large language models (LLMs), DeepSeek has emerged as a prominent choice among researchers, developers, and enterprise AI teams. The DeepSeek‑V3 iteration is particularly noteworthy for its long-context comprehension, robust code generation, and multilingual proficiency.

However, the AI ecosystem evolves at a lightning pace. In March 2025, the DeepSeek‑V3‑0324 update was released, introducing a host of optimizations that refined reasoning, coding, and multi-language processing. Understanding the distinctions between these two models is pivotal for selecting the right tool for multi-step reasoning, coding automation, or complex document processing workflows.

This comprehensive guide will delve into:

- The technical differences between V3 and V3‑0324

- Benchmarks for coding, reasoning, and performance

- Function calling enhancements and multilingual improvements

- Practical real-world use cases and deployment strategies

- Pros, cons, and model selection recommendations

By the conclusion of this article, you will have a crystal-clear understanding of which DeepSeek variant aligns with your specific requirements, as well as how DeepSeek continues to influence the open-source AI ecosystem.

What Is DeepSeek-V3?

DeepSeek‑V3 is an open-source, transformer-based LLM engineered for high performance. It has been widely adopted for tasks requiring long-context comprehension, automated code generation, and multilingual processing. Its open-source nature provides a significant advantage over proprietary alternatives, allowing both academic researchers and commercial developers to deploy it under permissive terms.

Core Capabilities of DeepSeek‑V3

- Long-context understanding: Handles extended sequences of text or code with minimal degradation in contextual relevance.

- Code execution and generation: Capable of producing functional code snippets in multiple programming languages.

- Multilingual support: Strong performance in English, Chinese, and other languages, useful for cross-linguistic communication.

- Open-source flexibility: The code is MIT-licensed, and the original V3 weights are released under the DeepSeek Model License, which permits both commercial and academic use.

Key Features

Sparse Mixture-of-Experts (MoE) Architecture

DeepSeek‑V3 employs a Sparse Mixture-of-Experts (MoE) design with 671 billion total parameters, of which only about 37 billion are activated for any given token. Because each token runs through a small subset of experts, the model keeps per-token compute low while retaining high overall capacity, which suits resource-intensive tasks.
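As a rough illustration of sparse activation, the sketch below routes one token to the top 2 of 8 toy experts using a generic softmax top-k router. This is not DeepSeek's actual routing code: the expert functions, the router scores, and the sizes are all invented for illustration. The point is only that experts outside the selected subset are never evaluated.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_scores, top_k):
    """Pick the top_k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    mass = sum(probs[i] for i in chosen)
    return {i: probs[i] / mass for i in chosen}


def moe_forward(x, experts, router_scores, top_k=2):
    """Weighted sum of the selected experts' outputs; other experts never run."""
    weights = route_token(router_scores, top_k)
    return sum(w * experts[i](x) for i, w in weights.items())


# Toy setup (illustrative only): 8 scalar "experts", 2 active per token.
experts = [lambda x, scale=s: scale * x for s in range(1, 9)]
random.seed(0)
router_scores = [random.gauss(0.0, 1.0) for _ in experts]

active = route_token(router_scores, top_k=2)
output = moe_forward(3.0, experts, router_scores, top_k=2)
print(f"active experts: {sorted(active)}, output: {output:.3f}")
```

In this toy, 2 of 8 experts (25%) run per token. Using the published figures for DeepSeek‑V3, roughly 37 billion of 671 billion parameters are active per token, or about 5.5%.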

Ultra-Long Context Window

With a 128K token context window, DeepSeek‑V3 can manage extensive documents, codebases, or research datasets, ensuring semantic continuity across extremely long inputs.
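A practical consequence of a fixed context window is that inputs need a budget check before they are sent. The sketch below is one way to do that, assuming a crude 4-characters-per-token estimate (an assumption, not a property of the DeepSeek tokenizer) and a hypothetical reserve for the model's reply. A real pipeline would count tokens with the model's own tokenizer.

```python
CONTEXT_WINDOW_TOKENS = 128_000  # "128K" from the text; actual limits may vary by deployment
CHARS_PER_TOKEN = 4              # assumption: rough average for English prose


def estimate_tokens(text):
    """Rough token estimate; a real tokenizer will give different counts."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def fits_in_context(text, reserved_for_output=4_000):
    """True if the input plus a reserved reply budget fits in the window."""
    return estimate_tokens(text) + reserved_for_output <= CONTEXT_WINDOW_TOKENS


def split_into_chunks(text, max_tokens):
    """Group paragraphs into chunks of at most max_tokens (estimated).

    A single paragraph larger than max_tokens is kept whole as its own chunk.
    """
    chunks, current, current_tokens = [], [], 0
    for paragraph in text.split("\n\n"):
        cost = estimate_tokens(paragraph)
        if current and current_tokens + cost > max_tokens:
            chunks.append("\n\n".join(current))
            current, current_tokens = [], 0
        current.append(paragraph)
        current_tokens += cost
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

If `fits_in_context` returns `False`, the document can be split with `split_into_chunks` and summarized piece by piece.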

Multilingual Proficiency

The model exhibits strong cross-lingual capabilities, enabling robust performance in tasks ranging from summarization to machine translation in multiple languages.

Open-Source Licensing

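The DeepSeek‑V3 code is distributed under the MIT license, while the original V3 weights fall under the DeepSeek Model License, which allows commercial integration, research, and derivative development subject to its use restrictions. The V3‑0324 weights are MIT-licensed.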

Areas Where DeepSeek‑V3 Excels

- General-purpose text generation: Produces coherent, natural-language output for a variety of domains.

- Long-context summarization: Summarizes extensive text, retaining critical semantic information.

- Multilingual tasks: Supports language-specific tasks, including translation, sentiment analysis, and question answering.

- Basic reasoning tasks: Performs straightforward logical and mathematical operations effectively.

Limitations of DeepSeek‑V3

Despite its strengths, users have reported certain shortcomings:

- Reasoning capabilities are weaker than later versions, especially in multi-step logic tasks.

- Coding struggles with multi-file or modular projects, often requiring manual integration.

- Function calling is rudimentary, limiting automated API or structured output tasks.

These limitations set the stage for the enhanced DeepSeek‑V3‑0324 version.

DeepSeek-V3-0324: Updates, Enhancements, and Improvements

Released in March 2025, DeepSeek‑V3‑0324 builds on the foundation of V3 while introducing a variety of enhancements targeted at reasoning, coding, multilingual processing, and function calling.

What’s New in DeepSeek‑V3‑0324?

Enhanced Reasoning

DeepSeek‑V3‑0324 exhibits improved multi-step reasoning, with stronger logical coherence and chain-of-thought capabilities, making it better suited for complex pipelines.

Coding & Developer Tooling

The update improves multi-file code generation, unit test creation, and integration with development frameworks, leading to more production-ready outputs.

Language & Search Enhancements

- Improved Chinese language generation

- Enhanced information retrieval and knowledge search tasks

- Support for structured outputs in multi-language contexts

Function Calling Improvements

V3‑0324 provides more accurate structured outputs, suitable for APIs, chat assistants, and applications requiring precise data handling.
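Whichever version is used, structured output is only useful if the application checks it before acting on it. The sketch below shows one way to validate a model-produced tool call. It assumes an OpenAI-style `tools` definition; the `get_weather` tool, its fields, and the validator itself are hypothetical and cover only the few JSON types used here.

```python
import json

# Hypothetical tool definition in the OpenAI-style "tools" format.
GET_WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Look up current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {
                "city": {"type": "string"},
                "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
            },
            "required": ["city"],
        },
    },
}

# Minimal type map; a full JSON Schema validator handles far more cases.
JSON_TYPES = {"string": str, "boolean": bool, "object": dict, "array": list}


def validate_tool_call(tool, raw_arguments):
    """Return (arguments, errors) for a model-produced JSON argument string."""
    try:
        args = json.loads(raw_arguments)
    except json.JSONDecodeError as exc:
        return None, [f"invalid JSON: {exc.msg}"]
    if not isinstance(args, dict):
        return None, ["arguments must be a JSON object"]

    schema = tool["function"]["parameters"]
    errors = []
    for name in schema.get("required", []):
        if name not in args:
            errors.append(f"missing required field: {name}")
    for name, value in args.items():
        spec = schema["properties"].get(name)
        if spec is None:
            errors.append(f"unexpected field: {name}")
            continue
        expected = JSON_TYPES.get(spec.get("type"))
        if expected and not isinstance(value, expected):
            errors.append(f"wrong type for field: {name}")
        elif "enum" in spec and value not in spec["enum"]:
            errors.append(f"value not allowed for field: {name}")
    return args, errors
```

Tracking how often `errors` comes back non-empty for each model gives a simple, application-specific measure of function-calling accuracy.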

Architecture Consistency

While retaining the 671B MoE parameters and 128K token context window, V3‑0324 is optimized for real-world performance, balancing efficiency and accuracy.

Side-by-Side Technical Comparison: DeepSeek‑V3 vs DeepSeek-V3-0324

| Feature | DeepSeek‑V3 | DeepSeek‑V3‑0324 |
| --- | --- | --- |
| Release Date | Dec 2024 | Mar 2025 |
| Architecture | MoE 671B | MoE 671B |
| Context Window | 128K tokens | 128K tokens |
| Reasoning Quality | Good | Enhanced |
| Coding Performance | Standard | Improved |
| Multi-Language Strength | Strong | Upgraded |
| Function Calling Accuracy | Basic | Higher |
| Token Economy | Efficient | Slightly more verbose |

Key Insight: V3‑0324 retains the MoE architecture but elevates reasoning, coding, and multilingual capabilities, delivering superior performance across extended contexts.

DeepSeek Benchmarks 2025: Coding and Reasoning

Coding Benchmarks

- Fewer syntax and logic errors

- Enhanced modularity for multi-file projects

- Reported to outperform proprietary competitors such as Claude 3.7 Sonnet on some Python, TypeScript, and React tasks

Reasoning Benchmarks

- Stronger logic retention for multi-step math and logic problems

- Improved context handling for long-form reasoning tasks

- V3‑0324 surpasses V3 in advanced reasoning benchmarks

Verbosity Differences

- V3‑0324 produces approximately 31.8% more tokens in explanation-heavy scenarios

- Trade-off: Higher token usage versus richer, more detailed outputs
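To see what the verbosity difference means for a budget, the arithmetic can be sketched as follows. The 31.8% overhead comes from the figure above; the monthly token volume and the per-million-token price are hypothetical placeholders, not published rates.

```python
VERBOSITY_OVERHEAD = 0.318  # "approximately 31.8% more tokens" from the text


def projected_output_tokens(baseline_tokens, overhead=VERBOSITY_OVERHEAD):
    """Estimate output tokens after applying the verbosity overhead."""
    return round(baseline_tokens * (1 + overhead))


def projected_cost(output_tokens, price_per_million):
    """Cost of the given number of output tokens at a per-million-token price."""
    return output_tokens / 1_000_000 * price_per_million


baseline_tokens = 10_000_000  # hypothetical monthly output volume on V3
price_per_million = 1.00      # hypothetical price, in any currency

v3_cost = projected_cost(baseline_tokens, price_per_million)
v3_0324_tokens = projected_output_tokens(baseline_tokens)
v3_0324_cost = projected_cost(v3_0324_tokens, price_per_million)
print(f"V3: {v3_cost:.2f}, V3-0324: {v3_0324_cost:.2f}")
```

Under these placeholder numbers, 10,000,000 baseline tokens become 13,180,000. The overhead applies to explanation-heavy scenarios, so short or tightly constrained outputs may see a smaller difference.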

DeepSeek vs Proprietary Models: Perspective

| Model | Strength | Weakness | Cost & Accessibility |
| --- | --- | --- | --- |
| GPT‑4.5 | Superior reasoning & text generation | Closed-source, expensive | Paid API only |
| Claude Series | Safe, structured outputs | Limited customization | Paid API |
| DeepSeek‑V3‑0324 | Open-source, strong coding, reasoning, multi-language | Slightly verbose | Free MIT license |

Takeaway: Open-source DeepSeek‑V3‑0324 is competitive with proprietary models for coding, reasoning, and multilingual tasks, while remaining freely accessible.

Practical Use Cases: Choosing the Right Version

Use DeepSeek‑V3 if:

- You require lean, cost-efficient outputs

- You handle high-volume, token-sensitive tasks

- Your reasoning and coding workflows are simple

Use DeepSeek‑V3‑0324 if:

- You are undertaking complex reasoning or multi-step logic tasks

- You work on advanced coding or multi-module projects

- You need high function-calling accuracy and stronger multilingual output

One practical option is a dual-deployment strategy: V3 for lightweight tasks, V3‑0324 for deep reasoning and complex code automation.
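A dual-deployment setup needs some rule for deciding which model handles a request. The sketch below shows one simple rule based on the criteria listed above. The model identifiers and the `Task` fields are hypothetical; substitute whatever names and signals your serving stack exposes.

```python
from dataclasses import dataclass

# Hypothetical deployment names, not official API model identifiers.
LIGHTWEIGHT_MODEL = "deepseek-v3"
REASONING_MODEL = "deepseek-v3-0324"


@dataclass
class Task:
    prompt: str
    needs_multi_step_reasoning: bool = False
    touches_multiple_files: bool = False
    uses_function_calling: bool = False


def choose_model(task):
    """Send demanding tasks to V3-0324 and everything else to V3."""
    demanding = (
        task.needs_multi_step_reasoning
        or task.touches_multiple_files
        or task.uses_function_calling
    )
    return REASONING_MODEL if demanding else LIGHTWEIGHT_MODEL


print(choose_model(Task("Summarize this paragraph.")))
print(choose_model(Task("Refactor the billing module.", touches_multiple_files=True)))
```

Keeping the rule in one function makes it easy to adjust later, for example after measuring where the cheaper model is good enough.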

Pros & Cons

DeepSeek‑V3

Pros:

- Open-source, with commercial use permitted

- Efficient token usage

- Strong multilingual support

- General-purpose applications

Cons:

- Limited reasoning capabilities

- Basic function calling

- Struggles with complex coding scenarios

DeepSeek‑V3‑0324

Pros:

- Enhanced reasoning and coding capabilities

- Better handling of Chinese & multilingual outputs

- Higher function-calling precision

- Superior benchmark performance

Cons:

- Slightly more verbose outputs

- Occasional repetition in explanations

- Minor hallucination risk remains

DeepSeek Context Window & Architecture

Sparse Mixture-of-Experts (MoE)

- Selective expert activation → Efficient model scaling

- Expands effective parameter space → Improved contextual understanding

128K Token Context Window

- Ideal for book-length summarization or long research papers

- Supports legal, academic, or technical documents

- Optimizes codebase analysis and advanced retrieval tasks

Applications leveraging the full context window experience significant improvements in reasoning and comprehension.

DeepSeek V3 Coding Performance: Detailed Analysis

Logical Accuracy

- Cleaner logic for algorithms, data structures, and system design

- Reduces post-generation corrections

Testing Integration

- Accurate unit test generation

- Supports interface definitions and testing pipelines

Team Collaboration

- Modularized outputs align with human coding standards

- Reduces review cycles in automated workflows

Real-World Examples

- Multi-File Project Generation:

  - V3: Snippets may need manual integration

  - V3‑0324: Generates connected modules ready for deployment

- Multilingual Summarization:

  - V3: Chinese outputs may have inconsistencies

  - V3‑0324: Accurate, fluent, contextually correct outputs

- API Function Calling:

  - V3: Basic JSON output

  - V3‑0324: Precise, structured responses compatible with assistant APIs

FAQs

A: Same architecture, but V3‑0324 delivers superior reasoning, coding, multilingual processing, and function calling accuracy.

A: Absolutely, especially for complex reasoning, advanced coding, or long-form generation.

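A: Both are free for commercial and academic use. The V3‑0324 weights are MIT-licensed; the original V3 pairs MIT-licensed code with the DeepSeek Model License for its weights.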

A: They are competitive in long-context, reasoning, and coding tasks, with the advantage of open-source freedom.

A: V3 is more token-efficient, whereas V3‑0324 prioritizes accuracy and depth over token economy.

Conclusion

In the DeepSeek‑V3 vs V3‑0324 comparison:

- DeepSeek‑V3: Efficient, cost-effective, strong for general-purpose and lightweight coding.

- DeepSeek‑V3‑0324: Superior reasoning, code generation, and long-context performance.

Recommendation:

- Use V3 for routine, high-volume tasks

- Use V3‑0324 for complex reasoning, multi-step logic, advanced coding, and multilingual workflows

Next Steps for Developers

- Read the official model cards and check for updates

- Benchmark V3‑0324 on real codebases

- Optimize token usage vs output depth

- Evaluate function-calling performance in practical applications
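For the benchmarking steps above, a small offline harness is often enough to get started. The sketch below compares two models on pass rate and response length. The two "models" are canned stub functions so the example runs without network access; in practice each would wrap a call to a deployed model. The test case and the JSON check are illustrative assumptions.

```python
import json


def evaluate(model_fn, cases):
    """Return pass rate and mean response length (characters) for one model."""
    passed, total_chars = 0, 0
    for prompt, check in cases:
        response = model_fn(prompt)
        total_chars += len(response)
        if check(response):
            passed += 1
    return {
        "pass_rate": passed / len(cases),
        "mean_chars": total_chars / len(cases),
    }


def is_json_object_with(*keys):
    """Build a check that passes when the response is a JSON object with keys."""
    def check(response):
        try:
            data = json.loads(response)
        except json.JSONDecodeError:
            return False
        return isinstance(data, dict) and all(k in data for k in keys)
    return check


# Stand-in models: canned replies, not real model output.
def stub_model_a(prompt):
    return '{"city": "Paris"}'


def stub_model_b(prompt):
    return "Sure! The city is Paris."


cases = [
    ("Extract the city as JSON from: 'Flights to Paris'", is_json_object_with("city")),
]

report = {
    name: evaluate(fn, cases)
    for name, fn in [("model_a", stub_model_a), ("model_b", stub_model_b)]
}
print(report)
```

Reporting length alongside pass rate keeps the token usage versus output depth trade-off visible while comparing accuracy.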

Together, these models position DeepSeek as a leading open-source AI ecosystem, bridging the gap between academic research and commercial AI deployment.