Introduction

Artificial intelligence is advancing at an unprecedented pace. Foundation models are iterating rapidly, architectures are becoming more efficient, and inference optimization techniques are reshaping deployment economics. Yet sometimes the most complex decision is not choosing between two different AI companies; it is choosing between two sophisticated models developed by the same research lab.

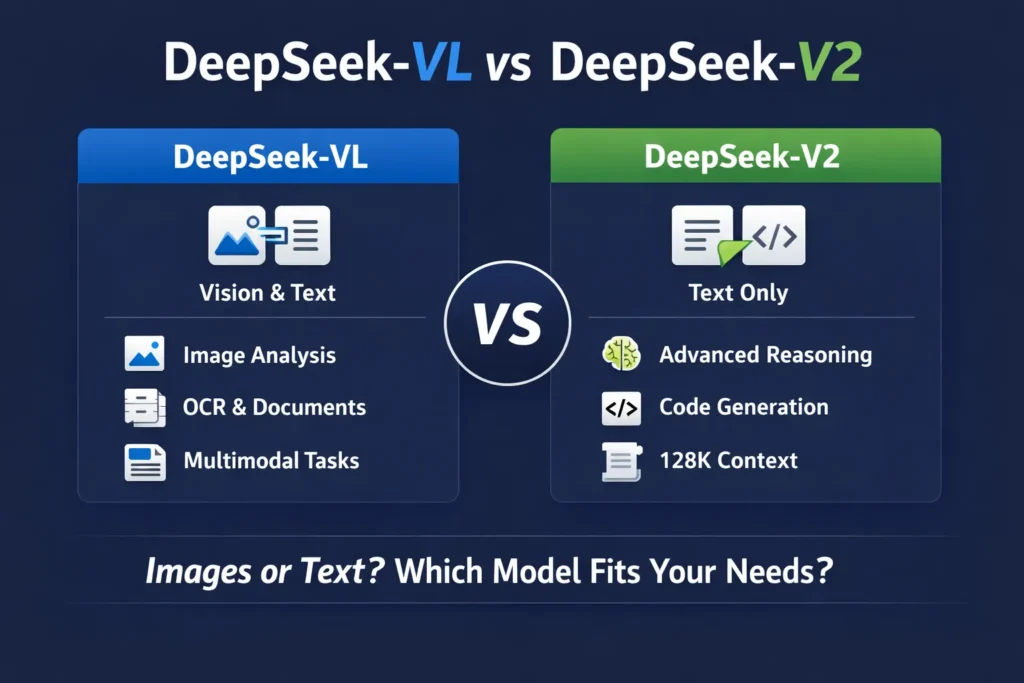

That is precisely the case with DeepSeek and its two flagship systems:

- DeepSeek-VL

- DeepSeek-V2

DeepSeek-V2 and the newer DeepSeek-VL2 both integrate attention optimizations such as Multi-Head Latent Attention (MLA).

However, they are engineered for fundamentally different problem spaces.

This 2026 definitive guide will provide a deep, NLP-focused, architecture-level breakdown of DeepSeek-VL vs DeepSeek-V2, covering:

- Model objectives and design philosophy

- Multimodal representation learning vs pure text modeling

- Mixture-of-Experts (MoE) explained

- Multi-Head Latent Attention (MLA) memory optimization

- Dynamic tiling in vision encoders

- Context window scaling

- Benchmark tendencies

- Practical enterprise deployment use cases

- Comparative pros and cons

- Final strategic recommendation

If you are a developer, startup founder, machine learning engineer, AI architect, or enterprise decision-maker, this guide will help you align the right DeepSeek model with your computational workflow.

Understanding the DeepSeek Ecosystem

Before conducting a head-to-head evaluation of DeepSeek-VL vs DeepSeek-V2, it is essential to understand the broader research philosophy behind DeepSeek.

Unlike some AI labs that pursue monolithic “one-model-for-all” dense transformer scaling, DeepSeek emphasizes:

- Sparse activation efficiency

- Cost-optimized training

- Long-context processing

- Modular architecture

- Inference scalability

- Practical deployment economics

Instead of activating every parameter for every token (as dense models do), DeepSeek’s MoE-based systems selectively activate only relevant subnetworks. This significantly reduces active parameter load per inference step while preserving large total parameter capacity.

In short:

- DeepSeek-VL is purpose-built for multimodal cognition.

- DeepSeek-V2 is engineered for advanced natural language reasoning and structured generation.

They are not direct competitors.

They are specialized instruments.

But depending on your application domain, one will clearly outperform the other.

What Is DeepSeek-VL?

DeepSeek-VL Explained in NLP Terms

DeepSeek-VL is a Vision-Language Model (VLM). In NLP research terminology, this means it performs multimodal representation alignment between:

- Visual embeddings (pixel-derived features)

- Textual token embeddings (subword or token-level representations)

Unlike pure Large Language Models (LLMs), which operate exclusively on textual sequences, DeepSeek-VL integrates a vision encoder with a transformer-based language decoder. The system learns a shared embedding space in which visual semantics and linguistic semantics are co-embedded.

In simpler terms:

It can reason across both modalities simultaneously.

For example:

You upload a financial chart and ask:

“What trend does this chart demonstrate over the last quarter?”

DeepSeek-VL processes pixel-level features, extracts structured representations, and generates a natural language explanation grounded in visual context.

That is multimodal reasoning.

What Does “Vision-Language” Actually Mean?

In NLP architecture, a vision-language model learns cross-modal alignment between:

- Spatial feature maps (CNN or ViT-derived)

- Transformer token sequences

- Attention-based cross-modal fusion layers

This enables tasks such as:

- Visual Question Answering (VQA)

- Image caption generation

- Scene description

- OCR-style document interpretation

- Chart comprehension

- Infographic explanation

- Multimodal retrieval

This is where DeepSeek-VL vs DeepSeek-V2 diverges dramatically:

DeepSeek-V2 cannot process images at all. It is text-only.

Core Capabilities of DeepSeek-VL

Let’s examine the functional strengths of the system.

Visual Question Answering (VQA)

VQA requires joint reasoning over:

- Visual region embeddings

- Natural language queries

- Contextual alignment mechanisms

DeepSeek-VL can:

- Encode image patches.

- Map them into latent feature space.

- Attend to relevant regions.

- Generate semantically coherent responses.

Example:

Upload a medical X-ray.

Ask: “Is there evidence of a fracture?”

The model analyzes shape irregularities and returns a linguistic inference.
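To make the "attend to relevant regions" step more concrete, here is a minimal sketch of scaled dot-product attention from a single question vector over a handful of image-patch embeddings. The two-dimensional vectors and the `attend` helper are hypothetical toy values for illustration; this is not DeepSeek-VL's actual code, which works with learned high-dimensional embeddings and many attention heads.

```python
import math


def attend(query, patch_embeddings):
    """Scaled dot-product attention of one query vector over patch embeddings.

    Returns (weights, context): one weight per patch, and the weighted
    average of the patch embeddings.
    """
    scale = math.sqrt(len(query))
    scores = [
        sum(q * p for q, p in zip(query, patch)) / scale
        for patch in patch_embeddings
    ]
    # Numerically stable softmax over the patch scores.
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    # Context vector: attention-weighted sum of the patch embeddings.
    context = [
        sum(w * patch[d] for w, patch in zip(weights, patch_embeddings))
        for d in range(len(query))
    ]
    return weights, context


# Hypothetical toy data: three patches and one question vector.
patches = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
question = [2.0, 0.0]
weights, context = attend(question, patches)
```

In this toy setup the question vector points along the first dimension, so the first and third patches receive most of the weight. The resulting context vector stands in for what a language decoder would condition on when generating its answer.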

OCR-Like Document Intelligence

DeepSeek-VL supports structured document understanding.

This includes:

- Invoice parsing

- Receipt extraction

- Contract summarization

- Form digitization

- Table extraction

It combines visual parsing with text generation, making it ideal for enterprise automation workflows in:

- Legal technology

- Insurance processing

- Accounting automation

- Compliance review

Chart and Graph Interpretation

Chart reasoning requires:

- Axis detection

- Label extraction

- Trend analysis

- Quantitative inference

DeepSeek-VL demonstrates strong multimodal grounding in:

- Line charts

- Bar graphs

- Pie charts

- Time-series visualizations

This is particularly useful in business analytics dashboards and investor reporting systems.

Multimodal Reasoning

This is its strongest differentiator.

DeepSeek-VL can integrate:

- An image

- A text prompt

- External contextual instructions

For example:

A product image + customer review + question.

The model reasons across all three input channels.

This cross-modal inference capability makes it highly valuable in real-world AI systems.

DeepSeek-VL2 — Architectural Evolution

DeepSeek enhanced its original multimodal model into a more optimized system commonly referred to as VL2.

This upgrade introduces major architectural innovations.

Dynamic Tiling Vision Encoding

High-resolution images consume substantial GPU memory due to large spatial dimensions.

Dynamic tiling addresses this by:

- Segmenting images into adaptive regions

- Allocating attention only to salient areas

- Reducing redundant spatial computation

Benefits:

- Faster inference latency

- Higher detail preservation

- Reduced memory footprint

- Lower GPU cost

Dynamic tiling enhances scalability for enterprise-grade document processing systems.
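As a rough illustration of the segmentation idea, the sketch below picks a tile grid that matches an image's aspect ratio and caps the number of tiles, so small images get few tiles and large ones get more. The 384-pixel tile size, the nine-tile cap, and the function names are assumptions chosen for the example, not confirmed details of DeepSeek's implementation, and the sketch does not model any saliency-based selection.

```python
import math


def choose_grid(width, height, tile=384, max_tiles=9):
    """Pick (cols, rows) whose aspect ratio is closest to the image's.

    The grid never uses more tiles per side than the image resolution
    warrants, and never more than max_tiles in total.
    """
    target = width / height
    max_cols = max(1, math.ceil(width / tile))
    max_rows = max(1, math.ceil(height / tile))
    candidates = [
        (cols, rows)
        for cols in range(1, max_cols + 1)
        for rows in range(1, max_rows + 1)
        if cols * rows <= max_tiles
    ]
    # Closest aspect ratio wins; ties go to the grid with more tiles.
    return min(candidates, key=lambda g: (abs(g[0] / g[1] - target), -g[0] * g[1]))


def tile_boxes(width, height, tile=384, max_tiles=9):
    """Return (left, top, right, bottom) boxes in the resized image's coordinates."""
    cols, rows = choose_grid(width, height, tile, max_tiles)
    return [
        (c * tile, r * tile, (c + 1) * tile, (r + 1) * tile)
        for r in range(rows)
        for c in range(cols)
    ]


# A wide 1600x800 scan and a small 300x200 thumbnail get different grids.
wide_grid = choose_grid(1600, 800)
small_grid = choose_grid(300, 200)
```

Each box would then be cropped from the resized image and passed to the vision encoder as a fixed-size input, which keeps per-tile cost constant regardless of the original resolution.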

Mixture-of-Experts (MoE) Backbone

VL2 integrates a sparse MoE transformer backbone.

MoE architecture includes:

- Multiple expert subnetworks

- A gating mechanism

- Selective expert activation

Instead of activating all parameters, the model routes tokens to the most relevant experts.

Advantages:

- Increased model capacity

- Reduced active parameter count

- Improved efficiency-per-token

- Lower inference cost

This is a major reason why DeepSeek-VL2 is competitive in production environments.
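The gating and selective activation described in this section can be sketched in a few lines. The example below is a simplified top-k router in plain Python: the toy experts, the logits, and the helper names are all hypothetical, and real MoE layers add details (such as load balancing and shared experts) that are left out here.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, top_k=2):
    """Return [(expert_index, weight), ...] for the top_k experts of one token.

    router_logits holds one score per expert, as a gating layer would produce.
    The weights of the selected experts are renormalized to sum to 1.
    """
    probs = softmax(router_logits)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    chosen = ranked[:top_k]
    mass = sum(probs[i] for i in chosen)
    return [(i, probs[i] / mass) for i in chosen]


def moe_layer(token, experts, router_logits, top_k=2):
    """Combine the outputs of only the selected experts (sparse activation)."""
    output = [0.0] * len(token)
    for index, weight in route_token(router_logits, top_k):
        expert_out = experts[index](token)  # unselected experts are never called
        output = [o + weight * e for o, e in zip(output, expert_out)]
    return output


# Hypothetical toy experts: each one simply scales the token vector.
experts = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]
logits = [0.1, 2.0, 0.3, 1.5]
selected = route_token(logits, top_k=2)
mixed = moe_layer([1.0, -1.0], experts, logits, top_k=2)
```

With four experts and `top_k=2`, only half of the expert parameters are touched for this token, which is the source of the reduced active parameter count listed above.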

Multi-Head Latent Attention (MLA)

MLA is one of DeepSeek’s most impactful innovations.

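In standard multi-head attention, the key-value (KV) cache grows linearly with sequence length and with the number of layers and heads, so it becomes a major memory bottleneck during long-context inference. The attention computation itself scales quadratically with sequence length.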

MLA introduces:

- Latent compression of key-value pairs

- Reduced memory overhead

- More scalable long-context inference

In practical terms:

- Lower VRAM usage

- Faster decoding

- More efficient long multimodal sessions

For multimodal AI workloads, this is highly advantageous.
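A back-of-the-envelope calculation shows why compressing the key-value cache matters. The sketch below compares the cache size of standard multi-head attention with a scheme that stores one compressed latent vector (plus a small positional component) per token and layer. All dimensions are illustrative assumptions rather than official specifications, and the function names are hypothetical.

```python
def kv_cache_bytes_mha(seq_len, n_layers, n_heads, head_dim, bytes_per_value=2):
    """KV cache for standard multi-head attention.

    Every token stores one key and one value vector per head, per layer.
    """
    per_token = 2 * n_heads * head_dim
    return seq_len * n_layers * per_token * bytes_per_value


def kv_cache_bytes_latent(seq_len, n_layers, latent_dim, rope_dim, bytes_per_value=2):
    """KV cache when each token stores a compressed latent per layer.

    latent_dim is the size of the shared compressed key-value vector and
    rope_dim is a small extra component carrying positional information.
    """
    per_token = latent_dim + rope_dim
    return seq_len * n_layers * per_token * bytes_per_value


# Illustrative dimensions (assumptions for this example), 16-bit values.
config = dict(seq_len=32_768, n_layers=60)
mha = kv_cache_bytes_mha(n_heads=128, head_dim=128, **config)
latent = kv_cache_bytes_latent(latent_dim=512, rope_dim=64, **config)

print(f"standard MHA cache: {mha / 2**30:.1f} GiB")
print(f"latent cache:       {latent / 2**30:.2f} GiB")
print(f"reduction factor:   {mha / latent:.1f}x")
```

Both functions are linear in `seq_len`, so the reduction factor stays the same at any context length; what changes is whether the absolute cache size still fits in GPU memory.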

What Is DeepSeek-V2?

Now let’s analyze the second contender.

DeepSeek-V2 is a pure Large Language Model (LLM).

It does not accept visual input.

It does not process image embeddings.

Instead, it focuses entirely on:

- Text comprehension

- Logical reasoning

- Code synthesis

- Mathematical inference

- Long-context dialogue

- Structured generation

For text-heavy applications, V2 is a powerful architecture.

DeepSeek-V2 Architecture Explained

Mixture-of-Experts (MoE)

DeepSeek-V2 uses sparse MoE scaling.

Dense transformer:

- Activates all parameters per token.

MoE transformer:

- Contains multiple experts.

- Uses a router to select a subset.

- Activates only relevant subnetworks.

Result:

- Massive total parameter count.

- Smaller active parameter footprint.

- Higher efficiency-to-performance ratio.

This allows DeepSeek-V2 to achieve strong reasoning benchmarks while maintaining cost efficiency.
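The gap between total and active parameters is simple arithmetic. The sketch below uses made-up sizes for a simplified model in which every token passes through some shared parameters plus a fixed number of routed experts; the numbers and the function name are hypothetical and chosen only to show the calculation. For reference, DeepSeek reports 236B total parameters for DeepSeek-V2, with about 21B activated per token.

```python
def moe_parameter_counts(shared_params, params_per_expert, n_experts, experts_per_token):
    """Return (total, active) parameter counts for a simplified MoE model.

    shared_params covers everything each token always uses (attention,
    embeddings, and so on); only experts_per_token experts run per token.
    """
    total = shared_params + n_experts * params_per_expert
    active = shared_params + experts_per_token * params_per_expert
    return total, active


# Hypothetical sizes: 2B shared, 64 experts of 0.5B each, 6 experts per token.
total, active = moe_parameter_counts(
    shared_params=2_000_000_000,
    params_per_expert=500_000_000,
    n_experts=64,
    experts_per_token=6,
)
print(f"total parameters:  {total / 1e9:.0f}B")
print(f"active per token:  {active / 1e9:.0f}B")
print(f"active fraction:   {active / total:.1%}")
```

Compute per token tracks the active count, while model capacity tracks the total, which is why the two numbers are quoted separately for MoE models.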

128K Context Window

One of DeepSeek-V2’s defining strengths is its extended context window of up to 128,000 tokens.

This enables:

- Full legal document analysis

- Large research paper ingestion

- Multi-file codebase reasoning

- Extended conversational memory

- Complex multi-step reasoning chains

In NLP terms, longer context increases:

- Cross-document coherence

- Discourse continuity

- Global dependency modeling

DeepSeek-VL does not match this for pure text scalability.
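In practice, a long context window still needs a budget check before a request is sent. The sketch below estimates token counts and greedily packs documents into batches that stay under a 128K limit. The four-characters-per-token heuristic, the output reserve, and the helper names are assumptions for illustration; a real pipeline would count tokens with the model's own tokenizer.

```python
def estimate_tokens(text, chars_per_token=4.0):
    """Very rough token estimate; swap in a real tokenizer for production use."""
    return int(len(text) / chars_per_token) + 1


def fits_in_context(documents, context_window=128_000, reserved_for_output=4_000):
    """Return (fits, used, budget) for sending all documents in one request."""
    budget = context_window - reserved_for_output
    used = sum(estimate_tokens(doc) for doc in documents)
    return used <= budget, used, budget


def split_into_batches(documents, context_window=128_000, reserved_for_output=4_000):
    """Greedily pack documents into batches that each stay within the budget.

    A single document larger than the budget still gets its own batch and
    would need further splitting, which this sketch does not attempt.
    """
    budget = context_window - reserved_for_output
    batches, current, used = [], [], 0
    for doc in documents:
        cost = estimate_tokens(doc)
        if current and used + cost > budget:
            batches.append(current)
            current, used = [], 0
        current.append(doc)
        used += cost
    if current:
        batches.append(current)
    return batches


# Three hypothetical documents of 200,000 characters each.
docs = ["a" * 200_000, "b" * 200_000, "c" * 200_000]
fits, used, budget = fits_in_context(docs)
batches = split_into_batches(docs)
```

Here the three documents together exceed the budget, so they are split into two requests instead of one.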

Multi-Head Latent Attention (MLA)

DeepSeek-V2 also integrates MLA.

This yields:

- Reduced key-value cache memory

- Faster inference speed

- Lower computational overhead

- Improved token throughput

For startups and enterprises concerned with API cost, this matters significantly.

DeepSeek-VL vs DeepSeek-V2 — Head-to-Head Comparison

| Feature | DeepSeek-VL / VL2 | DeepSeek-V2 |
| --- | --- | --- |
| Primary Modality | Vision + Text | Text Only |
| Architecture | Vision Encoder + MoE LLM | Sparse MoE Transformer |
| Image Understanding | ✅ Yes | ❌ No |
| Long Context | Moderate | Up to 128K tokens |
| Coding Ability | Limited | Strong |
| OCR Capability | Yes | No |
| Memory Efficiency | High (MLA) | Very High (MLA + MoE) |
| Best For | Multimodal AI | Text reasoning & coding |

This table highlights the structural divergence in DeepSeek-VL vs DeepSeek-V2.

Real-World Use Case Comparison

Scenario 1: Financial Chart Analysis Platform

Requirement:

- Interpret graphs

- Extract trends

- Provide textual summaries

Winner: DeepSeek-VL

V2 cannot process images, so it is not an option here.

Scenario 2: AI Coding Assistant

Requirement:

- Debugging

- Refactoring

- Code completion

- Multi-file analysis

Winner: DeepSeek-V2

Its 128K context window enables repository-level reasoning.

Scenario 3: 200-Page Legal Document Summarization

Winner: DeepSeek-V2

Extended context modeling supports large-scale document ingestion.

Scenario 4: Invoice & Receipt Automation

Winner: DeepSeek-VL2

Multimodal extraction gives it the advantage.

Benchmarks & Performance Trends

While benchmark scores vary by dataset, general trends indicate:

DeepSeek-V2 excels in:

- Logical reasoning evaluations

- Mathematical problem solving

- Coding benchmarks

- Textual comprehension

DeepSeek-VL2 excels in:

- Visual Question Answering

- Chart reasoning

- Document parsing

- Multimodal grounding

In simplified terms:

If the task is text-dominant → V2 performs better.

If the task involves imagery → VL2 dominates.

DeepSeek-VL Pros & Cons

Pros

- Integrated image-text processing

- Strong multimodal reasoning

- Effective document OCR capability

- Efficient sparse architecture

- Improved scalability in VL2

Cons

- Not optimized for advanced coding

- Shorter pure-text context window

- Less ideal for large-scale textual analytics

DeepSeek-V2 Pros & Cons

Pros

- Excellent analytical reasoning

- Long 128K context window

- Robust code generation

- Efficient sparse activation

- Cost-effective inference scaling

Cons

- Cannot interpret images

- Not suitable for multimodal pipelines

- Requires prompt structuring for optimal results

Vision-Language vs Pure Language Models: Strategic Perspective

The debate between DeepSeek-VL vs DeepSeek-V2 represents a broader AI industry question:

Multimodal intelligence vs pure language intelligence.

Multimodal models are superior for:

- Human-like perception

- Visual automation

- Enterprise document digitization

- Real-world sensory integration

Pure language models excel in:

- Logical deduction

- Algorithmic reasoning

- Programming assistance

- Long-form generation

- Knowledge synthesis

The future likely involves hybrid integration of both.

But in 2026, your application domain determines the optimal choice.

Which DeepSeek Model Should You Choose in 2026?

There is no universal champion.

Choose DeepSeek-VL / VL2 if:

- Your application requires visual understanding

- You process invoices, forms, or receipts

- You build multimodal assistants

- You analyze charts or dashboards

Choose DeepSeek-V2 if:

- You need deep textual reasoning

- You analyze long documents

- You develop AI coding tools

- You require cost-efficient, large-scale NLP processing

The superior model is the one aligned with your computational objectives.
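The selection criteria above can be written down as a small decision helper. This is only a sketch of the guide's own rules of thumb: the `Workload` fields, the returned labels, and the function name are hypothetical, and the strings are plain labels, not API model identifiers.

```python
from dataclasses import dataclass

V2_CONTEXT_WINDOW = 128_000  # upper bound for DeepSeek-V2 stated in this guide


@dataclass
class Workload:
    """Hypothetical description of an application's typical request."""
    has_images: bool = False
    estimated_tokens: int = 0


def choose_model(workload):
    """Return (model_label, reason) following the guide's decision criteria."""
    if workload.has_images:
        return "DeepSeek-VL2", "visual input requires a vision-language model"
    if workload.estimated_tokens > V2_CONTEXT_WINDOW:
        return "DeepSeek-V2", "text-only, but input must be split to fit 128K tokens"
    return "DeepSeek-V2", "text-only workload"


invoice_job = choose_model(Workload(has_images=True, estimated_tokens=2_000))
contract_job = choose_model(Workload(estimated_tokens=90_000))
archive_job = choose_model(Workload(estimated_tokens=400_000))
```

Checking for images first mirrors the point made throughout this comparison: modality is the hard constraint, and context length only matters once the workload is known to be text-only.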

Final Verdict: Who Wins?

In the comparison of DeepSeek-VL vs DeepSeek-V2:

- VL2 wins for multimodal AI systems.

- V2 wins for text-centric reasoning and code intelligence.

They serve different missions within the AI ecosystem.

If you are building:

- AI SaaS platform → likely V2

- Document automation product → VL2

- Research agent → V2

- Visual analytics engine → VL2

Your product architecture decides the winner.

FAQs

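Q: Is DeepSeek-V2 better than DeepSeek-VL?

A: Not necessarily. V2 is more powerful for text reasoning, while VL is stronger for multimodal tasks.

Q: Can DeepSeek-V2 process images?

A: No. It is a text-only large language model.

Q: What does DeepSeek-VL2 add over the original DeepSeek-VL?

A: VL2 introduces dynamic tiling, an MoE backbone, and Multi-Head Latent Attention for better efficiency and performance.

Q: Which model should I choose?

A: If you’re building chatbots or coding tools → V2. If you’re building a document or visual AI product → VL2.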

Conclusion

When comparing DeepSeek-VL and DeepSeek-V2, the most important realization is this:

They are not rivals.

They are specialized systems engineered for different computational domains.

Throughout this guide, we analyzed their architectures, NLP foundations, sparse MoE routing mechanisms, attention optimizations, multimodal alignment capabilities, and real-world deployment scenarios. The evidence clearly shows that the “winner” depends entirely on modality alignment and workload structure.

If Your Work Is Vision-Centric → DeepSeek-VL Wins

Choose DeepSeek-VL (especially VL2) if your system requires:

- Image understanding

- Document OCR-style automation

- Invoice and receipt extraction

- Chart and graph interpretation

- Multimodal reasoning across image + text

Its dynamic tiling encoder, MoE backbone, and Multi-Head Latent Attention architecture make it efficient for production-scale multimodal AI systems.