Introduction

In 2026, large language models (LLMs) are no longer judged on generative fluency alone. They are evaluated on efficiency, depth of context handling, compute cost, and domain-specific capability.

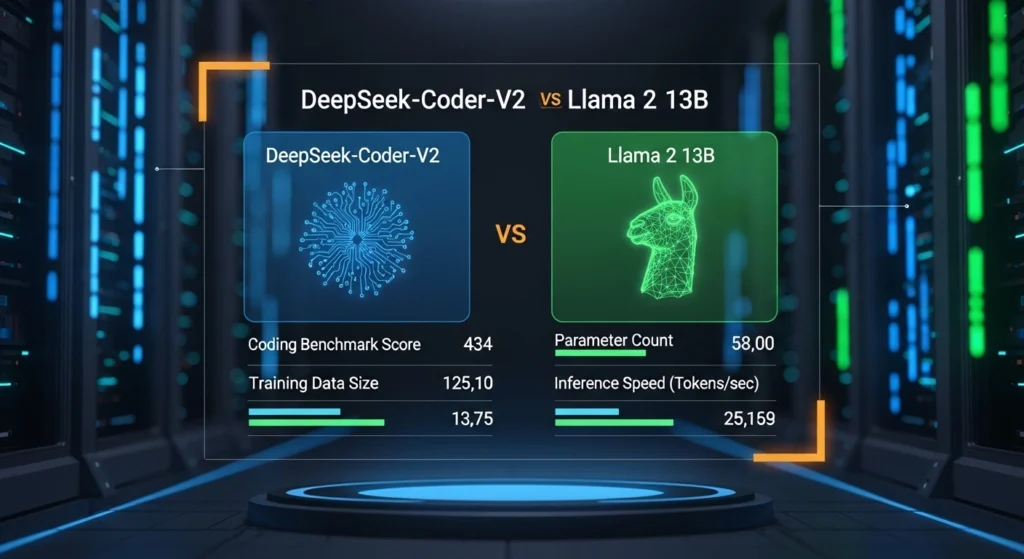

Within this paradigm shift, the comparison between DeepSeek-Coder-V2 and Llama 2 13B becomes particularly significant for developers, data scientists, and AI engineers aiming to build production-level applications.

Modern software engineering workflows now depend heavily on transformer-based neural architectures, and selecting the right model directly influences:

- Code synthesis accuracy

- Token-level inference efficiency

- Contextual memory retention

- API cost scaling

- Multi-file reasoning capability

- Repository-level comprehension

While Llama 2 13B is widely recognized as a foundational dense transformer model with broad natural language capabilities, DeepSeek-Coder-V2 introduces a more advanced paradigm based on Mixture-of-Experts (MoE) architecture, optimized specifically for programming-centric tasks and large-scale code intelligence.

For European AI startups, SaaS companies, and independent developers, this decision is not merely technical—it is strategic. It determines infrastructure cost, latency performance, and long-term scalability of AI-powered systems.

This article delivers a deep semantic analysis, benchmarking interpretation, architectural decomposition, and real-world applicability comparison to help you choose the optimal model for modern AI development pipelines.

What is DeepSeek-Coder-V2?

DeepSeek-Coder-V2 is a specialized code intelligence transformer model designed to maximize performance in programming-related natural language processing tasks. Unlike general-purpose LLMs, it is engineered with task-specific inductive bias toward code semantics, syntax modeling, and structural reasoning across programming languages.

Core Characteristics

- Supports 300+ programming and scripting languages

- Supports a context window of up to 128K tokens

- Built on a Mixture-of-Experts sparse activation architecture

- Optimized for:

  - Code generation (syntactic + semantic correctness)

  - Automated debugging pipelines

  - Multi-file dependency resolution

  - Repository-scale reasoning

  - Context-aware refactoring suggestions

Significance

DeepSeek-Coder-V2 relies on sparse expert routing: for each token, a gating network activates only a small subset of expert sub-networks instead of the whole model. This lowers the compute spent per token relative to a dense model of the same total size, while the full set of experts still contributes capacity for code generation tasks.

This makes it particularly effective for:

- Large-scale software repositories

- Microservice architecture understanding

- Multi-language interoperability systems

- DevOps automation pipelines

What is Llama 2 13B?

Llama 2 13B is a dense transformer-based foundational language model developed for general-purpose natural language understanding and generation tasks. It belongs to the second generation of Meta’s open-source LLM ecosystem.

Core Characteristics

- Contains 13 billion dense parameters

- Fixed ~4K token context window

- Fully activated neural network per inference cycle

- Trained on about 2 trillion tokens of predominantly English text

- Strong generalization across NLP tasks

Functional Strengths

Llama 2 13B performs exceptionally well in:

- Conversational AI systems

- Content generation pipelines

- Lightweight local inference environments

- Research prototyping

- Instruction-following NLP tasks

Limitation in Coding Domain

Despite its versatility, Llama 2 13B lacks:

- Code-focused pre-training and fine-tuning

- A context window long enough for large repositories

- Strong multi-step debugging ability

- Reliable syntax-aware code generation

Architecture Comparison: MoE vs Dense Transformers

Understanding architectural design is crucial for evaluating model efficiency and computational behavior.

DeepSeek-Coder-V2

DeepSeek-Coder-V2 is built on a sparse activation neural architecture, where only a subset of expert networks is activated during inference.

Implications:

- Uses only a fraction of the model's parameters for each token

- Improves inference efficiency

- Enhances scalability across distributed systems

- Reads fewer weights per token, which eases memory bandwidth pressure

Key Advantage:

Instead of running every parameter for every token, it routes each input token through a small number of specialized expert modules.
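To make the routing idea concrete, here is a minimal sketch of top-k expert gating in plain Python. It illustrates the general MoE technique only and is not DeepSeek's actual routing code: the eight toy experts, the gate scores, and the choice of `top_k=2` are all assumptions made for the example.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of gate scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(gate_scores, top_k=2):
    """Return (expert_index, weight) pairs for the top_k experts of one token."""
    probs = softmax(gate_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)  # renormalize over the chosen experts
    return [(i, probs[i] / norm) for i in chosen]

def moe_layer(token, gate_scores, experts, top_k=2):
    """Weighted sum of the outputs of the selected experts only."""
    return sum(w * experts[i](token) for i, w in route_token(gate_scores, top_k))

# Toy setup: 8 hypothetical "experts" that are just scalar functions.
experts = [lambda x, k=k: x * (k + 1) for k in range(8)]
gate_scores = [0.1, 2.0, -1.0, 0.3, 1.5, 0.0, -0.5, 0.2]

selected = route_token(gate_scores, top_k=2)
output = moe_layer(1.0, gate_scores, experts, top_k=2)
print(selected, output)
```

Only two of the eight experts run for this token; the other six are skipped entirely, which is where the per-token savings come from.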

Llama 2 13B

Llama 2 operates on a fully dense activation architecture, meaning every parameter contributes to every inference step.

Implications:

- Compute per token scales with the full 13 billion parameters

- Stable but less scalable performance

- Predictable but rigid inference patterns
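A rough way to compare the two designs is to count active parameters per token. The sketch below uses the common rule of thumb of about 2 FLOPs per active parameter for a forward pass. The parameter counts are the publicly reported figures for each model, not numbers taken from this article, so treat them as assumptions.

```python
def flops_per_token(active_params):
    """Rule-of-thumb forward-pass cost: about 2 FLOPs per active parameter."""
    return 2 * active_params

# Publicly reported parameter counts (assumptions for this sketch).
models = {
    "Llama 2 13B (dense)": {"total": 13e9, "active": 13e9},
    "DeepSeek-Coder-V2 (MoE)": {"total": 236e9, "active": 21e9},
    "DeepSeek-Coder-V2-Lite (MoE)": {"total": 16e9, "active": 2.4e9},
}

for name, p in models.items():
    share = p["active"] / p["total"]
    gflops = flops_per_token(p["active"]) / 1e9
    print(f"{name}: {share:.0%} of parameters active, ~{gflops:.1f} GFLOPs per token")
```

The dense model activates 100% of its weights on every step, while the MoE variants activate a small share of a much larger total. Per-token compute follows the active count, so the comparison depends on which DeepSeek-Coder-V2 variant is deployed.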

Architecture Comparison Table

| Feature | DeepSeek-Coder-V2 | Llama 2 13B |
| --- | --- | --- |
| Model Type | MoE Sparse Transformer | Dense Transformer |
| Compute Efficiency | High | Medium |
| Scalability | Excellent | Limited |
| Token Efficiency | Optimized | Uniform |
| Coding Specialization | Advanced | General |

Verdict: DeepSeek-Coder-V2 demonstrates superior architectural efficiency for code-centric workloads.

Coding Benchmarks & NLP Performance Evaluation

Benchmarking provides empirical validation of model capability in structured reasoning tasks.

DeepSeek-Coder-V2 Performance Profile

DeepSeek demonstrates strong performance across:

- HumanEval (code synthesis accuracy)

- MBPP (basic programming problem solving)

- GSM8K (grade-school math word problems requiring multi-step reasoning)

Interpretation:

The model excels in:

- Next-token prediction on source code

- Syntax-semantic alignment

- Multi-step logical decomposition
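Code benchmarks such as HumanEval and MBPP are commonly reported as pass@k: the probability that at least one of k sampled solutions passes the unit tests. The sketch below implements the widely used unbiased estimator. The sample counts in the usage lines are made up for illustration and are not results for either model.

```python
from math import comb

def pass_at_k(n, c, k):
    """Unbiased pass@k estimate for one problem.

    n: number of samples generated, c: number that passed the tests,
    k: number of samples the user is allowed to draw.
    """
    if n - c < k:
        return 1.0  # every draw of k samples must contain a passing one
    return 1.0 - comb(n - c, k) / comb(n, k)

# Hypothetical problem: 10 samples generated, 3 of them pass.
p1 = pass_at_k(n=10, c=3, k=1)
p5 = pass_at_k(n=10, c=3, k=5)
print(f"pass@1 = {p1:.3f}, pass@5 = {p5:.3f}")
```

A benchmark score is the mean of this value over all problems, so a model's pass@1 and pass@5 can differ widely on the same test set.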

Llama 2 13B Performance Profile

Llama 2 13B, while strong in general NLP tasks, exhibits:

- Lower performance in algorithmic reasoning tasks

- Reduced accuracy in multi-step code generation

- Limited debugging inference depth

Benchmark Summary Table

| Benchmark | DeepSeek-Coder-V2 | Llama 2 13B |
| --- | --- | --- |
| HumanEval | High accuracy | Low-medium |
| MBPP | Strong | Moderate |
| Code Reasoning | Advanced | Basic |
| Debugging | Excellent | Weak |

Conclusion: DeepSeek-Coder-V2 outperforms Llama 2 13B on the coding benchmarks above.

Context Window & Long-Form Memory Analysis

The context window determines how much text, such as source files and conversation history, a model can take into account in a single request.

Context Comparison

| Model | Context Length |
| --- | --- |
| DeepSeek-Coder-V2 | 128K tokens |
| Llama 2 13B | ~4K tokens |

Implications

DeepSeek enables:

- Full repository ingestion

- Multi-file dependency mapping

- Long-context reasoning chains

- Persistent memory simulation

Llama 2 is restricted to short-form interactions and limited document scope.
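The practical effect of the two limits can be sketched with a small context-budget packer. Everything here is an assumption made for illustration: the 4-characters-per-token heuristic (real tokenizers vary), the 512 tokens reserved for output, the use of 128,000 and 4,096 as the two limits, and the toy repository contents.

```python
CHARS_PER_TOKEN = 4  # rough heuristic (assumption); use a real tokenizer in practice

def estimate_tokens(text):
    """Approximate token count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)

def pack_files(files, context_limit, reserve_for_output=512):
    """Greedily add files, in the given order, until the prompt budget is used up."""
    budget = context_limit - reserve_for_output
    included, skipped, used = [], [], 0
    for path, source in files.items():
        cost = estimate_tokens(source)
        if used + cost <= budget:
            included.append(path)
            used += cost
        else:
            skipped.append(path)
    return included, skipped, used

# Toy repository with placeholder file contents.
repo = {
    "app/main.py": "x" * 12_000,    # about 3,000 tokens
    "app/models.py": "x" * 40_000,  # about 10,000 tokens
    "app/utils.py": "x" * 2_000,    # about 500 tokens
}

small = pack_files(repo, context_limit=4_096)
large = pack_files(repo, context_limit=128_000)
print("4K window:", small)
print("128K window:", large)
```

With the smaller limit the largest file has to be dropped or chunked, while the larger limit takes all three files with room to spare.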

Cost Efficiency & Economic Scalability

DeepSeek-Coder-V2

- Designed for low-cost inference scaling

- Optimized token-to-performance ratio

- Suitable for SaaS APIs and enterprise workloads

Llama 2 13B

- Weights are free to download under Meta's community license

- High infrastructure cost at scale

- GPU-intensive runtime behavior

Cost Comparison Table

| Factor | DeepSeek | Llama 2 |
| --- | --- | --- |
| API Cost | Low | N/A |
| Scaling Cost | Efficient | High |
| Infrastructure | Optimized | Heavy |

Economic Verdict: DeepSeek is more cost-efficient for production environments.
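Whether pay-per-token API pricing or a fixed GPU bill is cheaper depends on monthly volume. The sketch below computes the break-even point. The prices are placeholders chosen for the arithmetic, not quotes from any provider, and the function names are hypothetical.

```python
def api_cost(tokens_per_month, price_per_million_tokens):
    """Monthly spend for a pay-per-token API."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens

def self_hosted_cost(gpu_hourly_rate, gpus, hours_per_month=730):
    """Monthly spend for always-on GPUs, ignoring staff and storage costs."""
    return gpu_hourly_rate * gpus * hours_per_month

def break_even_tokens(price_per_million_tokens, gpu_hourly_rate, gpus):
    """Monthly token volume at which API spend equals the fixed GPU bill."""
    fixed = self_hosted_cost(gpu_hourly_rate, gpus)
    return fixed / price_per_million_tokens * 1_000_000

# Placeholder prices (assumptions): $0.50 per million tokens, $1.20 per GPU-hour.
PRICE = 0.50
GPU_RATE = 1.20

volume = break_even_tokens(PRICE, GPU_RATE, gpus=1)
print(f"Fixed GPU bill: ${self_hosted_cost(GPU_RATE, 1):,.2f} per month")
print(f"Break-even volume: {volume:,.0f} tokens per month")
```

Below the break-even volume the API is cheaper; above it, a self-hosted model can win, provided the hardware is kept busy.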

Latency & Inference Speed Analysis

DeepSeek-Coder-V2

- Sparse activation reduces computational overhead

- Faster token generation per second

- Optimized caching strategies

Llama 2 13B

- Full parameter activation increases latency

- Slower throughput under heavy workloads

DeepSeek achieves superior latency optimization and throughput scalability.
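Latency claims are easiest to verify by measuring tokens per second on your own prompts and hardware. Below is a minimal timing harness. The `fake_generate` function is a hypothetical stand-in for a real model call and should be replaced with whatever client or local runtime is actually in use.

```python
import time

def measure_throughput(generate, prompt, runs=3):
    """Return mean tokens per second for a callable that returns a list of tokens."""
    rates = []
    for _ in range(runs):
        start = time.perf_counter()
        tokens = generate(prompt)
        elapsed = max(time.perf_counter() - start, 1e-9)  # guard against zero
        rates.append(len(tokens) / elapsed)
    return sum(rates) / len(rates)

def fake_generate(prompt):
    """Hypothetical stand-in for a model: sleeps briefly, then returns fake tokens."""
    time.sleep(0.01)
    return prompt.split() * 10

rate = measure_throughput(fake_generate, "def add(a, b): return a + b")
print(f"{rate:.0f} tokens/second")
```

Running the same harness against both models, with the same prompts and batch size, gives a fairer comparison than published figures measured on different hardware.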

Real-World Developer Applications

DeepSeek-Coder-V2 Ideal Use Cases:

- AI-powered coding assistants

- SaaS backend automation

- DevOps orchestration systems

- Multi-file code analysis engines

Llama 2 13B Ideal Use Cases:

- Offline AI applications

- Lightweight chatbots

- Research prototyping

- Privacy-sensitive deployments

Europe-Focused AI Deployment Context

In countries such as Germany, France, the United Kingdom, the Netherlands, and Switzerland, developers must consider:

- GDPR compliance

- Data sovereignty

- Local inference vs cloud API usage

Insight:

- DeepSeek → best for scalable enterprise AI systems

- Llama 2 → preferred for local, privacy-first deployments

Pros & Cons

DeepSeek-Coder-V2

Advantages:

- High semantic coding accuracy

- Large contextual memory window

- Efficient inference pipeline

- Production-grade scalability

Disadvantages:

- Usually consumed through an API, since self-hosting the full model needs multi-GPU hardware

- More complex system architecture

Llama 2 13B

Advantages:

- Easy local deployment

- Open-source flexibility

- Strong general NLP performance

Disadvantages:

- Weak coding specialization

- Limited context window

- Higher scaling inefficiency

Implementation & Usage Workflow

DeepSeek Integration Flow

- API access configuration

- Prompt structuring (code-oriented NLP input)

- Response parsing

- Integration into CI/CD pipelines
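The first three steps can be sketched as follows, assuming the provider exposes an OpenAI-style chat completions format. The endpoint URL and model name are hypothetical placeholders, and no network call is made: the example only builds the request body and parses a sample reply.

```python
import json
import re

API_URL = "https://example.invalid/v1/chat/completions"  # hypothetical endpoint
MODEL_NAME = "your-code-model"                           # hypothetical model id
FENCE = "`" * 3  # markdown code fence, built this way to keep the example readable

CODE_BLOCK = re.compile(FENCE + r"[\w+-]*\n(.*?)" + FENCE, re.DOTALL)

def build_request(task, source_code):
    """Build a chat-completions-style payload with the code wrapped in a fence."""
    return {
        "model": MODEL_NAME,
        "messages": [
            {"role": "system", "content": "Reply with one fenced code block."},
            {"role": "user", "content": f"{task}\n\n{FENCE}python\n{source_code}\n{FENCE}"},
        ],
        "temperature": 0.0,
    }

def extract_code(response_json):
    """Pull the first fenced code block out of a chat-completions-style reply."""
    content = response_json["choices"][0]["message"]["content"]
    match = CODE_BLOCK.search(content)
    return match.group(1).strip() if match else content.strip()

body = json.dumps(build_request("Fix the bug.", "def add(a, b): return a - b"))

# Sample reply in the assumed response shape (not real model output).
sample_reply = {
    "choices": [{"message": {"role": "assistant",
                             "content": f"Fixed:\n{FENCE}python\ndef add(a, b):\n    return a + b\n{FENCE}"}}]
}
fixed_code = extract_code(sample_reply)
print(fixed_code)
```

In a CI/CD job, `body` would be sent to the provider with an HTTP client and an API key, and the extracted code would go through tests or review before being applied.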

Llama 2 Deployment Flow

- Model download

- Local environment setup

- Runtime optimization (GPU/CPU tuning)

- Custom fine-tuning if required
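For the runtime optimization step, a useful first check is whether the weights fit on the target device at a given precision. The sketch below estimates weight memory from parameter count. The 1.2x overhead factor for activations and KV cache is a rough assumption, and the 24 GB device is a hypothetical example.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}

def weight_memory_gb(num_params, precision):
    """Memory needed for the weights alone, in gigabytes."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9

def fits(num_params, precision, device_memory_gb, overhead=1.2):
    """True if weights plus a rough overhead factor fit in device memory."""
    return weight_memory_gb(num_params, precision) * overhead <= device_memory_gb

LLAMA_2_13B_PARAMS = 13e9
DEVICE_GB = 24  # hypothetical single-GPU memory size

for precision in BYTES_PER_PARAM:
    size = weight_memory_gb(LLAMA_2_13B_PARAMS, precision)
    verdict = "fits" if fits(LLAMA_2_13B_PARAMS, precision, DEVICE_GB) else "does not fit"
    print(f"{precision}: {size:.1f} GB of weights, {verdict} in {DEVICE_GB} GB")
```

Under these assumptions, 16-bit weights exceed a 24 GB card, while 8-bit and 4-bit quantized weights fit. This is why quantization is usually the first tuning step for local deployment.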

FAQs

A: DeepSeek-Coder-V2 significantly outperforms Llama 2 in coding benchmarks and real-world development tasks.

A: DeepSeek-Coder-V2 is typically used through an API rather than run locally, unlike Llama 2.

A: DeepSeek is cheaper at scale, while Llama 2 is free to download but costly in infrastructure.

A: DeepSeek-Coder-V2 is among the top choices for coding-focused workflows.

A: Llama 2 13B remains useful, especially for local, offline, or lightweight applications.

Conclusion

The evolution of AI coding models reflects a broader transition in machine learning, from generalized transformer architectures toward task-specialized, efficiency-optimized neural systems.

DeepSeek-Coder-V2 represents this next generation, offering:

- Advanced semantic reasoning

- Long-context processing

- Economical inference scaling

- Developer-centric optimization

Meanwhile, Llama 2 13B continues to serve as a foundational open-source model for general NLP experimentation and lightweight applications.

Ultimately, your selection should align with system design priorities:

- Performance + scalability → DeepSeek-Coder-V2

- Simplicity + local control → Llama 2 13B

In the 2026 AI ecosystem, the competitive edge belongs to models that merge contextual intelligence with computational efficiency—and DeepSeek leads this transformation.