Introduction

Selecting the ideal AI coding model in 2026 is no longer about identifying a single “best” solution—it’s about aligning the model with your workflow, financial constraints, technical environment, and performance expectations.

This is exactly where the comparison between DeepSeek-Coder 1.3B and Llama 3.1 becomes both fascinating and strategically important.

On one side, you have a compact, efficient, and highly optimized coding-focused model that can operate locally—even on modest hardware configurations. On the other side, you’re dealing with a large-scale, enterprise-grade artificial intelligence system designed for advanced reasoning, extensive context handling, and sophisticated software engineering workflows.

One emphasizes speed, affordability, and accessibility.

The other prioritizes power, intelligence, and scalability.

Whether you’re an independent developer, a startup founder building an MVP, or part of a large engineering organization, this in-depth comparison will guide you toward the most suitable choice based on real-world applicability rather than theoretical benchmarks.

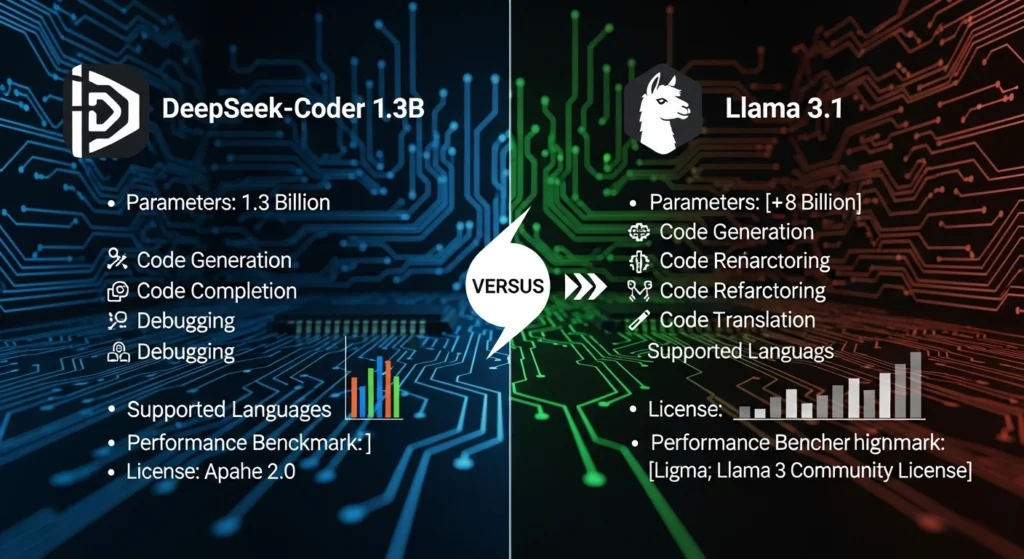

What Is DeepSeek-Coder 1.3B?

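DeepSeek-Coder 1.3B is the smallest model in DeepSeek’s open DeepSeek-Coder family of code models. Unlike general-purpose AI systems, it is trained from scratch on a code-heavy corpus (roughly 87% code and 13% natural language), which lets it deliver efficient, relevant outputs for software development workflows.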

Core Characteristics

- Trained on massive-scale code corpora (~2 trillion tokens)

- Designed for:

  - Code completion

  - Debugging assistance

  - Refactoring suggestions

  - Syntax correction

- Optimized for low-latency inference

- Compatible with local execution environments

Why Developers Prefer It

- Extremely fast response times

- Runs on standard laptops and desktops

- Minimal operational cost (no per-token fees when self-hosted)

- Openly available weights that are easy to adapt and fine-tune

Practical Scenario

Imagine a freelance developer working remotely. With DeepSeek-Coder, they can:

- Build web applications

- Identify and fix bugs quickly

- Generate boilerplate code

- Avoid recurring API expenses

For solo developers and small teams, that adds up to a capable coding assistant that runs locally with no per-request fees.

What Is Llama 3.1?

Llama 3.1 is Meta’s family of general-purpose large language models, released in July 2024 and designed for advanced reasoning and enterprise-level AI applications.

Unlike DeepSeek-Coder, it is not limited to coding: it is a general-purpose model that works across many domains.

Model Variants

- 8B parameters (lightweight version)

- 70B parameters (balanced performance)

- 405B parameters (high-end, ultra-capable model)

Key Capabilities

- Deep logical reasoning

- Multi-file and repository-level understanding

- Long-context processing (up to 128K tokens)

- AI agent orchestration

- Cross-domain knowledge integration

Ideal Use Cases

- Enterprise-grade software systems

- SaaS platforms with AI integration

- Research and development environments

- Complex automation workflows

It’s not just a coding assistant—it’s a complete AI infrastructure component.

DeepSeek-Coder 1.3B vs Llama 3.1: Quick Comparison

| Feature | DeepSeek-Coder 1.3B | Llama 3.1 |
|---|---|---|
| Model Type | Specialized coding model | General-purpose AI |
| Parameters | 1.3B | 8B – 405B |
| Hardware Needs | Low-end CPU/GPU | High-performance GPUs |
| Speed | Very high | Moderate to low |
| Cost | Extremely low | Significantly higher |
| Accuracy | Strong (coding-focused) | Very high overall |
| Context Handling | Limited (16K tokens) | Advanced (up to 128K tokens) |
| Best Use | Local dev, lightweight tasks | Enterprise & complex systems |
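The "Context Handling" row is easier to reason about in tokens. DeepSeek-Coder models were trained with a 16K-token window, while Llama 3.1 supports up to 128K tokens. The minimal Python sketch below estimates whether a set of source files fits in each window in a single prompt. It assumes about four characters per token, a rough rule of thumb; real counts depend on the tokenizer, so treat the result as an estimate. The dictionary keys and helper names are hypothetical labels chosen for illustration.

```python
import math

# Approximate context windows in tokens: 16K for DeepSeek-Coder, 128K for Llama 3.1.
# The keys are illustrative labels, not official model identifiers.
CONTEXT_WINDOW = {
    "deepseek-coder-1.3b": 16_384,
    "llama-3.1": 131_072,
}

# Rough heuristic (assumption): about 4 characters per token.
# Real tokenizers vary with language, naming style, and whitespace.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Estimate the token count of a text using the characters-per-token heuristic."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(files: list[str], model: str, reserve_for_output: int = 1_024) -> bool:
    """Return True if the files, plus room for the model's answer, fit in one prompt."""
    prompt_tokens = sum(estimate_tokens(text) for text in files)
    return prompt_tokens + reserve_for_output <= CONTEXT_WINDOW[model]
```

For example, 100 files of 2,000 characters each come to roughly 50,000 tokens: too much for DeepSeek-Coder's window in one prompt, but comfortably within Llama 3.1's.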

Benchmark Comparison

Many comparison articles focus excessively on raw metrics without contextual interpretation.

Here’s a more realistic breakdown:

Llama 3.1

- The 405B variant scores roughly 89% on HumanEval (pass@1, as reported by Meta); the 8B and 70B variants score lower

- Strong general reasoning ability

- Better suited for complex algorithmic challenges

DeepSeek-Coder

- Optimized specifically for:

  - Code generation workflows

  - Debugging efficiency

- Lower benchmark scores than the larger Llama 3.1 variants, offset by much faster and cheaper inference
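HumanEval percentages like those above are usually pass@1 scores. HumanEval contains 164 hand-written Python problems, and pass@1 estimates the share of problems for which a single generated answer passes that problem's unit tests. The sketch below implements the unbiased pass@k estimator introduced alongside HumanEval (Chen et al., 2021). The sample data in the usage note is made up for illustration and is not a result for either model.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem.

    n: samples generated, c: samples that passed the tests, k: attempts allowed.
    """
    if n - c < k:
        # Every possible set of k samples contains at least one passing sample.
        return 1.0
    # 1 minus the probability that k randomly chosen samples all fail.
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_score(results: list[tuple[int, int]], k: int = 1) -> float:
    """Average pass@k over problems, given (n, c) per problem, as a percentage."""
    return 100.0 * sum(pass_at_k(n, c, k) for n, c in results) / len(results)
```

With three toy problems where 10, 5, and 0 of 10 samples pass, `benchmark_score([(10, 10), (10, 5), (10, 0)])` gives 50.0. For k = 1 the estimator reduces to c / n per problem, so a reported HumanEval score is simply the average per-problem success rate.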

Key Insight

- Llama = more intelligent and versatile

- DeepSeek = more efficient and practical

For many everyday coding tasks, speed and cost matter more than a higher benchmark score.

Pricing Comparison

| Model | Cost per 1M Tokens |
|---|---|
| DeepSeek-Coder | ~$0.42 |
| Llama 3.1 | ~$15–$19 |

At these rates, DeepSeek-Coder costs roughly 97–98% less per token.
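The percentage follows directly from the per-token prices in the table. This Python sketch reproduces the arithmetic and projects a monthly bill. The prices are the approximate figures quoted above, and the 50M-tokens-per-month workload is a hypothetical example.

```python
# Approximate prices from the table above, in USD per 1M tokens.
DEEPSEEK_PRICE = 0.42
LLAMA_PRICE_LOW, LLAMA_PRICE_HIGH = 15.0, 19.0


def monthly_cost(tokens_per_month: int, price_per_million: float) -> float:
    """Cost in USD for a given monthly token volume."""
    return tokens_per_month / 1_000_000 * price_per_million


def reduction_percent(cheap_price: float, expensive_price: float) -> float:
    """How much cheaper the first price is than the second, as a percentage."""
    return (1.0 - cheap_price / expensive_price) * 100.0


if __name__ == "__main__":
    tokens = 50_000_000  # hypothetical workload: 50M tokens per month
    for label, price in [
        ("DeepSeek-Coder", DEEPSEEK_PRICE),
        ("Llama 3.1 (low)", LLAMA_PRICE_LOW),
        ("Llama 3.1 (high)", LLAMA_PRICE_HIGH),
    ]:
        print(f"{label}: ${monthly_cost(tokens, price):,.2f}")
```

For 50M tokens a month, `monthly_cost` gives about $21 for DeepSeek-Coder versus $750–$950 for Llama 3.1, and `reduction_percent` gives roughly 97.2% against the $15 price and 97.8% against the $19 price.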

Implications

- Startups significantly reduce operational expenses

- Independent developers can work at negligible cost

- Scaling AI-powered applications becomes financially viable

This cost gap is a major reason DeepSeek is a popular choice for affordable AI coding tools.

Hardware Requirements

DeepSeek-Coder 1.3B

Compatible with:

- Standard CPUs

- Systems with ~8GB RAM

- Entry-level GPUs

Llama 3.1

Requires:

- High-VRAM GPUs (24GB–80GB+)

- Dedicated cloud infrastructure

- Advanced deployment pipelines

Summary

- DeepSeek = widely accessible

- Llama = resource-intensive investment
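Most of these hardware requirements follow from a simple rule of thumb: model weights need about (parameter count × bytes per parameter) of memory. This Python sketch applies it to each model size listed in this article. It counts weights only and ignores the KV cache, activations, and framework overhead, so real usage is higher, especially with long contexts. The byte sizes (2 for fp16, 1 for int8, 0.5 for 4-bit quantization) are standard, but which precisions you can actually use depends on your runtime.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}

# Parameter counts in billions, as listed in this article.
MODELS = {
    "DeepSeek-Coder 1.3B": 1.3,
    "Llama 3.1 8B": 8,
    "Llama 3.1 70B": 70,
    "Llama 3.1 405B": 405,
}


def weight_memory_gb(params_billions: float, precision: str = "fp16") -> float:
    """Memory for the weights alone, in GB (1 GB = 10**9 bytes).

    Excludes the KV cache, activations, and runtime overhead, which grow
    with batch size and context length.
    """
    return params_billions * BYTES_PER_PARAM[precision]


def memory_table(precision: str = "fp16") -> dict[str, float]:
    """Weight-memory estimate for every model in MODELS."""
    return {name: weight_memory_gb(size, precision) for name, size in MODELS.items()}


if __name__ == "__main__":
    for precision in ("fp16", "int4"):
        for name, gb in memory_table(precision).items():
            print(f"{name} @ {precision}: ~{gb:g} GB")
```

At fp16 this gives roughly 2.6 GB for DeepSeek-Coder 1.3B, 16 GB for Llama 3.1 8B, 140 GB for 70B, and 810 GB for 405B. That is why the 1.3B model fits comfortably on an ~8GB machine, the 8B model fits on a single 24GB GPU, and the larger Llama variants need 80GB-class GPUs, several GPUs, or aggressive quantization.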

Real Developer Performance

DeepSeek Excels When:

- Rapid code completion is required

- Working in local development environments

- Budget constraints are significant

- Lightweight IDE integrations are preferred

Llama Excels When:

- Managing large-scale codebases

- Performing architectural planning

- Building AI-driven applications

- Requiring deep contextual reasoning

Use Case Breakdown

Best for Beginners

DeepSeek-Coder 1.3B

- Easy to configure

- No expensive infrastructure

- Immediate productivity gains

Best for Startups

DeepSeek-Coder

- Cost-efficient scaling

- Ideal for MVP development

- Rapid iteration cycles

Best for Enterprises

Llama 3.1

- Handles complex systems

- Supports advanced AI workflows

- Enterprise-grade reliability

Best for Research

Llama 3.1

- Superior analytical capabilities

- Multi-domain problem solving

Pros & Cons

DeepSeek-Coder 1.3B

Advantages:

- Extremely affordable

- Local deployment capability

- Fast execution

- Beginner-friendly

Limitations:

- Restricted reasoning depth

- Smaller context window

- Less suitable for large-scale systems

Llama 3.1

Advantages:

- High accuracy and intelligence

- Advanced reasoning capabilities

- Handles complex software ecosystems

- Multi-functional AI system

Limitations:

- High cost

- Demands powerful hardware

- Slower inference speed

Specialized vs General AI

This is the distinction most comparisons overlook.

DeepSeek-Coder

- Specialist model

- Designed primarily for programming

Llama 3.1

- Generalist model

- Handles diverse domains

Fundamental Truth

- Specialized models = focused, efficient, optimized

- General models = powerful, flexible, but resource-heavy

Understanding this distinction is crucial for decision-making.

How to Use These AI Tools

Using DeepSeek-Coder

- Download the open weights from a model hub such as Hugging Face, or pull them through a local runner such as Ollama

- Integrate with development environments like VS Code

- Use for:

  - Autocomplete

  - Debugging

  - Refactoring
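As one concrete local setup (an assumption, not the only option), the sketch below talks to Ollama, a local model runner that serves a REST API on `http://localhost:11434` by default. It assumes Ollama is installed and a DeepSeek-Coder 1.3B model has been pulled; the tag `deepseek-coder:1.3b` is an assumption, so confirm it with `ollama list`. Only the Python standard library is used.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "deepseek-coder:1.3b"  # assumed tag; confirm with `ollama list`


def build_completion_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(prompt: str, timeout: float = 60.0) -> str:
    """Send the request to a running Ollama server and return the generated text."""
    request = build_completion_request(prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    # With "stream": False, Ollama returns one JSON object whose "response" field holds the text.
    return body["response"]
```

With the server running, `complete("def is_prime(n):")` returns the model's continuation as a string. Building the request separately from sending it keeps the payload easy to inspect and test without a running server.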

Using Llama 3.1

- Access through hosted APIs and cloud services, or self-host the open weights

- Integrate into applications

- Use for:

  - AI agents

  - SaaS platforms

  - Complex automation workflows
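Many Llama 3.1 hosts, and self-hosted servers such as vLLM, expose an OpenAI-compatible chat-completions endpoint. Assuming such an endpoint, this standard-library sketch builds and sends a chat request. The base URL (vLLM's default local address), the model name, and the API-key handling are placeholders to replace with your provider's values.

```python
import json
import urllib.request
from typing import Optional

# Placeholders (assumptions): point these at your provider or self-hosted server.
BASE_URL = "http://localhost:8000/v1"  # vLLM's default OpenAI-compatible address
MODEL_NAME = "meta-llama/Llama-3.1-8B-Instruct"


def build_chat_request(
    user_message: str,
    system_message: str = "You are a senior software engineer.",
    api_key: Optional[str] = None,
    max_tokens: int = 512,
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions request."""
    payload = {
        "model": MODEL_NAME,
        "messages": [
            {"role": "system", "content": system_message},
            {"role": "user", "content": user_message},
        ],
        "max_tokens": max_tokens,
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def chat(user_message: str, api_key: Optional[str] = None, timeout: float = 120.0) -> str:
    """Send the request and return the assistant's reply text."""
    request = build_chat_request(user_message, api_key=api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]
```

`chat("Suggest a module layout for a billing service.")` returns the assistant's reply text. The system message is where an agent or SaaS feature would pin conventions such as language version or style rules.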

Content Optimization Tips

If you’re creating AI-related content:

- Focus on problem-solving rather than features

- Use clear, benefit-driven language

- Highlight:

  - Speed

  - Cost efficiency

  - Real-world applications

Practical clarity consistently outperforms hype-driven messaging.

Market Relevance

In regions with strict regulations and cost sensitivity:

- Local deployment enhances data privacy compliance

- Affordable models enable wider adoption

Meanwhile, large organizations prioritize:

- Scalability

- Performance

- Advanced AI capabilities

FAQs

Q: Which is better for coding, DeepSeek-Coder 1.3B or Llama 3.1?

A: DeepSeek-Coder is better for fast, affordable coding tasks, while Llama 3.1 excels at complex logic and large-scale development.

Q: Can DeepSeek-Coder 1.3B run on a regular laptop?

A: Yes. Its small size lets it run on CPUs and modest hardware, which makes it well suited to personal use.

Q: Why is Llama 3.1 more expensive to run?

A: Its models are much larger and need powerful infrastructure, which raises compute costs.

Q: Is DeepSeek-Coder 1.3B good enough for real projects?

A: Yes, especially for small to medium projects, debugging, and rapid prototyping.

Q: Which model should startups choose?

A: Startups should generally choose DeepSeek-Coder for cost efficiency unless they need Llama 3.1’s advanced capabilities.

Conclusion

DeepSeek-Coder 1.3B and Llama 3.1 are not direct competitors; they are designed with fundamentally different objectives.

DeepSeek is ideal for:

- Speed

- Cost efficiency

- Local development

Llama 3.1 is suited for:

- Advanced reasoning

- Enterprise-grade systems

- Complex applications

In 2026, the most effective developers are not selecting the most powerful model—they are selecting the most appropriate tool for their specific requirements.