Introduction

Choosing between DeepSeek LLM and Llama 4 Scout in 2026 is more than a technical preference. It is a strategic decision that affects product scalability, operating costs, engineering efficiency, and your long-term capacity to innovate.

Whether you are a startup founder in Berlin, a machine learning engineer in London, or an enterprise architect in Paris, the large language model (LLM) you choose shapes how your systems perform under load, how capable your applications are, and how sustainably your infrastructure scales.

Both DeepSeek LLM and Llama 4 Scout represent a new generation of open-weight artificial intelligence models, yet they are designed with fundamentally different priorities and architectural philosophies.

DeepSeek emphasizes deep reasoning and strong coding performance, while Llama 4 Scout focuses on extreme scalability, very long context handling, and cost-efficient deployment.

This guide is not just another superficial comparison filled with raw numbers and vague claims. Instead, it delivers:

- Real-world benchmark interpretation (not just statistics)

- Cost versus performance analysis

- Developer-centric evaluation

- Practical use-case mapping

- A clear decision-making framework

By the end of this guide, you will not only understand the differences—you will confidently know which model aligns with your specific goals and why.

Quick Comparison

| Feature | DeepSeek LLM | Llama 4 Scout |
| --- | --- | --- |
| Performance | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ |
| Context Window | 128K–164K tokens | 🔥 Up to 10M tokens |
| Pricing | Medium–High | 💰 Low |
| Coding Ability | 🔥 Excellent | Moderate |
| Multimodal | ❌ No | ✅ Yes |
| Best For | Reasoning, coding | Long-context, scalable apps |

Quick Verdict

- Choose DeepSeek LLM → for intelligence, precision, and coding superiority

- Choose Llama 4 Scout → for scalability, affordability, and large-context processing

What is DeepSeek LLM?

DeepSeek LLM is a high-performance, open-weight large language model engineered to excel at advanced reasoning, algorithmic problem-solving, and software development tasks. The specifications below correspond to DeepSeek's V3-generation models.

Key Highlights

- Mixture-of-Experts (MoE) architecture

- Approximately 685 billion total parameters (671 billion in the main model, per the DeepSeek-V3 model card)

- Around 37 billion active parameters per inference

- Exceptional benchmark performance across reasoning tasks

Why It Matters

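Unlike dense models, which use all of their parameters for every token, DeepSeek uses a Mixture-of-Experts design: a learned router sends each token to a small subset of specialized expert sub-networks, so only about 37 billion of its parameters are active at a time.

This design brings several practical benefits:

- Lower compute per token than a dense model with the same total parameter count

- Faster inference for a given hardware budget

- Large total capacity, since knowledge is spread across many experts

- No wasted computation on experts that are irrelevant to the current token

Note that all experts must still be loaded into memory, so hardware requirements follow the total parameter count rather than the active one.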

In simpler terms, DeepSeek behaves like a team of specialized experts, where only the most relevant experts contribute to solving a problem.
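The minimal Python sketch below makes the routing idea concrete using generic top-k expert routing. It is not DeepSeek's actual router, which uses its own gating function, shared experts, and load balancing. The toy experts, the hand-supplied `gate_scores`, and the names `moe_forward` and `Expert` are all hypothetical. They exist only to show that just `k` experts run for each token.

```python
import math
from typing import Callable, List, Sequence, Tuple

# An "expert" here is any function mapping a vector to a vector of the same size.
# These are toy stand-ins, not DeepSeek's real feed-forward expert layers.
Expert = Callable[[List[float]], List[float]]


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x: List[float], gate_scores: Sequence[float],
                experts: Sequence[Expert], k: int = 2) -> Tuple[List[float], List[int]]:
    """Route one token to its top-k experts and mix their outputs.

    In a real model, gate_scores come from a learned router applied to x;
    here they are passed in directly to keep the sketch small.
    """
    # Indices of the k highest-scoring experts.
    top = sorted(range(len(experts)), key=lambda i: gate_scores[i], reverse=True)[:k]
    # Normalize the gate weights over the selected experts only.
    weights = softmax([gate_scores[i] for i in top])
    out = [0.0] * len(x)
    for w, i in zip(weights, top):
        y = experts[i](x)  # only the selected experts are evaluated
        out = [o + w * yi for o, yi in zip(out, y)]
    return out, top
```

The unselected experts are never called, and that is where the compute savings come from. Scaling the same idea to many experts with a small `k` keeps the active parameters a small fraction of the total.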

Real-World Example

A fintech startup in Amsterdam can leverage DeepSeek LLM to:

- Detect fraudulent transactions with higher precision

- Generate financial algorithms

- Automate complex compliance workflows

This makes DeepSeek particularly valuable in high-stakes, accuracy-driven environments.

What is Llama 4 Scout?

Llama 4 Scout is part of Meta's Llama 4 model family and is designed for mass-scale deployment, long-context comprehension, and enterprise-grade applications.

Key Features

- Context window up to 10 million tokens

- Multimodal capabilities (text + image processing)

- Optimized for large-scale infrastructure

- Efficient deployment across distributed systems

Why It Matters

Llama 4 Scout is built to handle extremely large volumes of information in a single prompt, which reduces or removes the need to chunk or segment inputs.

This allows it to:

- Process entire books or research papers

- Analyze full legal documents

- Maintain long conversational memory
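Before relying on single-prompt processing, it helps to estimate whether a document actually fits. The sketch below assumes roughly 4 characters per token for English text and uses the approximate window sizes quoted in this guide. `estimate_tokens` and `fits_in_context` are hypothetical helpers, and a real check should use the model's own tokenizer.

```python
import math

# Rough rule of thumb for English prose (an assumption); real counts
# depend on each model's tokenizer.
CHARS_PER_TOKEN = 4.0

# Approximate context windows as quoted in this guide.
CONTEXT_WINDOWS = {
    "deepseek": 128_000,
    "llama-4-scout": 10_000_000,
}


def estimate_tokens(text: str) -> int:
    """Very rough token estimate from character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(text: str, model: str, reserve_for_output: int = 4_000) -> bool:
    """True if the text, plus room for the reply, fits in one prompt."""
    budget = CONTEXT_WINDOWS[model] - reserve_for_output
    return estimate_tokens(text) <= budget
```

For example, a 2-million-character case file (about 500K estimated tokens) would fit in Scout's window but not in a ~128K window.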

Real-World Example

A legal firm in Germany can use Llama 4 Scout to:

- Analyze complete case files

- Review contracts in a single query

- Extract insights from extensive legal datasets

This can significantly improve productivity and operational efficiency.

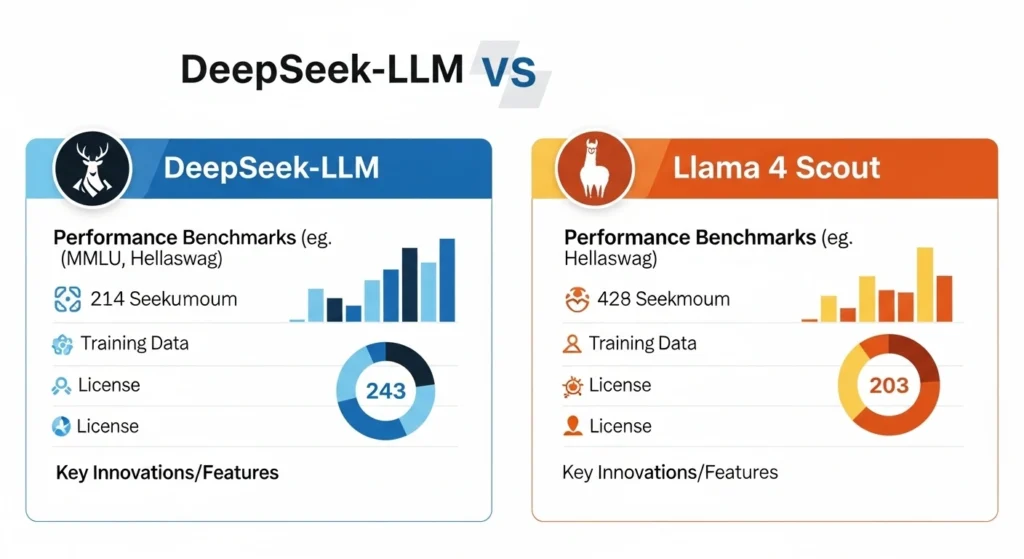

DeepSeek vs Llama 4 Scout: Key Differences

Model Size & Architecture

| Model | Architecture | Total Parameters | Active per Token |
| --- | --- | --- | --- |
| DeepSeek | Mixture-of-Experts | ~685B | ~37B |
| Llama 4 Scout | Mixture-of-Experts (16 experts) | ~109B | ~17B |

Insight

- DeepSeek = stronger results on demanding reasoning and coding tasks

- Llama 4 Scout = lower memory footprint and serving cost at scale

DeepSeek prioritizes precision and depth, while Llama prioritizes breadth and scalability.
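Parameter counts translate into two different costs. Active parameters drive compute per token, while total parameters drive memory, because every expert must be loaded. The short calculation below uses the approximate figures of ~685B total / ~37B active for DeepSeek and ~109B total / ~17B active for Llama 4 Scout, and assumes 16-bit weights. It ignores the KV cache and runtime overhead.

```python
# Approximate figures in billions of parameters, as cited in this guide.
MODELS = {
    "DeepSeek": {"total_b": 685, "active_b": 37},
    "Llama 4 Scout": {"total_b": 109, "active_b": 17},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the weights that participate in computing each token."""
    return active_b / total_b


def weight_memory_gb(total_b: float, bytes_per_param: float) -> float:
    """Memory for the weights alone: every expert is loaded, active or not.

    Ignores KV cache, activations, and runtime overhead.
    """
    return total_b * bytes_per_param  # 1e9 params * bytes/param = GB (decimal)


for name, p in MODELS.items():
    frac = active_fraction(p["total_b"], p["active_b"])
    mem = weight_memory_gb(p["total_b"], 2.0)  # assumed 16-bit weights
    print(f"{name}: {frac:.1%} active per token, ~{mem:,.0f} GB of 16-bit weights")
```

The script reports about 5.4% active for DeepSeek versus 15.6% for Scout, and roughly 1,370 GB versus 218 GB of 16-bit weights. This is why Scout is much easier to host even though it activates a larger share of its weights.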

Performance & Benchmarks

In published benchmark results, DeepSeek generally outperforms Llama 4 Scout on:

- MMLU (general knowledge understanding)

- Coding benchmarks

- Logical reasoning tests

- Problem-solving accuracy

What This Means for You

For developers, DeepSeek's stronger reasoning typically translates into:

- More accurate debugging

- Better AI copilots

- Reliable outputs in complex workflows

- Improved code generation

On the other hand, Llama 4 Scout:

- Delivers stable performance

- Maintains consistency across large-scale tasks

- Trades peak intelligence for scalability

Context Window Comparison

| Model | Context Length |
| --- | --- |
| DeepSeek | ~128K tokens |
| Llama 4 Scout | 🚀 Up to 10M tokens |

Real Impact

Llama 4 Scout enables:

- Full-book analysis

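- Analysis of large document collections or codebases in a single prompt

- Long-duration conversational memory

For workloads that would otherwise need chunking or retrieval pipelines, this is a major change in how information reaches the model, not just an incremental improvement.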
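When a document exceeds a ~128K window, the usual workaround is to split it into overlapping chunks and process them separately. The sketch below is a hypothetical character-based splitter. It approximates token budgets with a chars-per-token ratio, which is an assumption; production code should split on real token boundaries.

```python
from typing import List


def chunk_text(text: str, max_tokens: int, overlap_tokens: int = 0,
               chars_per_token: float = 4.0) -> List[str]:
    """Split text into overlapping character windows sized to a token budget.

    chars_per_token is a rough heuristic, not a real tokenizer.
    """
    if overlap_tokens >= max_tokens:
        raise ValueError("overlap must be smaller than the chunk size")
    size = int(max_tokens * chars_per_token)
    step = int((max_tokens - overlap_tokens) * chars_per_token)
    if step <= 0:
        raise ValueError("chunk size too small for the given chars_per_token")
    chunks: List[str] = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + size])
        if start + size >= len(text):  # last chunk reached the end
            break
        start += step  # advance, keeping `overlap_tokens` of shared context
    return chunks
```

For example, `chunk_text(book, max_tokens=120_000, overlap_tokens=2_000)` leaves headroom in a 128K window for instructions and the reply. The overlap keeps sentences that straddle a boundary visible in both chunks.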

Pricing & Cost Efficiency

| Model | Relative Cost per Token |
| --- | --- |
| DeepSeek | Higher |
| Llama 4 Scout | Roughly 3–4× lower (varies by provider) |

ROI Breakdown

- Startups → Llama offers better financial efficiency

- High-performance applications → DeepSeek delivers superior value

Choosing between them depends on whether you prioritize cost optimization or output quality.
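The sketch below projects monthly spend for a steady workload, showing how a per-token price gap plays out at volume. The prices are hypothetical placeholders chosen to illustrate a 4× gap. They are not quotes for either model, so substitute your provider's current rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Prices in USD per 1M tokens. The instances below are placeholders."""
    input_per_million: float
    output_per_million: float


def monthly_cost(p: Pricing, requests_per_day: int, input_tokens: int,
                 output_tokens: int, days: int = 30) -> float:
    """Estimated spend for a steady workload over `days` days."""
    total_in = requests_per_day * input_tokens * days
    total_out = requests_per_day * output_tokens * days
    return (total_in * p.input_per_million + total_out * p.output_per_million) / 1_000_000


# Hypothetical prices chosen only to illustrate a 4x gap; check your provider.
PREMIUM = Pricing(input_per_million=0.60, output_per_million=2.40)
BUDGET = Pricing(input_per_million=0.15, output_per_million=0.60)
```

Consider 1,000 requests per day, each with 2,000 input and 500 output tokens. At these placeholder rates, that comes to about $72 versus $18 per month. Input and output tokens are priced separately, so the real ratio depends on your input/output mix.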

Multimodal Capabilities

| Feature | DeepSeek | Llama 4 Scout |
| --- | --- | --- |
| Image Input | ❌ | ✅ |
| Vision Tasks | ❌ | ✅ |

Llama 4 Scout is ideal for:

- Visual AI applications

- Content creation platforms

- Image analysis tools

DeepSeek, in contrast, remains text-focused and logic-driven.
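Many hosting providers expose Llama 4 Scout through an OpenAI-compatible chat completions API, where an image is sent as an `image_url` content part. Assuming such an endpoint, the sketch below only builds the request body and makes no network call. The model identifier `llama-4-scout` is a placeholder, since names differ between providers.

```python
import base64


def build_vision_request(model: str, prompt: str, image_bytes: bytes,
                         mime: str = "image/png") -> dict:
    """Build an OpenAI-style chat request with one text part and one image part.

    Assumes the provider accepts base64 data URLs in `image_url`.
    """
    data_url = f"data:{mime};base64," + base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,  # placeholder; use your provider's model name
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text", "text": prompt},
                {"type": "image_url", "image_url": {"url": data_url}},
            ],
        }],
    }
```

The returned dictionary can be serialized with `json.dumps` and posted to the provider's chat completions endpoint using your HTTP client of choice.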

Real-World Use Cases Comparison

Coding & Development

Winner: DeepSeek

- Superior accuracy

- Advanced reasoning

- Strong debugging capabilities

Long Context Tasks

Winner: Llama 4 Scout

- Handles millions of tokens

- Ideal for research and legal domains

Enterprise Applications

Winner: Llama 4 Scout

- Lower operational costs

- Scalable infrastructure

- Multimodal flexibility

Research & Analytical Tasks

Winner: DeepSeek

- Better benchmark scores

- Strong analytical capabilities

- Reliable logical reasoning

Pros and Cons

DeepSeek LLM

Pros

- Outstanding performance

- Exceptional coding ability

- Advanced reasoning power

Cons

- Expensive

- No multimodal support

- Limited context compared to Llama

Llama 4 Scout

Pros

- Massive context window

- Cost-effective deployment

- Multimodal functionality

Cons

- Lower benchmark performance

- Less accurate in complex reasoning

How to Use These AI Tools

Step-by-Step Developer Workflow

- Define your use case (coding, analytics, automation)

- Select the appropriate model

- Integrate via API or local deployment

- Optimize prompts for better outputs

- Continuously monitor performance and cost
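For the monitoring step, a thin wrapper can record latency and token usage per model. This sketch assumes responses carry an OpenAI-style `usage` object with `prompt_tokens` and `completion_tokens`, as OpenAI-compatible endpoints typically return. `UsageMonitor` and the zero-argument call-function shape are hypothetical.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class CallRecord:
    model: str
    latency_s: float
    prompt_tokens: int
    completion_tokens: int


@dataclass
class UsageMonitor:
    records: List[CallRecord] = field(default_factory=list)

    def track(self, model: str, call: Callable[[], Dict[str, Any]]) -> Dict[str, Any]:
        """Run one API call, timing it and logging its token usage."""
        start = time.perf_counter()
        response = call()
        latency = time.perf_counter() - start
        usage = response.get("usage", {})  # assumed OpenAI-style usage field
        self.records.append(CallRecord(model, latency,
                                       usage.get("prompt_tokens", 0),
                                       usage.get("completion_tokens", 0)))
        return response

    def total_tokens(self, model: str) -> int:
        return sum(r.prompt_tokens + r.completion_tokens
                   for r in self.records if r.model == model)

    def mean_latency(self, model: str) -> float:
        lat = [r.latency_s for r in self.records if r.model == model]
        return sum(lat) / len(lat) if lat else 0.0
```

Multiplying `total_tokens` by your provider's per-token rates gives a running cost figure to compare across models.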

Pro Tip

Use a hybrid approach:

- DeepSeek for backend tasks that need deep reasoning or code generation

- Llama 4 Scout for high-volume, user-facing requests and long or image-based inputs

This balances output quality against cost.
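A simple rule-based router is one way to implement this hybrid approach. The task labels, model identifiers, and the 128K threshold below are hypothetical placeholders that mirror this guide's comparison. A production router would likely add cost budgets and fallbacks.

```python
from dataclasses import dataclass

# Hypothetical identifiers; use the names your provider or deployment exposes.
DEEPSEEK = "deepseek"
LLAMA_SCOUT = "llama-4-scout"
DEEPSEEK_CONTEXT = 128_000  # approximate window, in tokens

REASONING_TASKS = {"coding", "debugging", "reasoning", "analysis"}


@dataclass
class Request:
    task: str              # e.g. "coding", "summarize", "chat"
    estimated_tokens: int  # prompt size estimate
    has_image: bool = False


def route(req: Request) -> str:
    """Pick a model for one request using the trade-offs in this guide."""
    if req.has_image:
        return LLAMA_SCOUT  # only Scout accepts image input here
    if req.estimated_tokens > DEEPSEEK_CONTEXT:
        return LLAMA_SCOUT  # too large for DeepSeek's window
    if req.task in REASONING_TASKS:
        return DEEPSEEK     # reasoning-heavy work goes to the stronger model
    return LLAMA_SCOUT      # default to the cheaper model
```

The order of the checks matters. Hard constraints (image input, context size) come first, and the quality-versus-cost preference applies only when both models can technically handle the request.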

Tips to Choose the Right Model

- Avoid chasing benchmarks blindly

- Align model choice with workload

- Evaluate long-term operational costs

- Test both models before scaling
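Testing both models can start with a small side-by-side harness that runs the same prompts through each model and scores the outputs. In this sketch, the model callables and the `is_correct` checker are hypothetical stand-ins for your own API clients and grading logic.

```python
from typing import Callable, Dict, List, Tuple

# A model is anything that maps a prompt to a text response (e.g. an API wrapper).
ModelFn = Callable[[str], str]


def compare_models(models: Dict[str, ModelFn],
                   cases: List[Tuple[str, str]],
                   is_correct: Callable[[str, str], bool]) -> Dict[str, float]:
    """Return the fraction of (prompt, expected) cases each model gets right."""
    scores: Dict[str, float] = {}
    for name, fn in models.items():
        passed = sum(1 for prompt, expected in cases
                     if is_correct(fn(prompt), expected))
        scores[name] = passed / len(cases) if cases else 0.0
    return scores
```

Drawing the test cases from your real workload, rather than public benchmarks, follows the advice above to align model choice with your workload.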

Europe Market Relevance

AI adoption across Europe is accelerating rapidly, driven by:

- Regulatory frameworks like GDPR

- Increasing enterprise demand

- Expanding startup ecosystems

Key Insights

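- GDPR compliance favors local (self-hosted) deployment. Both models ship open weights, but Llama 4's license reportedly restricts EU-based use of its multimodal models, which tilts this point toward DeepSeek; review each license before deploying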

- Enterprise scalability boosts Llama adoption

- Startups prefer cost-efficient solutions

Countries like Germany, France, and the UK are leading this transformation.

Which One Should You Choose?

DeepSeek if:

- You require high accuracy

- You are building coding tools

- You need strong reasoning capabilities

Llama 4 Scout if:

- You process massive datasets

- You need cost efficiency

- You build scalable AI applications

FAQs

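Q: Which is better overall, DeepSeek or Llama 4 Scout?

A: DeepSeek is better for performance, coding, and reasoning. However, Llama 4 Scout excels in scalability and long-context tasks.

Q: Which model is cheaper?

A: Llama 4 Scout is significantly cheaper per token, making it a strong fit for startups and large-scale deployments.

Q: Which model is better for coding?

A: DeepSeek is generally the stronger choice for coding tasks, reflecting its higher scores on coding and reasoning benchmarks.

Q: How large is Llama 4 Scout's context window?

A: Llama 4 Scout supports up to 10 million tokens, making it well suited to very large datasets and documents.

Q: Which model should startups choose?

A: Startups should generally prefer Llama 4 Scout for cost efficiency, unless output quality on complex tasks is critical.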

Conclusion

The comparison between DeepSeek LLM and Llama 4 Scout is not about identifying a single winner—it is about selecting the right tool for the right purpose.

If your priority is precision, reasoning, and coding excellence, DeepSeek is the stronger choice. However, if your focus is scalability, affordability, and large-scale data processing, Llama 4 Scout is the smarter option.

For most modern businesses, the optimal strategy is not to choose one but to use both strategically.

- Use DeepSeek where intelligence is critical

- Use Llama where scalability is essential

This hybrid approach balances efficiency, performance, and long-term cost.