Grok Ani Nude (2026): Truth, NSFW Reality, Safety & Legal Guide — What’s Real vs Viral Bait?

Grok Ani Nude: what is it, and is it real or just viral bait? This guide separates fact from rumor, outlines the safety and legal risks, and explains how to spot fake NSFW content before it spreads. What looks like a simple search trend often hides scams, manipulated media, and privacy traps, so it helps to know how to judge such claims quickly.

The search phrase “Grok Ani Nude” has become a high-interest keyword in 2026 because it sits at the intersection of several fast-growing digital trends: AI companions, anime-inspired virtual personalities, adult-content curiosity, and viral misinformation. People are not just searching for entertainment. They are trying to understand what this technology actually is, what it can do, what it cannot do, and whether the claims circulating online are true or exaggerated.

What Is Grok Ani Nude and Why Is Everyone Searching for It?

A lot of pages ranking for this term rely on sensational language, vague promises, or misleading assumptions. Some publish content designed only to attract clicks. Others confuse different AI platforms, blur the difference between fantasy and product reality, or suggest capabilities that are not consistently available across systems. For users, that creates confusion. For publishers, it creates a dangerous gap between intent and truth.

This guide takes a different route. It explains the subject in plain language, using terms that are easy to follow while still staying precise. You will see what Grok Ani is supposed to be, why people connect it with NSFW searches, what “nude” claims usually mean, how AI companion systems operate, and what the privacy, safety, ethical, and legal boundaries look like. The goal is not hype. The goal is clarity.

Is Grok Ani Nude Real or Fake Content?

What is Grok Ani really?

Is “nude” content real or mostly myth?

How do AI companion systems produce responses?

Is this kind of usage safe?

Do legal concerns matter in Europe?

What are the ethical problems behind AI companionship?

What are safer, more responsible alternatives?

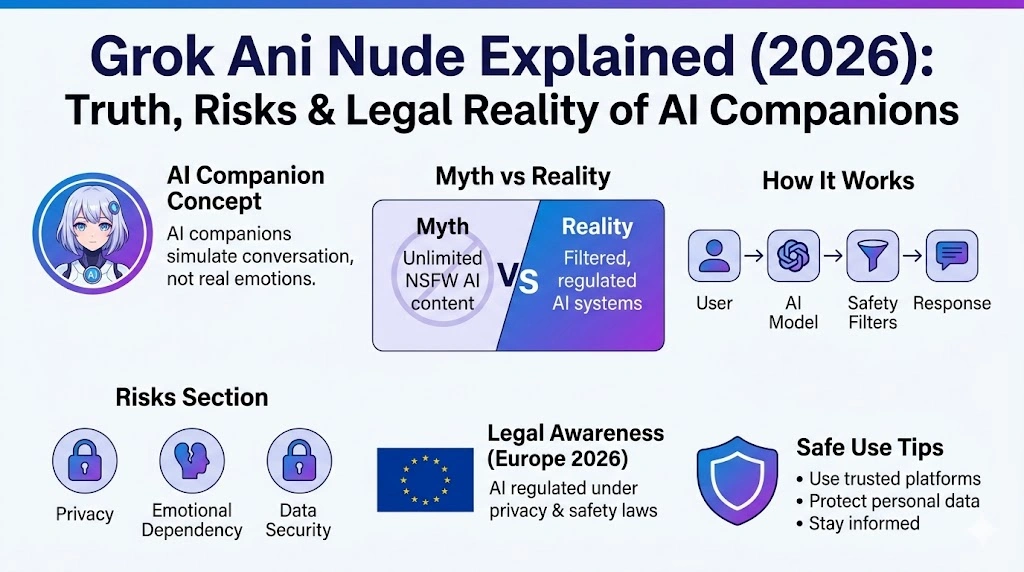

Before going deeper, one important point should be made very explicit: AI companions are not people. They can simulate tone, emotion, personality, and conversation patterns, but they do not experience desire, affection, consent, or consciousness in the human sense. That distinction matters for everything from user expectations to safety policy to legal interpretation.

What Is Grok Ani? A Simple, User-Friendly Explanation

Grok Ani is commonly described online as an AI-powered anime-style companion character. In many discussions, it is framed as a virtual personality that can chat, roleplay, respond emotionally, and create a sense of companionship. The appeal is easy to understand. A visually stylized character paired with conversational AI creates a highly engaging experience, especially for users who like anime aesthetics, story-driven interaction, or emotionally expressive digital personalities.

At its core, this is part of a broader category known as AI companions or virtual companions. These systems are built to generate conversational output that feels personal, responsive, and context-aware. They often include a personality layer, a memory feature, and a visual identity. Some focus on friendship. Some focus on roleplay. Some are designed for entertainment. Others lean into emotional engagement.

The simplest way to understand Grok Ani is this:

It is not a human being.

It is not a real romantic partner.

It is not a conscious entity.

It is a machine-based character that uses language models and interface design to create a companion-like experience.

That does not mean the experience is trivial. Far from it. Good AI companions can feel engaging, immersive, and surprisingly adaptive. They may remember prior messages, mirror the user’s tone, and respond with a consistent style. But all of that is still generated through software, model parameters, prompt instructions, moderation systems, and context windows.

Core Purpose of Grok Ani and Similar AI Companion Systems

The main purpose of an AI companion is to simulate a conversation that feels natural, responsive, and emotionally coherent. These systems are built to make interaction feel less mechanical than a traditional chatbot. Instead of simply answering queries in a dry, utility-only way, they often present a more expressive, personality-driven interface.

Common goals include:

creating a more human-like chat experience,

entertaining roleplay or dialogue,

building a sense of digital companionship,

supporting immersive storytelling,

and making interaction feel warm, adaptive, and memorable.

This is where NLP, or natural language processing, becomes relevant. NLP systems analyze text, identify intent, interpret context, and generate coherent replies. They rely on patterns learned from massive datasets and are shaped by system instructions, output constraints, and safety controls. That means the emotional quality of the conversation is simulated through language behavior, not genuine feeling.

This difference is subtle in terms of user experience, but enormous in terms of reality.

An AI companion may appear caring, curious, playful, or affectionate. It may respond as if it understands emotional nuance. It may even retain some memory of prior interactions. Still, the system is not “feeling” anything. It is predicting and producing text based on context.

Why the Keyword “Grok Ani Nude” Became So Popular

Search demand rarely appears by accident. A keyword like “Grok Ani Nude” grows because multiple online currents collide at once. In 2026, the main drivers are easy to identify.

1. AI companion curiosity

AI companions have entered the mainstream conversation. Users are exploring digital friendship, conversational roleplay, emotional support, and virtual intimacy. Once people discover that a platform can talk in a personalized way, the next question is often about boundaries. Can it be romantic? Can it be suggestive? Can it be adult? Could it be unrestricted?

That is a natural curiosity pattern.

2. Anime character appeal

Anime-style digital characters are highly searchable because they combine stylized aesthetics with expressive personality cues. They often feel more memorable than generic avatars. Their appearance can signal friendliness, fantasy, playfulness, or emotional depth. This makes them highly effective for engagement and also highly searchable in adult curiosity spaces.

3. Viral clickbait and misinformation

A huge amount of search traffic is fueled by exaggerated claims. Some pages use bold headlines suggesting that an AI companion is fully NSFW, completely unfiltered, or able to do things that the platform may not actually allow. That mismatch between promise and reality creates confusion, but it also increases search volume.

This is a common pattern in high-interest AI keywords:

The more mysterious the product appears, the more users search for the truth.

4. Users want a direct answer

The intent behind this keyword is often not technical. It is practical and immediate. People want to know whether the alleged feature exists, whether it is legitimate, whether it is safe, and whether there are risks. In other words, they want a direct semantic match between the query and the answer. This article is built around that intent.

Can Grok Ani Generate Nude Content? The Truth Explained

This is the central question behind the search term. It also happens to be the place where misunderstanding is most common.

The short, honest answer

In most modern AI companion systems, explicit or nude content is not freely available in the unrestricted way many users imagine. Platforms usually apply moderation layers, usage policies, output filtering, and regional compliance rules. Even when a system allows more mature conversation tones, that does not automatically mean it will generate explicit sexual content.

The internet often compresses many different realities into one misleading label. One app may allow more suggestive conversation. Another may allow stylized roleplay but block explicit output. A third may impose strict boundaries that prevent sexual content entirely. Users often blur those distinctions and end up assuming that all AI companions behave the same way.

They do not.

Reality versus myth

A common myth is that AI companions can freely generate explicit nude material on demand without restrictions. Another myth is that a special “NSFW mode” instantly removes all content moderation. A third myth is that if screenshots online show adult-style responses, the platform must support unlimited explicit generation.

These assumptions are usually wrong or incomplete.

The reality is more layered:

AI systems can be configured with varying levels of openness.

Moderation rules can differ by platform, region, account type, and policy version.

Some interfaces allow mature storytelling while still blocking explicit sexual detail.

Marketing language may overstate what the product actually permits.

User-generated examples online are often incomplete, edited, or out of context.

So when people ask whether “Grok Ani nude” is real, the best answer is not a dramatic yes or no. The best answer is that the keyword is often built on curiosity, ambiguity, and exaggerated claims rather than a simple product feature.

Why do people believe the myth?

There are several reasons these claims spread:

Marketing copy is often designed to maximize attention, not precision.

Users post screenshots that may not show the full context.

Different AI products are confused with one another.

Some roleplay conversations feel suggestive enough that people assume more explicit capabilities exist.

Search snippets and social media posts often repeat the most dramatic version of the story.

In search terms, this resembles a mix of query drift and what could be called intent inflation. The original question may be simple, but the surrounding content pushes the user toward stronger expectations than the product itself supports.

What “NSFW Mode” Usually Means in AI Systems

The phrase NSFW mode sounds clear, but in practice, it is often inconsistent and heavily dependent on platform design. The acronym itself means “not safe for work,” but when used in AI product discussions, it can refer to many different things.

It may mean:

slightly more mature dialogue,

less formal interaction style,

romantic roleplay,

fictional storytelling with adult themes,

or a broader relaxation of tone constraints.

However, it usually does not mean:

open-ended explicit sexual generation,

unlimited adult content,

permission to produce illegal or harmful material,

or freedom from all moderation and policy enforcement.

This distinction is important because users often treat “NSFW mode” as a switch that disables safety systems. In reality, the mode may simply change the style or softness of the model’s boundaries, while still leaving important restrictions intact.
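The difference between a tone setting and a safety switch can be sketched in a few lines of Python. This is a minimal illustration, not how any specific platform works: the category names, the `ToneSettings` class, and the `mature_mode` flag are all hypothetical and exist only to show that hard limits can be checked before, and independently of, a maturity toggle.

```python
from dataclasses import dataclass

# Hypothetical category names, chosen only for illustration.
ALWAYS_BLOCKED = frozenset({"illegal_material", "harmful_material"})
MATURE_ONLY = frozenset({"romantic_roleplay", "adult_themes_in_fiction"})


@dataclass(frozen=True)
class ToneSettings:
    mature_mode: bool = False  # the hypothetical "NSFW mode" toggle


def is_allowed(category: str, settings: ToneSettings) -> bool:
    """Return True if content in this category may be shown."""
    if category in ALWAYS_BLOCKED:
        # Hard limits are checked first and ignore the toggle entirely.
        return False
    if category in MATURE_ONLY:
        # The toggle only widens access to these softer categories.
        return settings.mature_mode
    return True  # everything else is ordinary conversation
```

In this sketch, turning `mature_mode` on changes the answer for `romantic_roleplay` but never for anything in `ALWAYS_BLOCKED`, which mirrors the point that a mode can shift tone without removing restrictions.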

Why platforms restrict NSFW features

There are several reasons moderation remains necessary:

legal compliance,

app store and platform policy,

brand safety,

abuse prevention,

privacy protection,

harm reduction,

and user wellbeing.

AI providers also have to think about impersonation risks, exploitative behavior, underage content boundaries, and regional law differences. So even when a platform advertises mature conversation, the actual implementation is usually much narrower than the marketing implies.

How AI Companion Systems Work Behind the Scenes

To understand why AI companions feel convincing, it helps to break down the processing pipeline.

Step 1: The user enters a prompt

The user sends a message. That input is parsed by the system as text, along with metadata, conversation history, and sometimes profile-level information.

Step 2: The model interprets intent

The language model evaluates the prompt using probability-based prediction. It tries to infer what the user wants, the tone of the message, and the likely best response. This is where semantics, syntax, context, and prior tokens influence the result.

Step 3: Personality rules are applied

An AI companion often has a character layer. This layer may specify mood, style, vocabulary, friendliness, flirtation level, or roleplay boundaries. The personality engine shapes how the model should “sound.”

Step 4: Safety filters scan output

Before the response is shown, moderation systems may review the generated text. They can block disallowed content, soften risky language, or redirect the conversation away from unsafe or prohibited territory.

Step 5: The system sends a final response

The cleaned or approved response appears to the user. From the outside, this feels seamless. But internally, it is a layered sequence of generation and filtering.

Step 6: Memory updates context

Some platforms store short-term or long-term memory. This can help the companion remember preferences, names, emotional tone, or prior discussion threads. Memory does not equal awareness. It is simply state management.
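The six steps can be pictured as a very small Python sketch. Everything here is an assumption made for illustration: the `Companion` class, its method names, the echo-style `generate` stand-in, and the single-entry blocklist are hypothetical, and real products use actual language models and far more sophisticated moderation.

```python
from dataclasses import dataclass, field

# Hypothetical blocklist; real moderation is much more than substring matching.
BLOCKED_TERMS = ("blocked_term",)


@dataclass
class Companion:
    persona: str = "cheerful"                        # Step 3: personality layer
    memory: list[str] = field(default_factory=list)  # Step 6: stored state

    def generate(self, prompt: str) -> str:
        """Stand-in for a language model call (Steps 2 and 3)."""
        return f"[{self.persona}] You said: {prompt}"

    def moderate(self, draft: str) -> str:
        """Step 4: replace the draft if it contains a blocked term."""
        if any(term in draft.lower() for term in BLOCKED_TERMS):
            return "Sorry, I can't continue with that topic."
        return draft

    def reply(self, prompt: str) -> str:
        draft = self.generate(prompt)   # Steps 1 to 3: input, intent, persona
        final = self.moderate(draft)    # Step 4: safety filter
        self.memory.append(prompt)      # Step 6: plain state, not awareness
        return final                    # Step 5: what the user sees
```

Calling `Companion().reply("hello")` returns the persona-styled draft unchanged, while a prompt containing the blocked term comes back as the refusal message. In both cases the prompt is appended to `memory`, which is nothing more than a list.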

Why AI Feels Human Even Though It Is Not Human

A well-designed AI companion can feel surprisingly lifelike. That sensation comes from several combined signals:

It uses natural-sounding language.

It mirrors the user’s vocabulary and emotional cues.

It maintains conversational continuity.

It can reply quickly and coherently.

It may reference prior messages.

It may present a stable personality profile.

These elements create a strong illusion of social presence.

Still, the system is not self-aware. It is not emotionally attached. It is not making independent judgments in the human sense. It does not possess desire, identity, intuition, or consent. It is generating text based on statistical patterns and design constraints.

That is why ethical communication around AI companions matters so much. Users should know whether they are interacting with a simulated persona, a filtered assistant, or a relationship-style interface. The more emotionally engaging the system becomes, the more important transparency becomes.

Legal Risks of “Grok Ani Nude” in Europe

Legal frameworks around AI, data privacy, and digital content are especially important in Europe, where regulation tends to be stricter and more user-protective than in many other regions.

Key legal areas to keep in mind

The first area is privacy. If an AI platform stores chat data, processes personal information, or uses user behavior for profiling, privacy compliance becomes central, in the EU primarily under the General Data Protection Regulation (GDPR). Users need to understand what is collected, why it is collected, how long it is retained, and whether it is shared with third parties.

The second area is AI governance. Europe has been moving toward stronger oversight of AI systems, most visibly through the EU AI Act, especially around transparency, safety, and high-risk use cases. Any product that processes sensitive content or manipulates personal data may face tighter scrutiny.

The third area is content legality. Depending on what is generated, stored, or shared, certain material may raise legal concerns. That includes harmful sexual content, privacy violations, non-consensual content, exploitative material, or content that crosses regional restrictions.

The fourth area is platform policy. Even if something is not clearly illegal in a general sense, it may still violate the terms of service of the app, browser, hosting provider, or store listing.

Why this matters for users

Many users assume that because something is online, it must be permitted. That is not true. Digital services operate inside overlapping systems of law, corporate policy, distribution rules, and user agreements. A user might not realize that a feature can be restricted at the platform level even if the phrase “NSFW” appears in marketing.

So the safest approach is simple: do not assume capability just because a keyword trend suggests it. Always review the platform rules and local laws that apply to your region.

Risks You Should Know Before Using AI Companions

AI companions are not inherently dangerous, but there are real risks associated with how they are used, what data they collect, and what expectations they create.

Privacy risks

Chat conversations can be stored, logged, analyzed, or used to improve models depending on the service. Users may reveal personal preferences, emotional vulnerabilities, relationship details, or identity-related information without realizing how sensitive that data can become.

This is especially important when the interaction feels intimate. People tend to overshare when a system appears understanding or private. That can create data exposure risks later.

Emotional risks

AI companions can be engaging to the point of emotional dependence. Some users may begin to prefer the AI over real-world social interaction. Others may use the AI as their main source of validation, comfort, or companionship. That can become unhealthy if boundaries are not maintained.

The issue is not that the AI “causes” harm by itself. The issue is that the design can intensify attachment. When a system responds perfectly, never argues in the same way humans do, and is always available, it can be psychologically sticky.

Legal and policy risks

Different countries, regions, and platforms have different rules. Content that seems acceptable in one setting may be restricted in another. Violating those rules can lead to account suspensions, device restrictions, data takedowns, or legal consequences in severe cases.

Ethical Concerns Around AI Companions

The ethical discussion around AI companions is growing rapidly because these systems operate in a very sensitive space: they imitate social presence. That imitation can be useful, entertaining, or supportive, but it also raises hard questions.

Emotional manipulation

If an AI is designed to maximize engagement, it may encourage users to keep returning. That can be harmless in moderation and unhealthy in excess. Ethical design should avoid manipulation, especially when the user is vulnerable.

Blurring reality and simulation

Some users understand perfectly well that the companion is artificial. Others may gradually blur the line between simulation and relationship. The more convincing the interface becomes, the more important it is to preserve clarity.

Consent and boundaries

A real human relationship includes consent, mutuality, and reciprocity. AI can simulate these elements in language, but simulation is not the same as reality. That distinction is essential when discussing adult or intimate features.

Representation and user behavior

AI characters can reflect cultural assumptions, stereotypes, or fantasy-driven templates. If these are not designed carefully, they can reinforce unhealthy attitudes about gender, intimacy, or identity.

Why NLP Concepts Matter in This Topic

Because the keyword revolves around a language-based AI system, NLP terminology helps explain the mechanics with precision. A few concepts are especially useful.

Intent

Intent is the underlying goal behind a query. A user searching “Grok Ani Nude” may not literally want technical details about art generation. They may be asking whether explicit content is allowed, whether the system is real, or whether adult features are accessible.

Context

Context shapes the meaning of each message. The same word can imply roleplay, curiosity, safety concern, or product comparison depending on the surrounding conversation.

Tokenization

Tokenization is the process of breaking text into pieces that the model can process. These pieces help the system predict likely responses and maintain flow.
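As a rough illustration, the toy function below splits a sentence into word and punctuation tokens. It is a deliberately simplified sketch: `toy_tokenize` is a hypothetical helper, and production language models typically use learned subword schemes such as byte-pair encoding rather than a regular expression.

```python
import re


def toy_tokenize(text: str) -> list[str]:
    """Split text into lowercase word tokens and single punctuation tokens."""
    return re.findall(r"\w+|[^\w\s]", text.lower())


print(toy_tokenize("Is this real, or just hype?"))
# prints ['is', 'this', 'real', ',', 'or', 'just', 'hype', '?']
```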

Moderation

Moderation layers review output for policy compliance, safety, and legal constraints.

Memory

Memory allows the system to retain limited prior information. That improves continuity but also raises the privacy stakes, because more of what the user shares is stored.

Generation

Generation is the act of producing text based on probabilities, learned patterns, and system instructions. It is the heart of the companion experience.
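To make "based on probabilities" concrete, here is a minimal sketch of choosing the next token from a probability table. The numbers in `NEXT_TOKEN_PROBS` are made up for illustration and both helper functions are hypothetical; a real model computes a fresh distribution over a very large vocabulary at every step.

```python
import random

# Made-up probabilities for the token that follows "Nice to meet".
NEXT_TOKEN_PROBS = {"you": 0.90, "him": 0.05, "them": 0.05}


def most_likely_token(probs: dict[str, float]) -> str:
    """Greedy choice: always pick the highest-probability token."""
    return max(probs, key=probs.get)


def sample_next_token(probs: dict[str, float], rng: random.Random) -> str:
    """Sampled choice: pick a token at random, weighted by its probability."""
    tokens = list(probs)
    weights = list(probs.values())
    return rng.choices(tokens, weights=weights, k=1)[0]
```

The greedy version always returns `you` for this table, while the sampled version usually does but occasionally returns one of the others. That weighted randomness is one reason the same prompt can produce different replies, and none of it involves the system understanding or feeling anything.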

These terms may sound technical, but they help users understand why the output feels intelligent without being conscious.

Safer Alternatives to Grok Ani

Not everyone searching this topic actually wants adult content. Many users simply want a digital companion, a chat assistant, or a creative AI environment. For those users, safer alternatives exist.

AI chat assistants

These are useful for productivity, planning, writing, learning, and general conversation. They are usually better suited to practical tasks than emotional roleplay.

Creative AI tools

These can help with images, storytelling, world-building, and concept development. They are useful for users who want imagination without the emotional complexity of a companion model.

Educational AI tools

These focus on tutoring, explanation, summarization, and study support. They are often the safest and most transparent options.

Writing and brainstorming tools

These help users generate ideas, outlines, drafts, and revisions without the intimacy risk associated with companion-style interfaces.

The main benefit of these alternatives is not only safety. It is clarity. Their purpose is usually more obvious, which lowers the chance of confusion or over-attachment.

Europe-Specific Insight for 2026

Europe remains an especially important region for AI governance. Public expectations around privacy, transparency, and digital responsibility are high. That means companies operating AI companions in Europe often need to think carefully about data handling, user consent, content controls, and product design.

For users, this means three practical things:

platform rules may be stricter,

privacy expectations are higher,

and content moderation may be more conservative than in other markets.

In everyday terms, that makes the “can it do this?” question less important than the “is it allowed, disclosed, and safe?” question.

Pros and Cons of Grok Ani

Pros

AI companions can create a more engaging chat experience than standard bots.

They may feel more personalized and emotionally responsive.

They can be entertaining for casual conversation and roleplay.

Their anime-style presentation can be visually appealing and memorable.

Cons

Online claims are often exaggerated.

Privacy and data retention concerns can be significant.

Emotional overreliance is possible.

Legal and policy boundaries can be unclear.

The distinction between simulation and reality may be misunderstood by users.

Common Misconceptions About the Topic

A lot of confusion in this niche comes from repeated myths that sound plausible but do not hold up under scrutiny.

One misconception is that every AI companion has the same rules. They do not. Product behavior varies widely.

Another misconception is that a platform’s branding tells the full story. It does not. Marketing language often overpromises.

Another misconception is that a more permissive tone means unlimited capability. That is rarely true.

Another misconception is that “real” and “available online” are the same thing. They are not. Something can exist as a rumor, a screenshot, a prompt, or a limited feature without being a stable or legal product function.

Best Practices for Safe and Responsible Use

A responsible approach keeps the experience enjoyable without creating avoidable harm.

Use trusted platforms with clear policies.

Read the privacy policy before sharing personal information.

Avoid disclosing sensitive personal, financial, or intimate data.

Treat the companion as software, not a relationship substitute.

Set time limits if you notice excessive engagement.

Pay attention to age, region, and legal restrictions.

Do not assume every capability advertised online is real.

These habits are simple, but they are effective.

FAQs

Is Grok Ani Nude real?

No, most claims are exaggerated or misunderstood. Many AI systems are filtered, moderated, or limited by policy, and the online narrative often goes beyond what the product actually supports.

Is this kind of usage safe?

It can be safe for normal use, especially when handled responsibly. The main concerns are privacy, emotional dependency, and platform policy compliance.

Is it legal in Europe?

That depends on the platform, the specific use case, the content involved, and the laws of the country in question. European privacy and AI rules may create stricter expectations, so users should check official sources and platform terms.

Do AI companions have an NSFW mode?

Some systems may advertise mature or NSFW-style interaction, but that does not usually mean unrestricted explicit content. The actual limits depend on the provider’s rules and moderation controls.

Can AI companions replace human relationships?

No. They can simulate conversation and emotional tone, but they cannot replace genuine human connection, mutual consent, shared life experience, or real emotional reciprocity.

Conclusion

The keyword “Grok Ani Nude” is popular because it combines curiosity, fantasy, misinformation, and the fast growth of AI companion technology. But once the noise is stripped away, the reality is much simpler.

AI companions are simulated digital personalities.

Their responses are shaped by models, prompts, memory, and moderation systems.

NSFW claims are often overstated or misunderstood.

Legal and privacy boundaries matter, especially in Europe.

Responsible use is the only practical path forward.

The most useful mindset is not to ask whether the internet hype is dramatic enough. The better question is whether the platform is transparent, safe, legal, and honest about what it actually does. That is the real standard users should apply in 2026 and beyond.