Introduction

The landscape of artificial intelligence (AI) and natural language processing (NLP) is evolving at an unprecedented pace. Each year, new models deliver more nuanced, context-aware, and accurate responses. In this environment, two language models from Anthropic, Claude 2 and Claude 3 Haiku, have been widely adopted by developers, enterprises, and content creators. They use them to power intelligent chatbots, coding assistants, research tools, document analysis platforms, and creative applications.

Yet, a critical question persists for AI practitioners and decision-makers alike:

Is Claude 3 Haiku genuinely superior to Claude 2?

This guide compares the two models on computational efficiency, response latency, contextual understanding, pricing, real-world applications, and NLP benchmarks. By the end, you will be able to make an informed choice for your 2026 use cases.

What Is Claude AI?

Claude is a family of transformer-based large language models (LLMs) developed by Anthropic, a company focused on building safe and aligned AI. It competes with other leading LLMs such as ChatGPT, Google Gemini, and Grok AI, and offers strong natural language understanding, reasoning, and generation.

These models are commonly employed for:

- Text generation and content composition: Drafting documents, blog posts, or structured text.

- Question-answering systems: From FAQs to complex knowledge queries.

- Programmatic coding assistance: Autocomplete, debugging, test case generation, and refactoring.

- Document summarization and analysis: Condensing lengthy reports or academic papers.

- Enterprise-level conversational AI: Customer support, automated agents, and workflow automation.

A defining characteristic of Claude models is their large context window, which lets them process very long inputs in a single request. This matters for long-document comprehension and for reasoning over details spread across a whole text.

Evolution of Claude Models

To understand the differences between Claude 2 and Claude 3 Haiku, it helps to trace how the Claude models evolved.

Claude 2 (2023)

Released in July 2023, Claude 2 was a substantial upgrade over its predecessor. Its enhancements included:

- A context window of up to 100K tokens for very long inputs.

- Stronger logical reasoning and inference.

- Better document analysis, including multi-page summaries and insights.

- Higher-fidelity responses with less ambiguous output.

However, Claude 2 exhibited certain limitations:

- No multimodal support: input is text only.

- Relatively high operating costs for large-scale deployment.

- Slower throughput than more recent models.

Claude 2.1 (2023 Upgrade)

Claude 2.1, released in November 2023, addressed several of Claude 2's weaknesses:

- A context window doubled to 200K tokens.

- Lower hallucination rates, with fewer factually inaccurate statements.

- Improved accuracy in reasoning and content generation.

- Smoother conversational flow, making it better suited to extended dialogue.

Despite these improvements, Claude 2.1 was an incremental update within the same model generation, so its speed and efficiency gains were limited.

Claude 3 Family (2024+)

In March 2024, Anthropic launched the Claude 3 family, designed to balance performance and cost-efficiency. It includes three primary variants:

| Model | Primary Use Case | Strengths |
|---|---|---|
| Opus | Deep reasoning | Superior reasoning and analytical capabilities |
| Sonnet | Balanced tasks | Well-rounded performance for general NLP |
| Haiku | High-speed, low-cost | Optimized for latency, throughput, and efficiency |

What Is Claude 3 Haiku?

Claude 3 Haiku is the fastest and most cost-efficient model in the Claude 3 family. It was explicitly designed for:

- Rapid response generation, suitable for real-time systems.

- High throughput for batch or live queries.

- Low-cost operation, which makes heavy usage affordable.

- Multimodal input: it accepts images as well as text and responds with text.

This combination makes Claude 3 Haiku ideal for:

- Customer service chatbots that require instant responses.

- Content creation and automation platforms.

- Real-time AI agents for research or enterprise workflows.

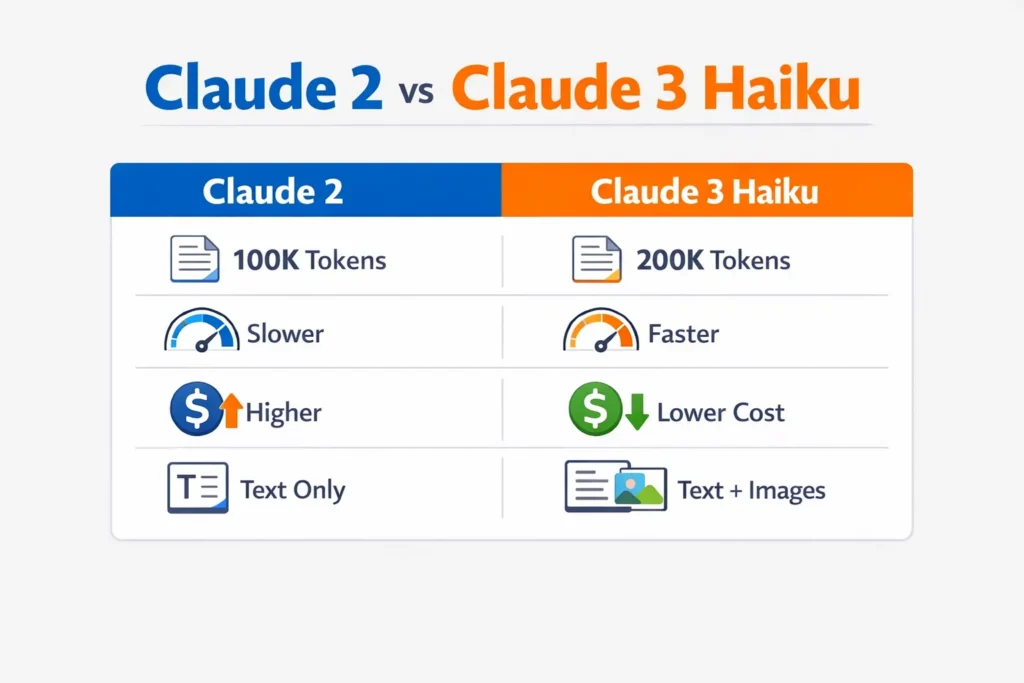

Claude 2 vs Claude 3 Haiku – Key Differences

A comparative overview highlights the main differences between these models:

| Feature | Claude 2 | Claude 3 Haiku |
|---|---|---|
| Release Year | 2023 | 2024 |
| Context Window | 100K tokens | 200K tokens |
| Multimodal Support | ❌ No | ✅ Yes (Text + Image) |
| Speed | Moderate | Very Fast |
| Cost Efficiency | Medium | High |
| Optimal Use Case | In-depth reasoning | Real-time applications |

Why Context Window Matters

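The context window is the maximum amount of text, measured in tokens, that a model can consider in a single request. It covers both the prompt and the response the model generates. Input that exceeds the limit has to be split into chunks or summarized before the model can use it.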

- Claude 2 supports up to 100K tokens. That is enough for long documents but not for extremely large inputs.

- Claude 3 Haiku doubles this to 200K tokens (roughly 150,000 words). That is enough to take in very long texts in one request, such as:

  - Legal contracts and case law

  - Scientific research papers

  - Large codebases

  - Full-length novels and manuals

For long-document workflows, the larger window reduces the need for chunking. The model sees the whole text at once, which helps it keep entities consistent and resolve cross-references in its output.
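The sketch below makes the 100K versus 200K difference concrete by estimating whether a document fits each window. It assumes roughly 0.75 English words per token, based on Anthropic's description of 200K tokens as about 150,000 words. Actual counts depend on the tokenizer, so treat the output as an estimate. The function names and the 4,000-token output reserve are illustrative choices, not part of any Anthropic API.

```python
# Rough context-window fit check. Estimates only: real token counts come
# from the model's tokenizer, not from word counts.

# ASSUMPTION: about 0.75 English words per token (200K tokens ~ 150,000 words).
WORDS_PER_TOKEN = 0.75

# Published context window sizes, in tokens.
CONTEXT_WINDOWS = {
    "Claude 2": 100_000,
    "Claude 2.1": 200_000,
    "Claude 3 Haiku": 200_000,
}


def estimate_tokens(text: str) -> int:
    """Estimate the token count from the whitespace-separated word count."""
    return round(len(text.split()) / WORDS_PER_TOKEN)


def fits(text: str, model: str, reserve_for_output: int = 4_000) -> bool:
    """Return True if the estimated prompt plus an output reserve fits.

    The window covers the prompt and the response together, so some
    room is held back for the answer (the 4,000-token default is arbitrary).
    """
    return estimate_tokens(text) + reserve_for_output <= CONTEXT_WINDOWS[model]


if __name__ == "__main__":
    manuscript = "word " * 120_000  # stand-in for a ~120,000-word book
    for name in CONTEXT_WINDOWS:
        print(f"{name}: ~{estimate_tokens(manuscript):,} tokens, fits={fits(manuscript, name)}")
```

Under these assumptions, a 120,000-word book comes to about 160,000 tokens. That is too large for Claude 2's window but fits within Claude 2.1's and Claude 3 Haiku's.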

Speed and Latency: Real Performance

A defining advantage of Claude 3 Haiku lies in its reduced latency. Faster models enable:

- Real-time conversational agents

- Interactive coding assistants

- Voice-enabled AI tools

- Live enterprise applications

Performance comparison:

- Claude 2: Moderate response times and a higher price per token.

- Claude 3 Haiku: Rapid responses and a low price per token, so it scales to high-volume queries.

This speed advantage is critical in scenarios requiring instant feedback or high-frequency transactions.
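The speed comparison above is easiest to verify with your own prompts. Below is a minimal timing harness. It assumes each model call is wrapped in a function that takes a prompt and returns text. `fake_model` is a hypothetical stand-in that just sleeps; it is not an Anthropic API.

```python
import math
import statistics
import time
from typing import Callable, Dict, List


def measure_latency(call: Callable[[str], str], prompts: List[str]) -> Dict[str, float]:
    """Time each call sequentially and summarize wall-clock latency in ms."""
    if not prompts:
        raise ValueError("need at least one prompt")
    samples = []
    for prompt in prompts:
        start = time.perf_counter()
        call(prompt)
        samples.append((time.perf_counter() - start) * 1000.0)
    samples.sort()
    # Nearest-rank 95th percentile over the sorted samples.
    p95 = samples[math.ceil(0.95 * len(samples)) - 1]
    return {
        "median_ms": statistics.median(samples),
        "p95_ms": p95,
        "max_ms": samples[-1],
    }


def fake_model(prompt: str) -> str:
    """HYPOTHETICAL stand-in for a real API call; swap in your own client code."""
    time.sleep(0.01)  # pretend the model takes about 10 ms
    return prompt.upper()


if __name__ == "__main__":
    print(measure_latency(fake_model, ["Hello"] * 20))
```

Run it once per model with identical prompts. For chat interfaces that stream output, time to first token is often the more telling figure. This sketch measures only total request time.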

Pricing Differences

Cost efficiency is crucial for enterprises and developers aiming to scale AI operations. A comparative summary:

| Model | Input Cost (USD per million tokens) | Output Cost (USD per million tokens) |
|---|---|---|
| Claude 2 / 2.1 | $8.00 | $24.00 |
| Claude 3 Haiku | $0.25 | $1.25 |

Haiku’s optimized architecture reduces operational expenses while maintaining competitive NLP performance, making it a practical choice for budget-conscious deployments.
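The sketch below shows how per-token prices compound at volume. It estimates monthly spend from a request count and a typical token profile. The prices are hardcoded assumptions taken from Anthropic's published list prices (USD per million tokens), so check them against the current pricing page. The traffic figures in the example are made up for illustration.

```python
# ASSUMED list prices in USD per million tokens: (input, output).
# Verify against Anthropic's current pricing before relying on them.
PRICES_PER_MTOK = {
    "Claude 2.1": (8.00, 24.00),
    "Claude 3 Haiku": (0.25, 1.25),
}


def monthly_cost(model: str, requests: int, input_tokens: int, output_tokens: int) -> float:
    """Estimate monthly spend in USD for a fixed per-request token profile."""
    input_price, output_price = PRICES_PER_MTOK[model]
    total_input = requests * input_tokens
    total_output = requests * output_tokens
    return (total_input * input_price + total_output * output_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 1M requests/month, 500 tokens in, 200 tokens out.
    for name in PRICES_PER_MTOK:
        print(f"{name}: ${monthly_cost(name, 1_000_000, 500, 200):,.2f}")
```

With those assumed prices, the hypothetical workload costs $8,800 per month on Claude 2.1 and $375 on Claude 3 Haiku.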

Benchmarks: Which Is Smarter?

Benchmarks provide quantifiable measures of AI reasoning, comprehension, and task execution.

- MMLU (Massive Multitask Language Understanding) – General Reasoning Test:

| Model | MMLU Score (%) |
|---|---|
| Claude 2 | 78.5 |
| Claude 3 Haiku | 76.7 |

Interpretation:

- Claude 2 scores 1.8 points higher, a slight edge in knowledge-heavy reasoning tasks.

- Haiku remains highly competitive, especially for pragmatic, real-time NLP applications.

Multimodal Support

Claude 3 Haiku accepts images alongside text in the same prompt, unlocking applications such as:

✔ Image description generation

✔ Visual question answering

✔ Diagram interpretation

✔ Enhanced multimodal research

In contrast, Claude 2 is restricted to textual input, limiting its versatility in multimodal NLP pipelines.
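In Anthropic's Messages API, an image is passed as a content block alongside text inside a single user message. The sketch below builds that request body as a plain dictionary, using a base64-encoded image source. It makes no network call. The model ID `claude-3-haiku-20240307` and the `max_tokens` value are assumptions to adjust for your account, and the helper names are hypothetical.

```python
import base64

# Image formats the Messages API accepts as base64 sources.
SUPPORTED_MEDIA_TYPES = {"image/jpeg", "image/png", "image/gif", "image/webp"}


def image_question(image_bytes: bytes, media_type: str, question: str) -> dict:
    """Build one user message that pairs an image with a text question."""
    if media_type not in SUPPORTED_MEDIA_TYPES:
        raise ValueError(f"unsupported media type: {media_type}")
    return {
        "role": "user",
        "content": [
            {
                "type": "image",
                "source": {
                    "type": "base64",
                    "media_type": media_type,
                    "data": base64.b64encode(image_bytes).decode("ascii"),
                },
            },
            {"type": "text", "text": question},
        ],
    }


def request_body(image_bytes: bytes, media_type: str, question: str,
                 model: str = "claude-3-haiku-20240307") -> dict:
    """Assemble a request body; the model ID default is an assumption."""
    return {
        "model": model,
        "max_tokens": 512,
        "messages": [image_question(image_bytes, media_type, question)],
    }


if __name__ == "__main__":
    body = request_body(b"not-a-real-png", "image/png", "Describe this diagram.")
    print(body["messages"][0]["content"][1])
```

With the official `anthropic` Python SDK, these fields map onto the keyword arguments of `client.messages.create(model=..., max_tokens=..., messages=...)`. Claude 2 has no equivalent, since it accepts text only.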

Claude 2.1 vs Claude 3 Haiku

When comparing the improved Claude 2.1 with Haiku:

| Feature | Claude 2.1 | Claude 3 Haiku |
|---|---|---|
| Context Window | 200K tokens | 200K tokens |
| Multimodal | ❌ No | ✅ Yes |
| Speed | Moderate | Very Fast |
| Cost | Higher | Lower |
| Output Quality | Refined | Optimized for efficiency |

Even with Claude 2.1’s refinement, Haiku surpasses it in speed, scalability, and cost efficiency, making it ideal for real-time NLP solutions.

Real-World Performance

Coding Assistance

AI-powered coding relies on predictive NLP, syntax understanding, and error detection.

- Claude 2: Provides detailed explanations and reasoning about code structure.

- Claude 3 Haiku: Generates quick suggestions, better suited for live coding environments and interactive IDEs.

Writing and Content Generation

- Claude 2: Excels at essays, in-depth analysis, and analytical writing.

- Claude 3 Haiku: Optimized for rapid content creation, short-form content, and high-throughput drafting workflows.

Research & Document Analysis

Both models are effective at large-scale text processing:

- Claude 2: Superior for extracting deep insights from complex materials.

- Claude 3 Haiku: Optimized for speed and high-level overviews, suitable for summarizing many documents quickly.

Enterprise Automation

Use cases include:

- Email and workflow automation

- Customer support AI

- Knowledge base maintenance

- Ticketing and operational systems

Here, Haiku’s low cost and high throughput enable organizations to scale volume-intensive NLP operations.
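High-volume automation usually means sending many independent requests in parallel while staying under a rate limit. Below is a minimal sketch using Python's `asyncio`. It assumes an async function that wraps one model call and caps the number of in-flight requests with a semaphore. `classify_ticket` is a hypothetical stand-in that applies a keyword rule; it is not a real model.

```python
import asyncio
from typing import Awaitable, Callable, List


async def run_batch(items: List[str],
                    handler: Callable[[str], Awaitable[str]],
                    max_concurrency: int = 8) -> List[str]:
    """Process items concurrently, capping the number of in-flight calls.

    Results are returned in the same order as `items`.
    """
    semaphore = asyncio.Semaphore(max_concurrency)

    async def guarded(item: str) -> str:
        async with semaphore:  # waits here if the cap is already reached
            return await handler(item)

    return list(await asyncio.gather(*(guarded(item) for item in items)))


async def classify_ticket(text: str) -> str:
    """HYPOTHETICAL stand-in for an async model call (e.g. to Claude 3 Haiku)."""
    await asyncio.sleep(0.01)  # simulate network latency
    return "billing" if "invoice" in text.lower() else "general"


if __name__ == "__main__":
    tickets = ["Invoice is wrong", "Cannot log in", "Where is my invoice?"]
    print(asyncio.run(run_batch(tickets, classify_ticket, max_concurrency=2)))
```

Set `max_concurrency` from your account's actual rate limits; the values here are arbitrary.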

Summary – When to Choose Which Model

Claude 2 is ideal for:

- Complex reasoning

- Detailed, analytical content

- Deep research and document analysis

Claude 3 Haiku is ideal for:

- Real-time applications

- Rapid responses

- Large-scale deployments

- Cost-conscious operations
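The rules of thumb above can be written as a small routing function. Several parts of this sketch are assumptions for illustration: the task flags, the precedence (image and latency needs override reasoning depth), and the model ID strings. Older IDs such as Claude 2.1's may no longer be served, so check Anthropic's current model list.

```python
from dataclasses import dataclass

# ASSUMED model identifiers; confirm against Anthropic's current model list.
HAIKU = "claude-3-haiku-20240307"
CLAUDE_2 = "claude-2.1"


@dataclass
class Task:
    needs_images: bool = False       # prompt includes images
    latency_sensitive: bool = False  # e.g. live chat or IDE suggestions
    deep_reasoning: bool = False     # e.g. detailed analytical writing


def choose_model(task: Task) -> str:
    """Pick a model using this article's rules of thumb."""
    if task.needs_images:
        return HAIKU  # Claude 2 accepts text only
    if task.latency_sensitive:
        return HAIKU  # speed takes priority over a small reasoning edge
    if task.deep_reasoning:
        return CLAUDE_2
    return HAIKU  # default to the faster, cheaper model


if __name__ == "__main__":
    print(choose_model(Task(deep_reasoning=True)))
    print(choose_model(Task(latency_sensitive=True, deep_reasoning=True)))
```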

FAQ

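Q: Is Claude 3 Haiku better than Claude 2?
A: Haiku excels in speed, cost-efficiency, and multimodal processing. Claude 2 is marginally better for deep reasoning.

Q: Can Claude 3 Haiku process images?
A: Yes. Haiku accepts multimodal prompts that combine text and images.

Q: Which model is better for coding?
A: Claude 3 Haiku is superior for real-time coding assistance.

Q: Is Claude 3 Haiku cheaper than Claude 2?
A: Yes. Claude 3 Haiku is designed to minimize operational costs.

Q: How large is Claude 3 Haiku's context window?
A: It supports up to 200K tokens, twice that of Claude 2.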

Conclusion

In 2026, both Claude 2 and Claude 3 Haiku represent powerful NLP engines, but they serve slightly different purposes:

- Claude 2 is best suited for deep analytical reasoning, lengthy research tasks, and situations requiring high-fidelity, detailed text generation. Its slightly higher reasoning benchmark scores make it valuable for academics, researchers, and enterprise analysts.

- Claude 3 Haiku, on the other hand, is optimized for speed, scalability, and multimodal applications. It excels in real-time AI systems, live coding assistance, customer support, and high-volume content generation. It also remains cost-efficient and can handle large documents and image inputs.

Ultimately, the choice depends on your priorities: deep analytical depth versus fast, efficient, and multimodal capabilities. For most modern enterprises and real-time NLP applications, Claude 3 Haiku provides the best balance of performance, speed, and cost-effectiveness.