Introduction

The artificial intelligence ecosystem has undergone rapid transformation over the past few years, yet one comparison remains surprisingly neglected: DeepSeek V3.1 vs Llama 1 Series. While most digital publications and comparison blogs concentrate heavily on newer iterations like Llama 2 or Llama 3, very few provide a historically grounded, architecture-first evaluation of Llama 1 against modern models. This gap creates a powerful opportunity for deeper understanding and strategic decision-making.

For developers, SaaS founders, AI engineers, and enterprise stakeholders across Europe and global markets, selecting the right large language model (LLM) is no longer just about raw accuracy or leaderboard scores. Instead, it involves a multidimensional evaluation including computational efficiency, deployment adaptability, cost optimization, latency, scalability, and real-world performance under production conditions.

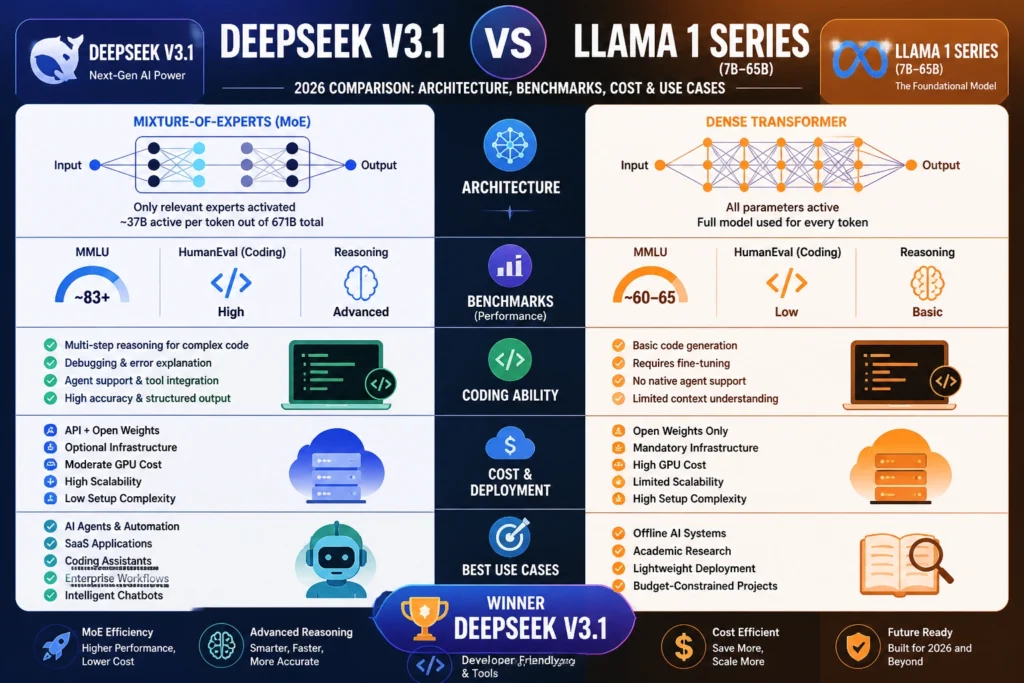

This comprehensive guide delivers a granular, technically enriched breakdown of both models. We explore the fundamental contrast between Mixture-of-Experts (MoE) architectures and dense transformer frameworks, interpret benchmarks beyond surface-level numbers, and evaluate coding capabilities, infrastructure requirements, and deployment economics.

By the conclusion of this guide, you will clearly understand:

- Which model aligns better with startup vs enterprise environments

- Which system delivers superior cost efficiency in 2026

- Whether DeepSeek V3.1 truly surpasses Llama 1 in practical scenarios

- How to select the optimal model based on your specific operational requirements

Let’s begin an in-depth exploration.

What is DeepSeek V3.1?

DeepSeek V3.1 represents a next-generation open-weight large language model engineered for high computational efficiency, advanced reasoning capability, and scalable deployment.

Key Features

- Mixture-of-Experts (MoE) architecture

- Approximately 671 billion total parameters with ~37 billion active per token

- Hybrid reasoning modes (analytical vs direct response)

- Strong programming and code-generation capabilities

- Native tool integration and API compatibility

- Long-context understanding and extended token processing

Why It Matters

DeepSeek V3.1 introduces a paradigm shift in LLM design by decoupling performance from linear cost scaling. Instead of activating the entire neural network during inference, it selectively engages only relevant expert subnetworks. This selective activation mechanism dramatically enhances efficiency while maintaining high-level reasoning accuracy.

In simpler terms, DeepSeek V3.1 achieves greater intelligence per computational unit, making it significantly more efficient for modern AI workloads.

What is the Llama 1 Series?

Llama 1 is a family of dense transformer-based language models introduced in 2023, designed primarily for research, experimentation, and controlled deployment scenarios.

Model Sizes

- 7B parameters

- 13B parameters

- 33B parameters

- 65B parameters

Key Characteristics

- Dense transformer architecture

- Full parameter activation during inference

- Initially released with restricted commercial licensing

- Requires manual optimization for production environments

- Designed for reproducibility and academic exploration

Why It Still Matters in 2026

Despite being outdated compared to modern architectures, Llama 1 remains relevant due to its lightweight design and accessibility. It remains particularly useful for:

- Offline AI implementations

- Academic experimentation

- Benchmark baselines for comparative research

- Resource-constrained environments

In essence, Llama 1 serves as a foundational reference point in the evolution of LLMs.

Architecture Comparison: MoE vs Dense Transformer

This is the most critical technical distinction between DeepSeek V3.1 and Llama 1.

| Feature | DeepSeek V3.1 | Llama 1 |

| Architecture | Mixture-of-Experts (MoE) | Dense Transformer |

| Parameter Utilization | Sparse activation | Full activation |

| Computational Efficiency | High | Low |

| Scalability | Highly scalable | Limited scalability |

| Inference Cost | Reduced | Elevated |

Deep Insight

- MoE (Mixture-of-Experts) operates through selective computation. Only relevant neural pathways are activated for each token, enabling optimized performance.

- Dense Transformers rely on brute-force processing, activating all parameters regardless of task complexity.

This difference fundamentally alters how resources are consumed.

Verdict: DeepSeek V3.1 significantly outperforms in efficiency, scalability, and resource utilization

Benchmark Performance

Many comparison articles present benchmark numbers without context, leading to misleading conclusions. Let’s interpret them properly.

Key Benchmarks

| Benchmark | DeepSeek V3.1 | Llama 1 |

| MMLU | ~83+ | ~60–65 |

| HumanEval (Coding) | High | Low |

| Reasoning | Advanced | Basic |

What These Benchmarks Actually Represent

MMLU (Massive Multitask Language Understanding)

This evaluates general intelligence across diverse academic disciplines, including mathematics, law, medicine, and the humanities.

DeepSeek demonstrates substantial improvement, indicating broader cognitive capability.

HumanEval (Coding Benchmark)

Measures the ability to generate correct and functional code snippets.

DeepSeek excels due to structured Reasoning and multi-step problem-solving abilities.

Real-World Interpretation

- DeepSeek V3.1 = production-ready, enterprise-grade AI system

- Llama 1 = experimental baseline model for controlled testing

Benchmarks alone do not define usability—but they strongly indicate practical readiness.

Coding & Developer Experience

DeepSeek V3.1

- Multi-step reasoning for programming tasks

- Automated debugging assistance

- Agent-based workflows

- API and plugin integrations

- Context-aware code generation

Llama 1

- Basic code completion

- Requires extensive fine-tuning

- No native agent framework

- Limited contextual awareness

Verdict: DeepSeek V3.1 dominates in developer productivity and engineering workflows

Cost & Deployment Analysis

Often, the decisive factor in model selection.

Cost Breakdown

| Factor | DeepSeek V3.1 | Llama 1 |

| Access | API + Open Weights | Open Weights |

| Infrastructure Requirement | Optional | Mandatory |

| GPU Cost | Moderate | High |

| Scalability Cost | Efficient | Expensive |

| Setup Complexity | Low | High |

Real Insight for European Teams

Organizations across Germany, France, and the UK increasingly favor API-first architectures due to:

- Reduced infrastructure burden

- Faster deployment cycles

- Lower maintenance overhead

Strategic Recommendation

- Small teams → DeepSeek API

- Large enterprises → Hybrid deployment (API + self-hosted models)

Real-World Use Cases

DeepSeek V3.1

- AI-powered autonomous agents

- SaaS automation pipelines

- Coding assistants and copilots

- Enterprise workflow orchestration

- Intelligent chatbots with reasoning capability

Llama 1

- Offline AI systems

- Academic or experimental research

- Lightweight deployment environments

- Cost-sensitive projects with existing infrastructure

Head-to-Head Comparison Table

| Feature | DeepSeek V3.1 | Llama 1 |

| Architecture | MoE | Dense |

| Reasoning | Advanced | Basic |

| Coding | High-level | Limited |

| Efficiency | High | Low |

| Deployment | API + Local | Local Only |

| Cost Efficiency | High | Medium |

| Scalability | Excellent | Restricted |

Pros & Cons

DeepSeek V3.1

Pros

- Superior efficiency via MoE

- Advanced reasoning capabilities

- Strong coding performance

- Reduced inference cost

- Agent-ready infrastructure

Cons

- API costs can increase at scale

- Complex architecture for beginners

Llama 1

Pros

- Fully open-weight model

- Suitable for offline deployment

- Research-friendly

- No API dependency

Cons

- Outdated performance metrics

- High GPU requirements

- Limited coding capability

- Lack of native tool integration

How to Use These AI Models

Using DeepSeek V3.1

- Access through API platforms

- Select an appropriate model variant

- Integrate into the backend or applications

- Utilize prompts or agent frameworks

Using Llama 1

- Download model weights

- Configure GPU environment

- Use frameworks like PyTorch

- Fine-tune for specific use cases

Tips to Choose the Right AI Model

- Prioritize use-case alignment over hype

- Evaluate the total cost of ownership (TCO)

- Analyze latency and throughput

- Test models using real-world prompts

- Avoid relying solely on outdated benchmarks

Europe-Specific Insights

AI adoption in Europe is shaped by regulatory and infrastructural considerations, such as:

- GDPR compliance

- Data sovereignty requirements

- Infrastructure cost constraints

DeepSeek is ideal for cloud-native organizations, whereas Llama 1 remains relevant for on-premise deployments in regulated sectors.

FAQs

A: Yes, in almost all modern benchmarks, DeepSeek V3.1 outperforms Llama 1 in reasoning, coding, and efficiency.

A: Llama 1 is cheaper upfront (no API), but infrastructure costs can make it expensive long-term.

A: Yes, especially for offline AI, research, and lightweight deployments.

A: DeepSeek V3.1 is significantly better for coding and development workflows.

A: Startups should prefer DeepSeek due to lower setup complexity and faster deployment.

Conclusion

When conducting a comprehensive evaluation of DeepSeek V3.1 vs Llama 1 Series, the conclusion in 2026 is both clear and evidence-driven:

DeepSeek V3.1 emerges as the superior model for modern AI applications.

Its Mixture-of-Experts architecture, enhanced reasoning Capabilities, and optimized cost-performance ratio make it the preferred choice for developers, startups, and enterprises seeking scalable AI solutions.

However, Llama 1 continues to hold niche value in offline deployments, academic experimentation, and budget-constrained environments.