Introduction

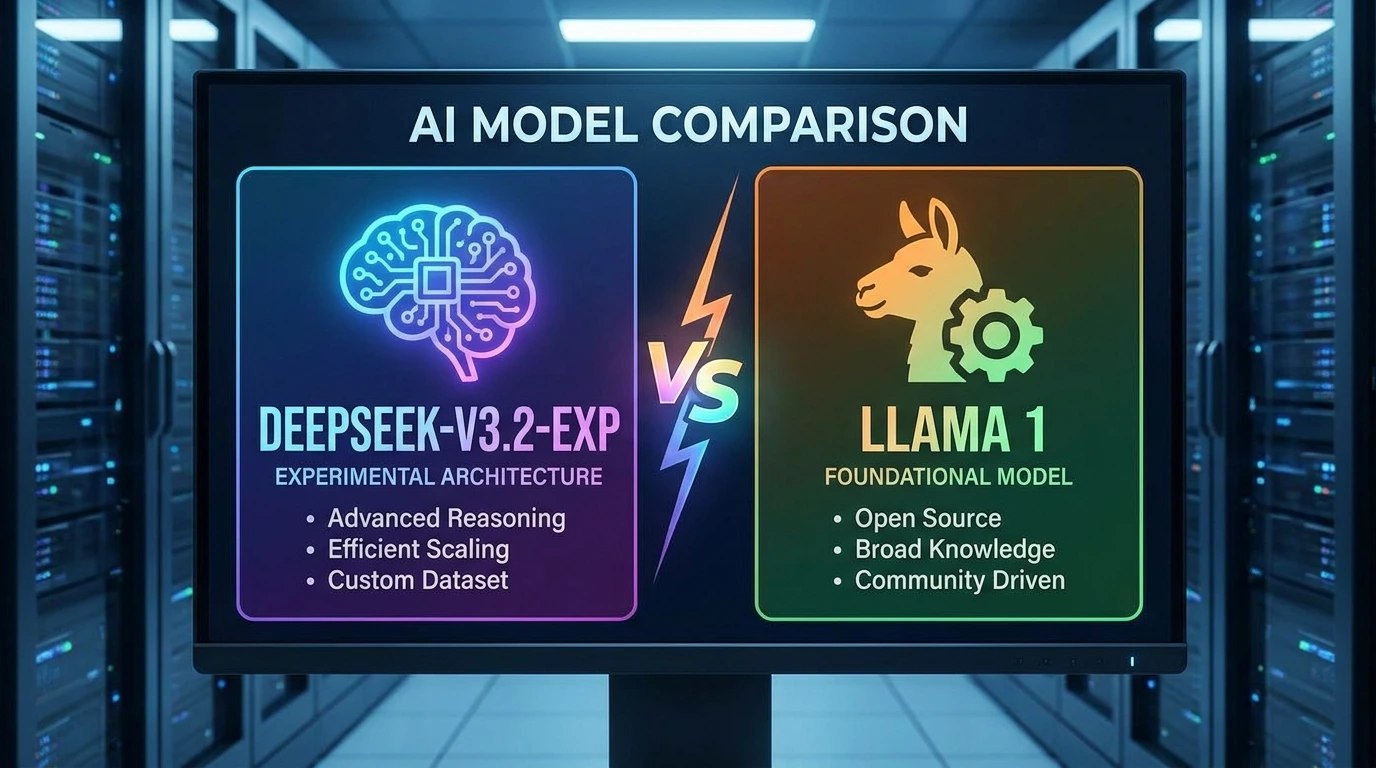

The comparison between DeepSeek V3.2 Exp and Llama 1 is more than an evaluation of two language models. It traces the evolution of natural language processing (NLP) through architectural scaling and efficiency optimization in large language models (LLMs).

In the landscape of 2026, artificial intelligence models are no longer judged solely by their ability to generate coherent sentences. Instead, evaluation has shifted toward:

- Semantic understanding depth

- Token-level efficiency

- Contextual memory retention

- Computational cost per inference

- Real-world task generalization

On one side, Llama 1 represents an early-stage transformer ecosystem that helped democratize access to large-scale NLP models. It is a foundational architecture that influenced later open-source LLM development.

On the other side, DeepSeek V3.2 Exp represents a modern optimization-driven paradigm using sparse activation, mixture-of-experts routing, and extended context windows designed for enterprise-level intelligence systems.

This article provides a deep NLP-centric breakdown of both models, covering architecture, embedding behavior, reasoning capacity, cost efficiency, and real-world deployment scenarios.

DeepSeek V3.2 Exp: Modern Sparse Intelligence System

DeepSeek V3.2 Exp is designed around efficiency-aware neural computation and large-scale contextual reasoning.

Core Characteristics:

- Advanced token routing via Mixture-of-Experts (MoE)

- Sparse activation mechanism for computational optimization

- Extended context memory handling (long document coherence)

- High-level semantic abstraction across multi-step reasoning chains

Key Technical Profile:

- Release Era: 2025 generation model

- Architecture Type: Sparse Transformer (MoE-based system)

- Context Processing: Very large-scale (long document retention)

- Optimization Focus: Latency reduction + inference efficiency

Interpretation:

Instead of running the entire neural network for every token, DeepSeek activates only a few specialized sub-networks (experts) per token. This cuts computation per token while letting individual experts specialize.

Llama 1: Foundational Dense Transformer Model

Llama 1 represents an earlier generation of transformer-based NLP systems developed with simplicity and accessibility in mind.

Core Characteristics:

- Dense computation: all parameters are used for every token

- Full token interaction across layers

- Fixed context limitations

- Strong baseline language modeling ability

Key Technical Profile:

- Release Era: 2023 generation model

- Architecture Type: Dense Transformer

- Model Sizes: Multi-scale parameter variants (7B to 65B)

- Context Handling: Limited token window (2,048 tokens)

Interpretation:

Every input token interacts with the full neural network, resulting in high computational cost but stable linguistic output.

Deep Architecture Comparison

Understanding transformer architecture is essential in evaluating DeepSeek V3.2 Exp vs Llama 1 from an NLP engineering perspective.

DeepSeek V3.2 Exp Architecture

DeepSeek V3.2 Exp uses a Mixture-of-Experts (MoE) architecture.

Internal Structure:

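- Hundreds of billions of total parameters, spread across many expert sub-networks

- Only a subset of parameters is activated per token

- Sparse attention (DeepSeek Sparse Attention, DSA), where each token attends to a selected subset of earlier tokens rather than all of them

- Dynamic expert routing: a learned router scores the experts for each token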
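
The sparse attention idea can be illustrated with a toy top-k variant. This is a minimal sketch of the general technique, not DeepSeek's implementation: the function name `sparse_attention` and all vectors are made up for illustration, and the selection step here reuses the full dot-product scores, whereas a production system would rely on a cheaper separate scorer to pick positions.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def sparse_attention(query, keys, values, top_k):
    """Attend to only the top_k highest-scoring keys instead of all of them."""
    scores = [dot(query, key) for key in keys]
    # Indices of the top_k best-scoring positions (ties keep original order).
    keep = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Softmax over the kept positions only; everything else gets zero weight.
    m = max(scores[i] for i in keep)
    weights = {i: math.exp(scores[i] - m) for i in keep}
    total = sum(weights.values())
    dim = len(values[0])
    out = [0.0] * dim
    for i, w in weights.items():
        for d in range(dim):
            out[d] += (w / total) * values[i][d]
    return out, sorted(keep)

# Illustrative data: four cached positions, one query.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
values = [[1.0], [2.0], [3.0], [4.0]]
out, kept = sparse_attention([1.0, 1.0], keys, values, top_k=2)
print(kept, out)
```

With `top_k` fixed, the softmax and weighted sum touch a constant number of positions per query no matter how long the sequence grows.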

Behavior:

When a prompt is processed:

- Input tokens are embedded into a vector space

- A router network scores the available experts for each token

- Only relevant expert subnetworks activate

- Output is generated through optimized aggregation
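
The steps above can be sketched in a few lines. This is a toy illustration that assumes a softmax router with top-k selection, a common MoE design; the names `route_top_k` and `moe_layer`, the expert functions, and the router weights are all hypothetical and not taken from DeepSeek's code.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(token_vec, router_weights, k):
    """Score every expert for one token and keep the k best.

    router_weights holds one weight vector per expert (made-up values here).
    Returns (expert_index, gate) pairs whose gates sum to 1.
    """
    logits = [sum(w * x for w, x in zip(row, token_vec)) for row in router_weights]
    probs = softmax(logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_layer(token_vec, router_weights, experts, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    out = [0.0] * len(token_vec)
    for idx, gate in route_top_k(token_vec, router_weights, k):
        expert_out = experts[idx](token_vec)
        out = [o + gate * e for o, e in zip(out, expert_out)]
    return out

# Four toy "experts": each one just scales the input by a different factor.
experts = [lambda v, s=s: [s * x for x in v] for s in (1.0, 2.0, 3.0, 4.0)]
router_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]

token = [2.0, 1.0]
chosen = route_top_k(token, router_weights, k=2)
output = moe_layer(token, router_weights, experts, k=2)
print(chosen, output)
```

Only two of the four experts run for this token, so half of the expert parameters are never touched. That is the source of the per-token savings described above.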

Key Advantages:

- Reduces computational redundancy

- Improves semantic specialization

- Handles long-context dependencies efficiently

- Enhances multi-step reasoning accuracy

Conceptually:

Instead of “thinking with the whole brain,” it uses task-specific neural regions dynamically.

Llama 1 Architecture

Llama 1 follows a traditional dense transformer design.

Internal Structure:

- Every parameter is activated for every token

- Standard multi-head attention layers

- Quadratic complexity in attention computation

- Uniform processing across all inputs

Behavior:

- Token embedding generation

- Full attention matrix computation

- Each layer in turn processes the entire input sequence

- Final probabilistic token prediction
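
To make the quadratic cost concrete, here is a minimal sketch of dense causal self-attention for a single head in plain Python. It is an illustration under simplifying assumptions (no learned projections, no batching), and the helper names `causal_self_attention` and `dense_score_count` are invented for this example.

```python
import math

def causal_self_attention(queries, keys, values):
    """Dense causal attention: position t attends to every position <= t."""
    outputs, score_count = [], 0
    scale = 1.0 / math.sqrt(len(queries[0]))
    dim = len(values[0])
    for t, q in enumerate(queries):
        # One score per visible position: t + 1 dot products for position t.
        scores = [scale * sum(a * b for a, b in zip(q, keys[j])) for j in range(t + 1)]
        score_count += len(scores)
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        outputs.append([
            sum(exps[j] / total * values[j][d] for j in range(t + 1))
            for d in range(dim)
        ])
    return outputs, score_count

def dense_score_count(seq_len):
    """Number of attention scores a causal dense layer computes per head."""
    return seq_len * (seq_len + 1) // 2

x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, n_scores = causal_self_attention(x, x, x)
print(n_scores, dense_score_count(2048), dense_score_count(4096))
```

Doubling the sequence length roughly quadruples the number of scores, which is why dense attention becomes expensive on long inputs.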

Key Advantages:

- Predictable output distribution

- Easier fine-tuning process

- Strong general-purpose language modeling

- Stable training behavior

Conceptually:

It behaves like a single unified neural processor handling every task equally.

Architectural Summary

| Feature | DeepSeek V3.2 Exp | Llama 1 |
| --- | --- | --- |
| Attention Type | Sparse Attention | Dense Attention |
| Parameter Usage | Partial activation | Full activation |
| Efficiency | High | Moderate |
| Scalability | Very high | Limited |
| NLP Specialization | Expert-based routing | General-purpose |
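
The "Parameter Usage" row can be turned into a rough compute estimate. The sketch below uses the common rule of thumb that a forward pass costs about 2 FLOPs per active parameter per token, which ignores attention cost. The 65B figure is the largest Llama 1 size mentioned earlier; the MoE numbers are illustrative placeholders, not published specifications.

```python
def forward_flops_per_token(active_params):
    """Rule-of-thumb estimate: about 2 FLOPs per active parameter per token."""
    return 2 * active_params

def active_fraction(total_params, active_params):
    """Share of the model's parameters that run for a single token."""
    return active_params / total_params

# Dense model: every parameter is active for every token.
dense_total = 65e9
dense_flops = forward_flops_per_token(dense_total)

# Sparse MoE model: placeholder sizes chosen only for illustration.
moe_total = 600e9
moe_active = 40e9
moe_flops = forward_flops_per_token(moe_active)

print(f"dense: {dense_flops:.2e} FLOPs/token, fraction active = 1.00")
print(f"MoE:   {moe_flops:.2e} FLOPs/token, "
      f"fraction active = {active_fraction(moe_total, moe_active):.2f}")
```

Under these assumed sizes, the sparse model holds far more parameters than the dense one yet spends fewer FLOPs per token, because compute tracks active parameters rather than total parameters.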

Performance Analysis

Performance in NLP is not just accuracy; it also includes reasoning depth, token efficiency, and contextual coherence.

DeepSeek V3.2 Exp Performance

DeepSeek demonstrates strong capabilities in:

- Multi-hop reasoning chains

- Code synthesis tasks

- Structured document understanding

- Scientific question answering

Semantic Strength:

It maintains long-range dependencies effectively, allowing it to understand:

- Multi-paragraph narratives

- Technical documentation

- Dataset-level reasoning

Insight:

It performs well at compressing and expanding content: it can summarize a long passage or elaborate on a short one with little information loss.

Llama 1 Performance

Llama 1 performs well in foundational NLP tasks such as:

- Sentence completion

- Basic question answering

- Short-form summarization

- Lightweight conversational AI

Semantic Strength:

It excels in:

- Stable token prediction

- Predictable linguistic patterns

- Basic language modeling tasks

Insight:

However, it struggles with long-context semantic retention and multi-step reasoning chains.

Benchmark Interpretation

| Benchmark | NLP Meaning |
| --- | --- |
| MMLU | Multi-domain reasoning ability |
| GPQA | Scientific and logical reasoning |
| HumanEval | Code generation intelligence |
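
HumanEval results are usually reported as pass@k: the probability that at least one of k sampled programs passes the unit tests. A minimal sketch of the standard unbiased estimator follows. The function name `pass_at_k` and the sample counts are illustrative and are not scores for either model.

```python
from math import comb

def pass_at_k(n, c, k):
    """Unbiased pass@k estimate from n samples, c of which pass the tests.

    Computes 1 - C(n - c, k) / C(n, k): one minus the chance that a random
    draw of k samples contains no passing program.
    """
    if not 0 <= c <= n:
        raise ValueError("c must satisfy 0 <= c <= n")
    if not 1 <= k <= n:
        raise ValueError("k must satisfy 1 <= k <= n")
    if n - c < k:
        # Fewer than k failures exist, so any draw of k includes a pass.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)

# Illustrative counts: 10 samples for one problem, 3 of them pass.
print(pass_at_k(10, 3, 1))
print(pass_at_k(10, 3, 5))
```

A benchmark score is then the average of this value over all problems in the set.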

Conclusion:

DeepSeek V3.2 Exp demonstrates significantly stronger semantic generalization and reasoning depth compared to Llama 1.

Context Window & Memory Behavior

Context window size is critical in NLP systems because it defines how much semantic information a model can retain.

DeepSeek V3.2 Exp Context Capability

- Very large token window (on the order of 128K tokens) for long-form reasoning

- Supports extended document ingestion

- Maintains coherence across large textual structures

Impact:

- Enables document-level understanding

- Supports multi-document summarization

- Useful for knowledge retrieval systems (RAG pipelines)

Llama 1 Context Capability

- Limited token window (2,048 tokens)

- Struggles with extended document processing

- Loses semantic coherence in long sequences

Impact:

- Suitable only for short conversational tasks

- Cannot maintain global document semantics

- Weak in long-range dependency modeling

Key Insight

DeepSeek functions as a long-context semantic processor, while Llama 1 is a short-context language predictor.
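
When a document does not fit in a short window, a common workaround is to split it into overlapping chunks and process each chunk separately. The sketch below assumes a 2,048-token window and uses whitespace splitting as a stand-in for a real tokenizer; the function name `chunk_tokens` and the overlap size are arbitrary choices for illustration.

```python
def chunk_tokens(tokens, window, overlap):
    """Split tokens into chunks of at most `window`, sharing `overlap` tokens."""
    if window <= 0 or not 0 <= overlap < window:
        raise ValueError("need window > 0 and 0 <= overlap < window")
    step = window - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + window])
        if start + window >= len(tokens):
            break  # this chunk already reaches the end of the document
    return chunks

# Stand-in document of 5,000 whitespace-separated "tokens".
document = " ".join(f"w{i}" for i in range(5000))
tokens = document.split()

chunks = chunk_tokens(tokens, window=2048, overlap=128)
print(len(chunks), [len(c) for c in chunks])
```

The overlap keeps some shared text across chunk boundaries, but no single call ever sees the whole document. A long-context model avoids this limitation by ingesting the full input at once.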

Cost Efficiency

DeepSeek V3.2 Exp Cost Model

- API-based pricing

- Optimized via sparse activation

- Reduced computation per token

Cost Insight:

Despite its size and capability, it is optimized for:

- Large-scale API usage

- Enterprise NLP pipelines

- High-volume inference systems

Llama 1 Cost Model

- Openly distributed weights (released under a non-commercial research license)

- No API dependency

- Requires local hardware resources

Cost Insight:

- No per-token usage fees

- Infrastructure cost depends on the deployment

- High cost at scale, because every parameter runs for every token

Cost Summary

| Use Case | Best Model |
| --- | --- |
| Small NLP experiments | Llama 1 |
| Enterprise NLP systems | DeepSeek V3.2 Exp |
| High-scale APIs | DeepSeek V3.2 Exp |
| Offline NLP research | Llama 1 |
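
A back-of-the-envelope comparison shows how the two cost models relate. Every number below is a hypothetical placeholder (price per million tokens, GPU hourly rate, throughput), not a quote from any provider, and the helper names are invented for this sketch.

```python
def api_cost(tokens, price_per_million_tokens):
    """Cost of processing `tokens` through a pay-per-token API."""
    return tokens / 1_000_000 * price_per_million_tokens

def self_hosted_cost(tokens, tokens_per_second, gpu_price_per_hour):
    """Cost of processing `tokens` on rented hardware at a steady throughput.

    Assumes the GPU is fully utilized; idle time would raise the real cost.
    """
    hours = tokens / tokens_per_second / 3600
    return hours * gpu_price_per_hour

monthly_tokens = 500_000_000  # hypothetical workload

api = api_cost(monthly_tokens, price_per_million_tokens=0.50)
local = self_hosted_cost(monthly_tokens, tokens_per_second=1000,
                         gpu_price_per_hour=2.00)

print(f"API:         ${api:,.2f} per month")
print(f"Self-hosted: ${local:,.2f} per month")
```

The break-even point shifts with throughput and utilization, so the table above is a starting point rather than a rule.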

Real-World NLP Use Cases

DeepSeek V3.2 Exp Applications

- AI agents with reasoning capabilities

- Enterprise document understanding systems

- Long-context summarization engines

- Code generation assistants

Llama 1 Applications

- Educational NLP systems

- Research experimentation

- Lightweight chatbots

- Fine-tuning experiments

Regional Adoption Trends

Different regions adopt models based on privacy, cost, and deployment needs.

- Germany → local systems preferred

- UK → API-based AI integration

- France → hybrid architectures

- Switzerland → privacy-first AI models

FAQs

Q: Is DeepSeek V3.2 Exp better than Llama 1?

A: Yes. In NLP reasoning, long-context understanding, and semantic generalization, DeepSeek V3.2 Exp significantly outperforms Llama 1.

Q: Is Llama 1 still suitable for production use?

A: Not fully. Llama 1 is mainly used as a foundational NLP model for learning and experimentation rather than production-grade intelligence systems.

Q: Which model is cheaper?

A: Llama 1 has no usage fees for its weights, but DeepSeek becomes more cost-efficient at scale due to its optimized inference architecture.

Q: Is DeepSeek V3.2 Exp a good fit for startups?

A: Yes. It is widely used in NLP-powered startups for automation, chatbots, and enterprise intelligence systems.

Q: Which model is better for offline use?

A: Llama 1, because it can be deployed entirely offline without an external API dependency.

DeepSeek V3.2 Exp vs Llama 1

From a 2026 NLP engineering perspective:

DeepSeek V3.2 Exp

Best for:

- Large-scale semantic reasoning

- Enterprise NLP systems

- AI automation pipelines

- Long-context document intelligence

Llama 1

Best for:

- NLP education and learning

- Offline experimentation

- Lightweight language modeling

- Research prototyping

Conclusion

The comparison between DeepSeek V3.2 Exp and Llama 1 clearly highlights the evolution of NLP systems from early-stage transformer models to advanced sparse-expert architectures.

Llama 1 remains an important milestone in NLP history. It introduced accessible large language modeling and enabled widespread experimentation in natural language processing. However, its limitations in context length, reasoning depth, and computational efficiency make it less suitable for modern AI workloads.

In contrast, DeepSeek V3.2 Exp represents the next generation of NLP intelligence systems. With its mixture-of-experts architecture, sparse activation, and extended context handling, it is designed for real-world applications where scalability, reasoning accuracy, and efficiency are critical.