Introduction

The artificial intelligence ecosystem in 2026 has matured beyond simplistic comparisons such as “bigger model equals better performance.” The focus has shifted toward computational efficiency, inference latency, token economics, contextual reasoning depth, and real-world deployment scalability.

Modern AI systems are now evaluated through multidimensional metrics such as:

- Token efficiency per query

- Latency per inference cycle

- Semantic coherence in generative outputs

- Context window utilization efficiency

- API cost per 1M tokens

- Reasoning depth vs computational overhead
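Two of the metrics listed above, latency per inference cycle and token throughput, can be measured directly around any model call. The following is a minimal sketch, not a benchmark harness: `fake_model` is a hypothetical stand-in for a real API client, and the assumption that a call returns a list of output tokens is made only for illustration.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class InferenceMetrics:
    latency_s: float          # wall-clock time for one call
    output_tokens: int        # number of tokens the call produced
    tokens_per_second: float  # output_tokens divided by latency_s


def measure(call: Callable[[str], list[str]], prompt: str) -> InferenceMetrics:
    """Time a single call and derive throughput from the tokens it returns."""
    start = time.perf_counter()
    tokens = call(prompt)  # assumption: the client returns a list of output tokens
    latency = time.perf_counter() - start
    tps = len(tokens) / latency if latency > 0 else float("inf")
    return InferenceMetrics(latency, len(tokens), tps)


def fake_model(prompt: str) -> list[str]:
    """Hypothetical stand-in for a real API call; echoes whitespace-split words."""
    time.sleep(0.01)  # simulate network and inference time
    return prompt.split()


metrics = measure(fake_model, "summarize this support ticket in one line")
print(metrics)
```

In practice a single measurement is noisy, so averaging over many calls (and reporting percentiles rather than a mean) gives a more honest picture.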

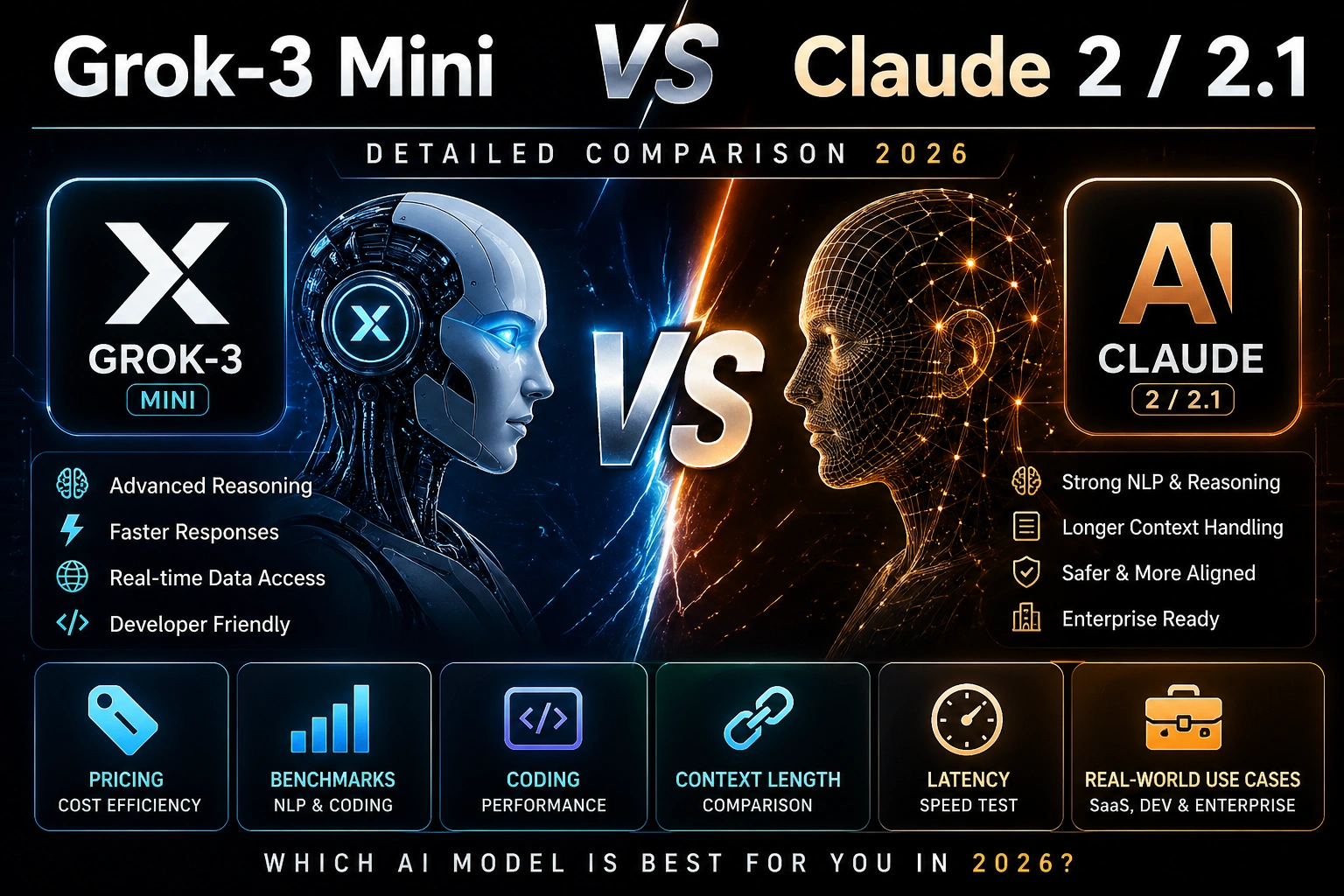

Within this advanced evaluation framework, two models stand out in different performance tiers:

- Grok-3 Mini → A lightweight, high-efficiency transformer optimized for scalable production workloads

- Claude 2 / Claude 2.1 → A large-context, reasoning-heavy generative model designed for structured intelligence and long-form NLP tasks

This article provides a deep semantic, technical, and applied NLP-driven comparison for developers, AI engineers, SaaS founders, and enterprise decision-makers across global markets, including Europe and North America.

We will analyze:

- Architecture-level behavioral differences

- NLP performance benchmarks

- Tokenization efficiency and inference cost

- Context window utilization

- Coding intelligence and reasoning stability

- Real-world SaaS deployment scenarios

- Cost-performance optimization strategies

Let’s begin with a foundational understanding.

What is Grok-3 Mini?

Grok-3 Mini is a compact transformer-based large language model (LLM) engineered for high-throughput inference, low-latency response generation, and cost-optimized API scaling.

Unlike traditional large-scale models that prioritize parameter magnitude, Grok-3 Mini focuses on:

- Efficient token compression

- Reduced computational graph complexity

- Optimized attention mechanisms

- Fast forward-pass inference cycles

Core Functional Objective

Grok-3 Mini is primarily designed for:

- Real-time conversational AI systems

- High-frequency API workloads

- Embedded SaaS automation pipelines

- Developer tooling and copilots

- Lightweight NLP inference engines

Strength Profile

From a natural language processing perspective, Grok-3 Mini demonstrates:

- High token throughput efficiency

- Strong contextual embedding alignment

- Fast semantic vector interpretation

- Reduced hallucination under constrained prompts

It is optimized for “semantic sufficiency” rather than exhaustive generative elaboration.

Key Strengths

- Ultra-low inference latency (optimized transformer pruning)

- High tokens-per-second throughput

- Cost-efficient API consumption model

- Strong performance in structured NLP tasks

- Effective for real-time chat and automation pipelines

Design Philosophy of “Mini”

The term “Mini” can be misleading. It does NOT imply reduced intelligence; instead, it indicates:

A compressed, compute-efficient transformer optimized for a maximum intelligence-per-FLOP ratio.

This design philosophy is crucial in modern AI engineering, where:

- GPU cost reduction

- serverless scaling

- distributed inference systems

are dominant constraints.

What is Claude 2 / Claude 2.1?

Claude 2 and Claude 2.1 belong to a class of large-context transformer architectures optimized for deep semantic reasoning and extended document comprehension.

They are designed for:

- Long-form content generation

- Multi-document summarization

- Legal and academic reasoning

- Structured conversational intelligence

- High-safety aligned NLP outputs

Architecture Strengths

Claude models excel in:

- Deep contextual attention spanning long token sequences

- Stable syntactic coherence across paragraphs

- Reduced semantic drift in extended outputs

- Strong alignment through reinforcement learning from human feedback (RLHF)

Context Window Advantage

One of Claude’s defining features is its extremely large context window, enabling:

- Multi-document ingestion

- Long research paper analysis

- Codebase-level comprehension

- Extended dialogue memory retention

Limitations in the 2026 AI Ecosystem

Despite these strengths, Claude 2 / 2.1 faces constraints:

- Higher inference latency

- Increased token cost per request

- Less optimized for high-frequency API workloads

- Computational inefficiency in real-time applications

Grok-3 Mini vs Claude 2 / 2.1: Head-to-Head Analysis

Performance & Latency Metrics

| Feature | Grok-3 Mini | Claude 2 / 2.1 |
| --- | --- | --- |
| Inference Speed | ⚡ Ultra-fast | Moderate |
| Latency | Very low | Higher |
| NLP Responsiveness | High | High but slower |
| Throughput | Optimized | Heavy |

Interpretation

Grok-3 Mini is engineered for low-latency semantic decoding, while Claude prioritizes deep contextual reasoning chains.

Cost Efficiency & Token Economics

AI pricing is now heavily influenced by tokenization efficiency and compute scaling.

Claude 2 / 2.1

- High token cost per inference

- Expensive for large-scale deployment

- Inefficient for real-time SaaS APIs

Grok-3 Mini

- Low-cost token processing

- Optimized API economics

- Designed for large-scale concurrency

Key Insight

Grok-3 Mini delivers a superior cost-per-intelligence ratio (CPIR), used here as an informal shorthand for useful output per dollar spent.
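Token economics reduce to simple arithmetic once input and output prices per 1M tokens are known. The sketch below shows that arithmetic. The two price tiers are placeholder numbers invented for illustration, not the published prices of either model, so substitute current vendor pricing before drawing conclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # USD per 1M input tokens (placeholder value)
    output_per_million: float  # USD per 1M output tokens (placeholder value)


def request_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request, given its token counts."""
    total = (input_tokens * pricing.input_per_million
             + output_tokens * pricing.output_per_million)
    return total / 1_000_000


def monthly_cost(pricing: Pricing, input_tokens: int, output_tokens: int,
                 requests_per_day: int, days: int = 30) -> float:
    """Projected monthly spend for a steady workload of identical requests."""
    return request_cost(pricing, input_tokens, output_tokens) * requests_per_day * days


# Hypothetical tiers: these are NOT real prices for any model.
fast_tier = Pricing(input_per_million=1.0, output_per_million=2.0)
deep_tier = Pricing(input_per_million=8.0, output_per_million=24.0)

for name, tier in [("fast tier", fast_tier), ("deep tier", deep_tier)]:
    spend = monthly_cost(tier, input_tokens=1_000, output_tokens=500,
                         requests_per_day=10_000)
    print(f"{name}: ${spend:,.2f} per month")
```

Output tokens are usually priced higher than input tokens, which is why the two are tracked separately rather than summed.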

Benchmark Interpretation

| Benchmark | Meaning | Real NLP Interpretation |
| --- | --- | --- |
| MMLU | Knowledge reasoning | Conceptual generalization |
| MATH | Logical reasoning | Symbolic inference capability |
| HumanEval | Code generation | Program synthesis ability |

Semantic Insight

- Grok-3 Mini → optimized for efficient reasoning pathways

- Claude 2 → optimized for deep hierarchical reasoning structures

Context Window & Memory Architecture

| Model | Context Length | NLP Impact |
| --- | --- | --- |
| Claude 2 | ~100K tokens | Strong long-document reasoning |
| Claude 2.1 | ~200K tokens | Extended contextual memory |
| Grok-3 Mini | Smaller optimized window | Fast contextual refresh cycles |

Interpretation

Claude dominates in long-range dependency modeling, while Grok excels in short-to-mid context dynamic reasoning loops.
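Whether a document benefits from a large window comes down to a token budget check. The sketch below uses the approximate window sizes from the table above. The four-characters-per-token ratio is a rough heuristic assumed here for English text, and the dictionary keys are display labels, not API model identifiers. A real pipeline should count tokens with the vendor's own tokenizer.

```python
# Approximate context windows, taken from the table above (in tokens).
CONTEXT_WINDOWS = {"Claude 2": 100_000, "Claude 2.1": 200_000}

CHARS_PER_TOKEN = 4  # assumption: rough heuristic for English text


def estimate_tokens(text: str) -> int:
    """Estimate the token count using ceiling division on character length."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits(text: str, window: int, reserved_output: int = 1_000) -> bool:
    """True if the text plus a reserved output budget fits in the window."""
    return estimate_tokens(text) + reserved_output <= window


def split_to_fit(text: str, window: int, reserved_output: int = 1_000) -> list[str]:
    """Split text into consecutive chunks that each fit in the window."""
    budget_chars = (window - reserved_output) * CHARS_PER_TOKEN
    if budget_chars <= 0:
        raise ValueError("window is too small for the reserved output budget")
    return [text[i:i + budget_chars] for i in range(0, len(text), budget_chars)]


document = "x" * 600_000  # roughly 150K tokens under the heuristic above
for label, window in CONTEXT_WINDOWS.items():
    chunks = split_to_fit(document, window)
    print(f"{label}: fits={fits(document, window)}, chunks needed={len(chunks)}")
```

Splitting on raw character offsets can cut sentences in half, so a production chunker would normally split on paragraph or section boundaries instead.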

Speed vs Semantic Depth Tradeoff

- Grok-3 Mini → optimized for real-time semantic inference

- Claude → optimized for deep linguistic construction

This creates a classical NLP tradeoff:

Speed (Grok) vs Depth (Claude)

Coding Intelligence

Grok-3 Mini

- Fast code synthesis

- Efficient debugging loops

- Strong iterative refinement

Claude 2

- More structured code explanations

- Better documentation generation

- Higher semantic clarity in complex logic

Verdict

- Developers → prefer Grok for iteration speed

- Educators → prefer Claude for explanation clarity

Use Case Optimization Matrix

| Use Case | Best Model |
| --- | --- |
| SaaS API Systems | Grok-3 Mini |
| Real-time Chatbots | Grok-3 Mini |
| Research Writing | Claude 2.1 |
| Legal Document Analysis | Claude 2.1 |
| High-volume automation | Grok-3 Mini |
| Academic summarization | Claude 2.1 |

Insight: Efficiency vs Depth Paradigm

At the architectural level, both models represent distinct NLP philosophies:

Grok-3 Mini

- Compression-centric transformer

- High-efficiency token decoding

- Real-time adaptive inference

Claude 2 / 2.1

- Expansion-centric transformer

- Deep semantic layering

- Context-heavy reasoning pipeline

🇪🇺 Europe AI Market Perspective (2026)

European AI adoption is influenced by:

- GDPR compliance constraints

- Energy-efficient compute demand

- SaaS scalability requirements

- Multilingual NLP processing (EN/FR/DE/ES/IT)

Why Grok-3 Mini is growing in Europe

- Lower operational cost

- Faster API response times

- Better scalability for startups

- Efficient multilingual token handling

Why Claude remains relevant

- Strong enterprise adoption

- Superior legal and academic NLP performance

- Trusted long-document reasoning

Pricing & Value Optimization Table

| Category | Grok-3 Mini | Claude 2 / 2.1 |
| --- | --- | --- |
| Cost Efficiency | ⭐ Very High | Medium |
| Latency | Very Low | Medium-High |
| Scalability | Excellent | Moderate |
| Enterprise Use | Strong | Strong |
| Startup Suitability | ⭐ Ideal | Limited |

Pros & Cons

Grok-3 Mini Advantages

- High throughput token processing

- Low-latency semantic inference

- Cost-efficient scaling architecture

- Strong API concurrency handling

Limitations

- Limited extended context memory

- Reduced long-form elaboration depth

Claude 2 / 2.1 Advantages

- Superior long-context reasoning

- High-quality generative coherence

- Strong document-level comprehension

Limitations

- Higher computational cost

- Slower inference cycles

- Less optimized for real-time APIs

How to Choose the Right Model

Choose Grok-3 Mini if:

- You build SaaS platforms

- You need real-time AI responses

- You prioritize cost optimization

- You handle high API traffic

Choose Claude 2 / 2.1 if:

- You process long documents

- You need academic-level writing

- You prioritize reasoning depth
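The two checklists above can be expressed as a small decision helper. This is only one possible encoding: the field names are hypothetical, and scoring each side by the fraction of its criteria that apply is an assumption made for illustration, not a rule stated in this article.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    # Criteria from the Grok-3 Mini checklist
    builds_saas: bool = False
    needs_realtime: bool = False
    cost_sensitive: bool = False
    high_api_traffic: bool = False
    # Criteria from the Claude 2 / 2.1 checklist
    long_documents: bool = False
    academic_writing: bool = False
    deep_reasoning: bool = False


def recommend(w: Workload) -> str:
    """Compare the fraction of matched criteria on each side."""
    speed = [w.builds_saas, w.needs_realtime, w.cost_sensitive, w.high_api_traffic]
    depth = [w.long_documents, w.academic_writing, w.deep_reasoning]
    speed_score = sum(speed) / len(speed)
    depth_score = sum(depth) / len(depth)
    if speed_score > depth_score:
        return "Grok-3 Mini"
    if depth_score > speed_score:
        return "Claude 2 / 2.1"
    return "either (consider a hybrid pipeline)"


print(recommend(Workload(needs_realtime=True, high_api_traffic=True)))
print(recommend(Workload(long_documents=True, deep_reasoning=True)))
```

Equal weighting is the simplest choice. A team with a hard latency budget or a strict compliance requirement would likely treat that criterion as a veto rather than one vote among several.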

Hybrid NLP Strategy

Advanced teams often use dual-model pipelines:

- Grok-3 Mini → real-time inference layer

- Claude 2 → content generation & refinement layer

This hybrid architecture improves:

- latency optimization

- cost efficiency

- output quality balance
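A dual-model pipeline of this kind might be wired together as follows. The sketch takes the two models as plain callables so it stays vendor-neutral: `fast_stub` and `deep_stub` are hypothetical placeholders for real API clients, and routing on prompt length is an assumed heuristic chosen only to keep the example short.

```python
from typing import Callable

ModelFn = Callable[[str], str]


def make_pipeline(fast: ModelFn, deep: ModelFn,
                  long_form_threshold: int = 2_000) -> ModelFn:
    """Build a pipeline: the fast model always drafts, the deep model refines long requests."""

    def run(prompt: str) -> str:
        draft = fast(prompt)  # real-time inference layer
        if len(prompt) < long_form_threshold:
            return draft      # short request: the fast answer is returned as-is
        # Long request: hand the draft to the refinement layer.
        return deep(
            f"Refine and expand this draft:\n{draft}\n\nOriginal request:\n{prompt}"
        )

    return run


def fast_stub(prompt: str) -> str:
    """Hypothetical stand-in for the low-latency model."""
    return f"[fast] {prompt[:20]}"


def deep_stub(prompt: str) -> str:
    """Hypothetical stand-in for the long-context model."""
    return f"[deep] refined {len(prompt)} chars"


pipeline = make_pipeline(fast_stub, deep_stub)
print(pipeline("hi"))
print(pipeline("x" * 3_000))
```

Because only long requests reach the slower model, most traffic keeps the fast model's latency and cost profile. Other routing signals, such as an explicit task type or a classifier score, would slot into the same structure.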

Optimization Tips for Better AI Output

- Use structured prompts with explicit intent framing

- Apply role-based conditioning

- Reduce ambiguity in input tokens

- Segment tasks into modular inference steps

- Use deterministic output formatting (JSON, schema-based prompts)
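Several of these tips combine naturally: a role, an explicit task, and a required JSON shape in the prompt, followed by validation of whatever comes back. The sketch below assumes a hypothetical sentiment task with a two-field schema. It uses only the standard library and does not depend on any vendor's structured-output feature.

```python
import json

# Hypothetical output schema for an illustrative sentiment task.
SCHEMA = {"sentiment": "string", "confidence": "number"}
PY_TYPES = {"string": str, "number": (int, float)}


def build_prompt(role: str, task: str, text: str) -> str:
    """Assemble a prompt with role conditioning, explicit intent, and an output shape."""
    return (
        f"You are {role}.\n"
        f"Task: {task}\n"
        f"Input: {text}\n"
        f"Respond with JSON only, matching this shape: {json.dumps(SCHEMA)}"
    )


def parse_response(raw: str) -> dict:
    """Parse the model's reply and check that it matches SCHEMA."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, kind in SCHEMA.items():
        if key not in data:
            raise ValueError(f"missing key: {key}")
        if not isinstance(data[key], PY_TYPES[kind]):
            raise ValueError(f"wrong type for key: {key}")
    return data


prompt = build_prompt(
    role="a support-ticket classifier",
    task="Classify the sentiment of the input.",
    text="The new dashboard is great.",
)
print(prompt)
print(parse_response('{"sentiment": "positive", "confidence": 0.9}'))
```

Rejecting malformed replies at this boundary lets the caller retry or fall back, instead of passing free-form text deeper into an automation pipeline.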

FAQs

Q: Which model is better, Grok-3 Mini or Claude 2?

A: It depends on the use case. Grok-3 Mini is superior in latency, cost efficiency, and scalability, while Claude 2 excels in deep reasoning and long-form NLP generation.

Q: Which model is more cost-efficient?

A: Grok-3 Mini is significantly more cost-efficient due to optimized token processing and reduced compute overhead.

Q: Is Claude 2 / 2.1 still relevant in 2026?

A: Yes. It remains highly relevant for academic writing, legal analysis, and structured long-form reasoning tasks.

Q: Which model is better for coding?

A: Grok-3 Mini offers faster iterative coding, while Claude 2 provides better explanations and more structured logic.

Q: Which model should startups choose?

A: Most startups prefer Grok-3 Mini due to scalability, low cost, and real-time performance efficiency.

Conclusion

The comparison between Grok-3 Mini and Claude 2 / 2.1 reflects a broader transformation in NLP model design philosophy.

We are witnessing a shift:

- From: large monolithic reasoning systems

- To: efficient, distributed, inference-optimized architectures

Final Insight:

- Grok-3 Mini = Efficiency-First NLP System

- Claude 2 = Depth-First Semantic Reasoning System

The optimal strategy in 2026 is not exclusivity, but architectural combination and hybrid deployment strategies that leverage both speed and intelligence simultaneously.