Introduction

Artificial Intelligence in 2026 is no longer a simple computational assistant technology. It has evolved into a multi-layered cognitive ecosystem where models are designed not just for answering queries, but for:

- Semantic reasoning

- Natural language understanding

- Natural language generation

- Contextual memory retention

- Real-time adaptive inference

- Domain-specific intelligence processing

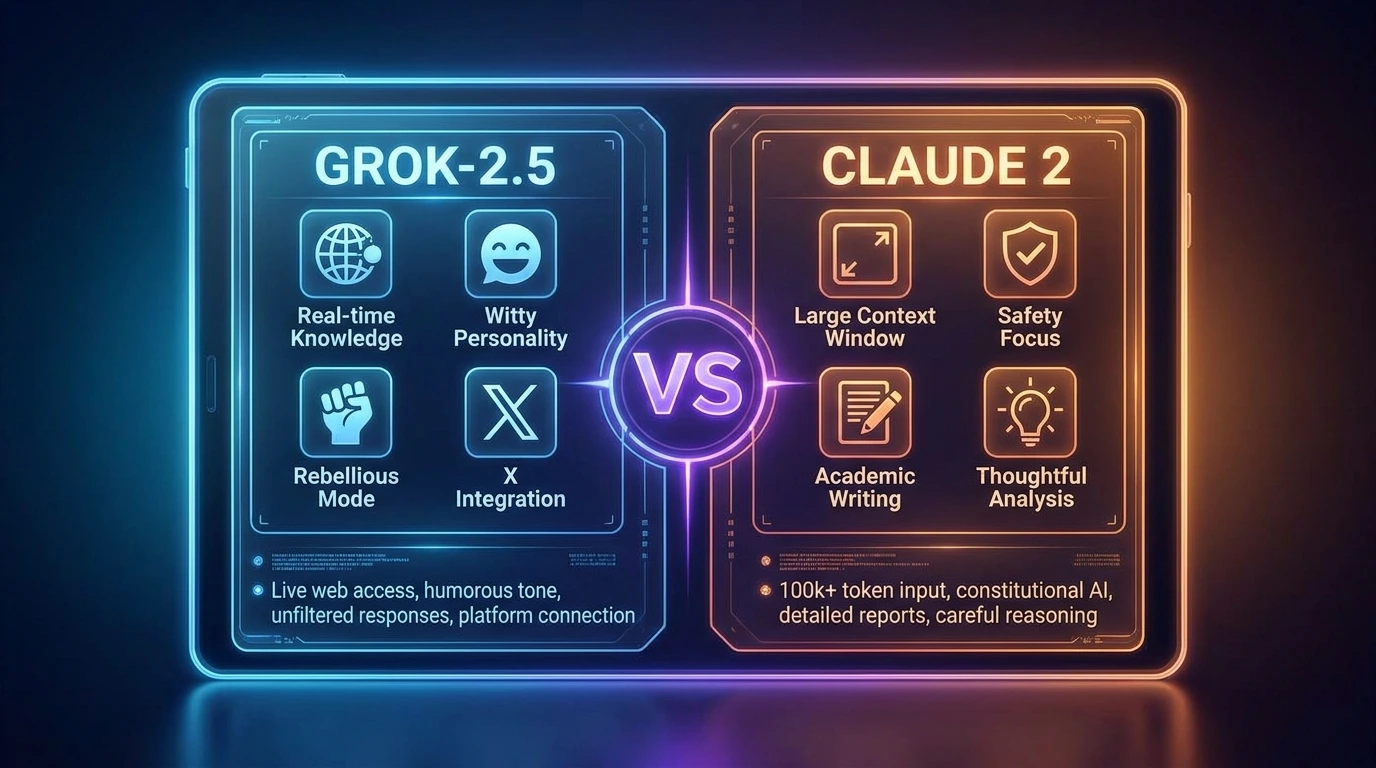

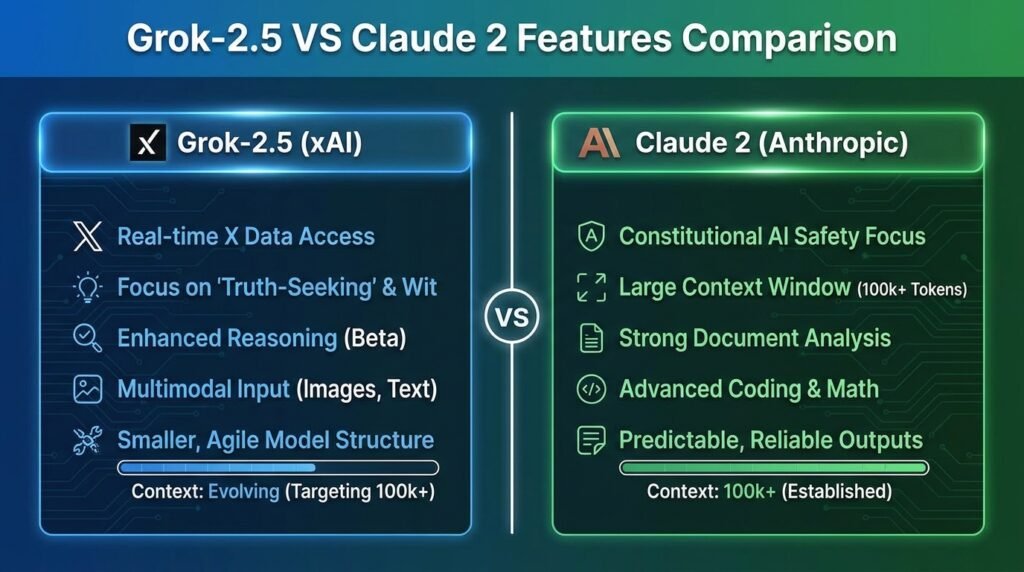

Within this evolving AI ecosystem, two advanced systems stand out for their contrasting design philosophies:

- Grok-2.5 → Real-time adaptive intelligence engine

- Claude 2 → Structured reasoning and safety-first analytical AI

These systems are not merely tools; they represent two different paradigms of machine cognition:

- One prioritizes speed, adaptability, and live data interpretation

- The other prioritizes accuracy, structured reasoning, and alignment with safety

This article provides a deep NLP-based comparative analysis of both systems, focusing on architecture, semantic intelligence, contextual processing, real-world applications, and enterprise adoption.

Core Philosophy Behind Both Models

Grok-2.5: Dynamic Semantic Adaptation Model

From a natural language processing perspective, Grok-2.5 is optimized for:

- Real-time token interpretation

- Adaptive contextual embedding

- High-speed semantic parsing

- Streaming data comprehension

Key Characteristics:

- Context window dynamically optimized

- Real-time lexical updating

- Fast attention mechanism execution

In simple NLP terms:

Grok-2.5 behaves like a live semantic interpreter of global information flow.

It recomputes its contextual representations for every new input and, when connected to live data sources, can work with current information, making it highly effective in environments where language input changes rapidly.

Claude 2: Structured Semantic Reasoning Model

Claude 2, from an NLP perspective, is built around:

- Deep contextual embedding stability

- Hierarchical reasoning layers

- Controlled token generation

- Long-document semantic retention

Key NLP Characteristics:

- Stable transformer-based architecture

- Alignment reinforced during training (RLHF and Constitutional AI)

- High coherence in long-form output

- Strong discourse-level understanding

In simple NLP terms:

Claude 2 functions as a deep reasoning language model optimized for structured comprehension and safe generation.

Architecture Comparison

Grok-2.5 Architecture

Grok-2.5 uses a Mixture-of-Experts (MoE) approach combined with real-time inference optimization.

Architecture Components:

- Sparse expert activation for each input token

- Dynamic routing of each token to a small subset of experts

- Token-by-token (streaming) output

- Low-latency decoding engine

Impact:

This architecture allows:

- Faster token generation

- Lower compute per token than a dense model with the same total parameter count

- Context-dependent expert selection during inference (the model's weights stay fixed)

Essentially, Grok-2.5 behaves like a context-sensitive probabilistic language engine.
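To make the sparse-activation idea concrete, here is a minimal sketch of a generic top-k Mixture-of-Experts layer in plain Python. It is not Grok-2.5's implementation: the linear router, the four toy experts, the router weights and k=2 are all assumptions chosen for illustration. Each token is scored against every expert, only the k highest-scoring experts run, and their outputs are mixed with softmax gate weights renormalized over the selected experts.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]

def softmax(xs: Sequence[float]) -> Vector:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_layer(token: Vector,
              experts: Sequence[Callable[[Vector], Vector]],
              router_weights: Sequence[Vector],
              k: int = 2) -> Tuple[Vector, List[int]]:
    """Route one token to its top-k experts and mix their outputs."""
    # Linear router: one score per expert (dot product with that expert's weights)
    scores = [sum(t * w for t, w in zip(token, wv)) for wv in router_weights]
    # Sparse activation: keep only the k highest-scoring experts
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # Gate weights are renormalized over the chosen experts only
    gates = softmax([scores[i] for i in chosen])
    out = [0.0] * len(token)
    for gate, i in zip(gates, chosen):
        y = experts[i](token)  # only the chosen experts are evaluated
        out = [o + gate * v for o, v in zip(out, y)]
    return out, chosen

# Toy experts (hypothetical): each one just scales the input vector.
experts = [lambda v, s=s: [s * x for x in v] for s in (1.0, 2.0, 3.0, 4.0)]
router_weights = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, -1.0]]
out, chosen = moe_layer([0.5, 0.2], experts, router_weights, k=2)
print(chosen)                     # [2, 0]
print([round(x, 4) for x in out])  # [1.0498, 0.4199]
```

Here the router selects experts 2 and 0 for the token; experts 1 and 3 never execute, which is where the compute savings of sparse activation come from.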

Claude 2 Architecture

Claude 2 is built on a constrained transformer-based system with enhanced alignment layers.

Architecture Components:

- Hierarchical attention layers

- Safety constraints instilled through Constitutional AI training

- Long-range dependency tracking

- Reinforced semantic alignment modules

Impact:

This leads to:

- Highly stable text generation

- Reduced hallucination probability

- Strong logical consistency across long outputs

Claude 2 behaves like a formal semantic reasoning engine with controlled generation pathways.
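Claude 2's internal layer design is not publicly documented in detail, so the sketch below does not depict its actual architecture. It only illustrates the general mechanism behind long-range dependency tracking in transformer models: causal scaled dot-product self-attention, where each position can draw on any earlier position regardless of distance. The single head, tiny vectors and example inputs are assumptions for illustration.

```python
import math
from typing import List, Tuple

Vector = List[float]

def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def causal_attention(queries: List[Vector], keys: List[Vector],
                     values: List[Vector]) -> Tuple[List[Vector], List[List[float]]]:
    """Single-head scaled dot-product attention with a causal mask."""
    d = len(queries[0])
    outputs, weights_per_pos = [], []
    for i, q in enumerate(queries):
        # Causal mask: position i scores only positions 0..i, however far back
        scores = [sum(a * b for a, b in zip(q, keys[j])) / math.sqrt(d)
                  for j in range(i + 1)]
        weights = softmax(scores)
        # Output is the attention-weighted average of the visible value vectors
        out = [sum(w * values[j][c] for j, w in enumerate(weights))
               for c in range(len(values[0]))]
        outputs.append(out)
        weights_per_pos.append(weights)
    return outputs, weights_per_pos

# Hypothetical 5-token example: the last query matches the key at position 0.
keys = [[4.0, 0.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0]]
queries = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0], [4.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0]]
outputs, weights = causal_attention(queries, keys, values)
print([round(w, 3) for w in weights[-1]])  # [1.0, 0.0, 0.0, 0.0, 0.0]
```

The last position puts almost all of its attention weight on position 0, four steps back, because their query and key vectors align; distance itself carries no penalty.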

Feature Comparison

| Feature | Grok-2.5 | Claude 2 🧠 |
|---|---|---|
| Semantic Speed | Very High | Medium |
| Context Stability | Medium | Very High |
| Real-Time Language Adaptation | Yes | No |
| Long-Form Coherence | Medium | Very High |
| Safety Filtering | Moderate | Strong |
| Creative Language Flexibility | High | Controlled |
| Enterprise NLP Suitability | Medium | High |
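The "creative versus controlled" contrast in the table is partly a matter of decoding settings rather than the model alone: most LLM APIs expose a sampling temperature. The sketch below shows the standard mechanism, dividing next-token logits by a temperature before the softmax. The vocabulary and logit values are invented for illustration and do not reflect either model's defaults.

```python
import math
import random
from typing import List

def apply_temperature(logits: List[float], temperature: float) -> List[float]:
    """Softmax over logits / temperature: T < 1 sharpens, T > 1 flattens."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(vocab: List[str], logits: List[float], temperature: float,
                 rng: random.Random) -> str:
    """Draw one token from the temperature-adjusted distribution."""
    probs = apply_temperature(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical next-token candidates and scores.
vocab = ["the", "a", "this", "banana"]
logits = [2.0, 1.5, 1.0, -1.0]
for t in (0.2, 1.0, 1.5):
    print(t, [round(p, 3) for p in apply_temperature(logits, t)])
print(sample_token(vocab, logits, 0.7, random.Random(42)))
```

At T=0.2 most of the probability mass sits on "the"; at T=1.5 the unlikely "banana" gets a noticeably larger share, which is the trade-off between controlled and creative output.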

Coding Intelligence Comparison

Grok-2.5 Coding Behavior

From a language model perspective, Grok-2.5 excels in:

- Rapid code token prediction

- Instant syntax correction

- Lightweight debugging suggestions

- Real-time API language mapping

Strength:

- Fast lexical generation of code snippets

- Context-aware correction at statement-level granularity

Limitation:

- Limited deep structural reasoning across large codebases

Claude 2 Coding Behavior

Claude 2 operates with:

- Deep semantic code understanding

- Multi-file contextual reasoning

- Strong documentation generation capability

- High-level abstraction modeling

Strength:

- Excellent long-form code explanation

- Strong logical consistency across modules

Limitation:

- Slightly slower token generation speed

Real-World Application Scenarios

Developers

- Grok-2.5 → Fast semantic debugging assistant

- Claude 2 → Architectural reasoning assistant

Content Creators

- Grok-2.5 → Trend-based language generation

- Claude 2 → Structured long-form article generation

Enterprise Systems

- Grok-2.5 → Real-time conversational interfaces

- Claude 2 → Compliance-driven document generation

Research Applications

Claude 2 is the stronger choice here due to:

- Deep contextual embedding retention

- High discourse-level coherence

- Stable semantic reasoning chains

Feature Value Translation

| Feature | Functional Meaning | Real Impact |
|---|---|---|
| Token Speed | Response latency | User productivity |
| Context Window | Memory span | Research depth |
| Safety Layer | Output filtering | Enterprise trust |
| MoE Activation | Compute efficiency | Cost reduction |
| Semantic Depth | Reasoning accuracy | Decision quality |
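Two rows of this table reduce to simple arithmetic. The sketch below estimates response latency from output length, decoding speed and time to first token, and the share of expert-layer compute an MoE model uses when each token activates k of n equally sized experts. All numbers in the example are hypothetical, not measurements of Grok-2.5 or Claude 2.

```python
def response_latency_s(output_tokens: int, tokens_per_second: float,
                       time_to_first_token_s: float) -> float:
    """Rough end-to-end latency: wait for the first token, then decode the rest."""
    return time_to_first_token_s + output_tokens / tokens_per_second

def expert_compute_fraction(num_experts: int, k: int) -> float:
    """Fraction of expert-layer compute used per token with top-k routing.

    Assumes equally sized experts. Shared layers such as attention still run
    for every token, so the whole-model saving is smaller than this figure.
    """
    return k / num_experts

# Hypothetical scenario: a 500-token answer at two different decoding speeds.
fast = response_latency_s(500, tokens_per_second=50, time_to_first_token_s=0.5)
slow = response_latency_s(500, tokens_per_second=25, time_to_first_token_s=0.5)
print(f"fast: {fast:.1f} s, slow: {slow:.1f} s")  # fast: 10.5 s, slow: 20.5 s

# Hypothetical MoE layout: 8 experts, 2 active per token.
print(expert_compute_fraction(8, 2))  # 0.25
```

Halving decoding speed roughly doubles the wait for long answers, which is why token speed maps to user productivity in the table.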

Pros & Cons

Grok-2.5 Advantages

- High-speed lexical output generation

- Real-time semantic adaptation

- Strong contextual flexibility

- Excellent for dynamic conversational flows

Limitations:

- Lower long-context stability

- Reduced formal reasoning consistency

- Less structured discourse generation

Claude 2 Advantages

- Strong discourse coherence

- High semantic stability

- Excellent long-context retention

- Enterprise-grade linguistic safety

Limitations:

- Slower token generation speed

- Less spontaneous creativity in output

Future Evolution

The AI ecosystem is evolving toward multi-model NLP fusion systems, where different models specialize in different linguistic tasks.

Emerging Trend: Hybrid Intelligence Stack

Future AI pipelines will combine:

- Real-time NLP models (Grok-like systems)

- Structured reasoning NLP models (Claude-like systems)

- Multimodal embedding systems (text + vision + audio)

Future Architecture Insight:

Instead of relying on a single model, systems will:

- Route queries dynamically

- Use specialized NLP engines per task

- Merge outputs using semantic fusion layers
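A toy version of this routing idea can be sketched as follows. Everything here is hypothetical: the two engine functions are stubs standing in for calls to a fast real-time model and a structured-reasoning model, the keyword rule replaces the learned classifier a production router would more likely use, and the "fusion" step simply joins the outputs.

```python
import re
from typing import Callable, Dict, List

# Hypothetical engines: stubs, not real model APIs.
def realtime_engine(query: str) -> str:
    return f"[realtime] quick answer to: {query}"

def reasoning_engine(query: str) -> str:
    return f"[reasoning] structured analysis of: {query}"

ENGINES: Dict[str, Callable[[str], str]] = {
    "realtime": realtime_engine,
    "reasoning": reasoning_engine,
}

# Toy routing rule (an assumption): keyword matching instead of a learned classifier.
REALTIME_HINTS = {"today", "latest", "breaking", "now", "trending"}
REASONING_HINTS = {"analyze", "contract", "prove", "architecture", "compliance"}

def route(query: str) -> List[str]:
    words = set(re.findall(r"[a-z]+", query.lower()))
    targets = []
    if words & REALTIME_HINTS:
        targets.append("realtime")
    if words & REASONING_HINTS:
        targets.append("reasoning")
    return targets or ["reasoning"]  # fall back to the structured engine

def answer(query: str) -> str:
    outputs = [ENGINES[name](query) for name in route(query)]
    # Naive "fusion": concatenate per-engine outputs; a real fusion layer would reconcile them.
    return "\n".join(outputs)

print(route("What is trending today?"))              # ['realtime']
print(route("Analyze this contract for compliance"))  # ['reasoning']
print(answer("Analyze the latest earnings news"))
```

A query that matches both hint sets is sent to both engines, which is the case where a fusion layer actually has something to merge.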

Future Conclusion

The future is not a competition between Grok-2.5 and Claude 2.

It is a semantic orchestration layer combining multiple NLP intelligence engines.

Adoption in Europe

Across Europe, NLP adoption is influenced by:

- GDPR-compliant language processing systems

- Multilingual semantic modeling requirements

- Enterprise-level document security standards

Adoption Trends:

- Claude 2 → Strong adoption in legal, finance, enterprise sectors

- Grok-2.5 → Popular in media, startups, real-time analytics

Workflow Optimization Strategy

Best Semantic Workflow

Idea Generation

- Fast semantic brainstorming

- Trend-aware content generation

Structural Refinement

- Deep rewriting

- Logical structuring

- SEO optimization

Final Output Fusion

- Combine adaptive + structured intelligence

This creates a hybrid NLP pipeline for maximum output quality.
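The three-stage workflow can be expressed as a small pipeline. This is a sketch under stated assumptions: `ideate`, `refine` and `fuse` are hypothetical placeholders for calls to whichever models you assign to each stage, and the string formatting stands in for real generation.

```python
from typing import Callable

# Hypothetical stage functions; in a real pipeline each would call a model.
def ideate(topic: str) -> str:
    # Idea Generation: fast, trend-aware brainstorming
    return f"ideas on {topic}"

def refine(ideas: str) -> str:
    # Structural Refinement: rewriting and logical structuring
    return f"structured draft from {ideas}"

def fuse(ideas: str, draft: str) -> str:
    # Final Output Fusion: keep the structured draft, annotated with the raw ideas
    return f"{draft} (source: {ideas})"

def run_workflow(topic: str,
                 ideate_fn: Callable[[str], str] = ideate,
                 refine_fn: Callable[[str], str] = refine,
                 fuse_fn: Callable[[str, str], str] = fuse) -> str:
    ideas = ideate_fn(topic)       # stage 1
    draft = refine_fn(ideas)       # stage 2 consumes stage 1's output
    return fuse_fn(ideas, draft)   # stage 3 sees both earlier outputs

print(run_workflow("AI adoption in Europe"))
```

Passing the stage functions as parameters lets you assign a different model to any stage without touching the orchestration code.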

FAQs

Which is better for coding, Grok-2.5 or Claude 2?

Grok-2.5 is faster, but Claude 2 is more accurate for complex coding projects and documentation-heavy tasks.

Which model is better for deep research?

Claude 2 is significantly better for deep research due to its long-context understanding and structured reasoning.

Can Grok-2.5 handle real-time information?

Yes, Grok-2.5 is designed for real-time awareness and live information processing.

Which model is better suited to enterprise use?

Claude 2 is more suitable for enterprise environments due to its strong safety and alignment systems.

Can Grok-2.5 and Claude 2 be used together?

Yes, many professionals use Grok for ideation and Claude for refinement and final output structuring.

Conclusion

From an NLP engineering standpoint, Grok-2.5 and Claude 2 are not competitors in the traditional sense. They are complementary cognitive architectures.

- Grok-2.5 → Optimized for real-time semantic adaptability and speed

- Claude 2 → Optimized for structured reasoning, safety, and long-context coherence

Final Insight:

The most powerful AI systems in 2026 are not single models; they are integrated NLP ecosystems that combine multiple intelligence layers into unified workflows.