Introduction

Artificial intelligence is advancing at an unprecedented pace. Every year introduces new architectures, larger parameter counts, extended context windows, and more refined alignment techniques. Yet despite the rapid evolution of large language models (LLMs), confusion still exists around one fundamental topic:

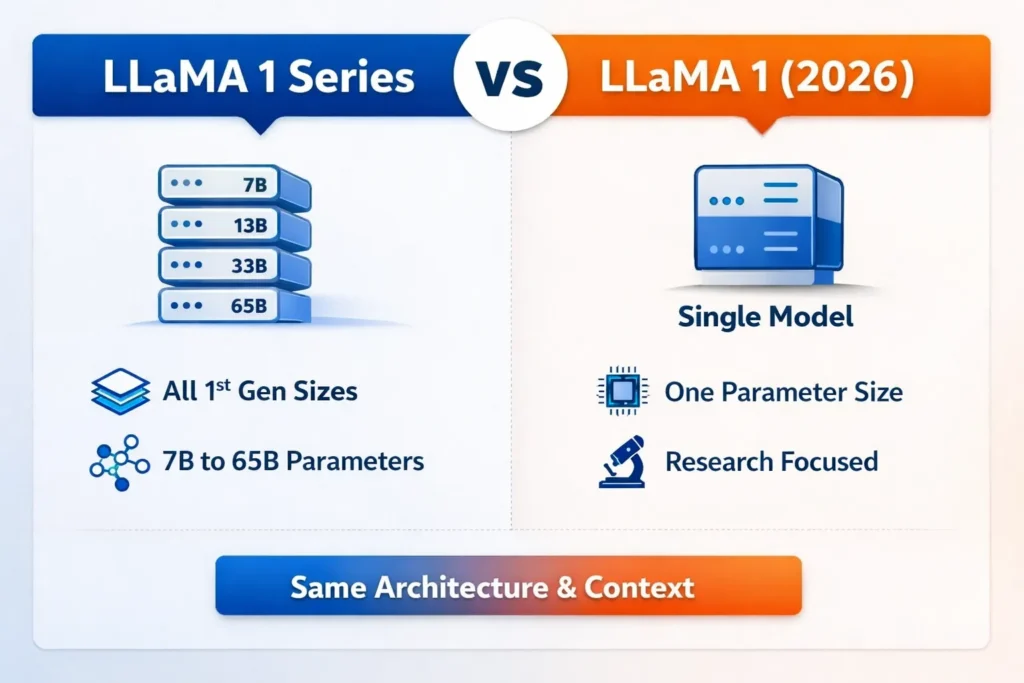

“LLaMA 1 Series vs LLaMA 1 — what is the real difference?”

Is LLaMA 1 a single standalone neural network?

Does “LLaMA 1 Series” represent a separate generation?

Are there structural, architectural, or training divergences?

Or is this simply terminology shaped by SEO rather than engineering reality?

In this comprehensive 2026 guide, we will dissect the distinction with clarity, technical depth, and modern NLP terminology, and remove the ambiguity around the two terms.

By the end of this article, you will understand:

- What LLaMA 1 truly represents in the NLP ecosystem

- What the phrase “LLaMA 1 Series” actually means

- Model architecture and transformer innovations

- Parameter scaling differences and computational implications

- Tokenization and training corpus strategy

- Licensing constraints and deployment limitations

- Benchmark evaluations and reasoning performance

- Context window boundaries and memory capacity

- Practical real-world use cases

- Which variant to choose in 2026

Let’s begin at the foundation.

Understanding the LLaMA Model Family

To evaluate LLaMA 1 properly, we must first understand its origin.

The LLaMA (Large Language Model Meta AI) family was developed and released in early 2023 by Meta AI, the advanced artificial intelligence research arm of Meta Platforms.

At that time, the AI industry was dominated by closed, proprietary systems such as:

- GPT-3

- ChatGPT

- Google Bard

These models were powerful but not openly accessible in terms of weights.

Meta pursued a radically different strategy:

- Release open-weight models

- Optimize training efficiency

- Emphasize high-quality data curation

- Democratize research-level LLM experimentation

The result was transformative. LLaMA 1 reshaped the open-weight AI landscape, proving that model efficiency and data quality could rival brute-force scaling.

What Is LLaMA 1?

LLaMA 1 is the first-generation foundational language model from Meta. It is a decoder-only transformer-based autoregressive neural network trained to predict the next token in a sequence.

It was not:

- A conversational chatbot

- Instruction-tuned by default

- Reinforcement Learning from Human Feedback (RLHF) aligned

- Designed for plug-and-play commercial deployment

Instead, it was a base pre-trained model intended for:

- Academic research

- Architecture experimentation

- Fine-tuning studies

- Efficiency benchmarking

- Transfer learning

In NLP terms, LLaMA 1 is a causal language modeling system optimized through large-scale self-supervised pretraining on diverse corpora.
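To make "causal language modeling" concrete, here is a minimal sketch of the next-token objective in plain Python. It is an illustration only, not Meta's training code: the three-token vocabulary, the logits, and the function names are all hypothetical.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def next_token_loss(logits_per_position, token_ids):
    """Average cross-entropy of predicting token t+1 from tokens 0..t.

    logits_per_position[t] holds the model's scores over the vocabulary
    after reading token_ids[: t + 1]; the target is token_ids[t + 1].
    """
    losses = []
    for t in range(len(token_ids) - 1):
        probs = softmax(logits_per_position[t])
        target = token_ids[t + 1]
        losses.append(-math.log(probs[target]))
    return sum(losses) / len(losses)

# Hypothetical 3-token vocabulary and a 3-token sequence.
token_ids = [0, 2, 1]

# A model with no preference (all-zero logits) versus one that favours
# the correct next token at each position.
uniform_logits = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
confident_logits = [[0.0, 0.0, 4.0], [0.0, 4.0, 0.0]]

uniform_loss = next_token_loss(uniform_logits, token_ids)
confident_loss = next_token_loss(confident_logits, token_ids)
```

The uniform model scores a loss of ln(3), the entropy of a three-way guess, while the confident model scores lower. Pretraining pushes this number down across the whole corpus, with no labels beyond the text itself.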

Available Parameter Sizes

Here is where terminology confusion emerges.

LLaMA 1 was released in four distinct parameter configurations:

| Model Variant | Parameters |
| --- | --- |
| LLaMA 1 7B | 7 Billion |
| LLaMA 1 13B | 13 Billion |
| LLaMA 1 33B | 33 Billion |
| LLaMA 1 65B | 65 Billion |

These are not separate generations. They share:

- Identical architectural design

- Identical training methodology

- Identical tokenization strategy

- Identical maximum context window

- Identical licensing restrictions

The only difference is model scale.

When blogs refer to “LLaMA 1 Series,” they are simply referring collectively to these four parameter variants.

Thus:

LLaMA 1 = The first-generation architecture

LLaMA 1 Series = All four parameter sizes of that architecture

There is no structural divergence.
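One way to picture "same architecture, different scale" is as a single configuration type with four instances. The sketch below uses the hidden dimension, layer count, and head count reported in Meta's LLaMA paper. The class name, field names, and dictionary are our own illustration and do not correspond to any official API.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class LlamaConfig:
    # Fields that change with scale.
    dim: int
    n_layers: int
    n_heads: int
    # Design choices shared by every size (defaults, never overridden below).
    context_length: int = 2048
    norm: str = "RMSNorm"
    activation: str = "SwiGLU"
    positions: str = "RoPE"

# Illustrative registry: "LLaMA 1 Series" is just this set of four configs.
LLAMA_1_SERIES = {
    "7B": LlamaConfig(dim=4096, n_layers=32, n_heads=32),
    "13B": LlamaConfig(dim=5120, n_layers=40, n_heads=40),
    "33B": LlamaConfig(dim=6656, n_layers=60, n_heads=52),
    "65B": LlamaConfig(dim=8192, n_layers=80, n_heads=64),
}

# Collect the non-scale settings; a single entry means they are identical.
shared_design = {
    (c.context_length, c.norm, c.activation, c.positions)
    for c in LLAMA_1_SERIES.values()
}

# Per-head width stays constant while the number of heads grows.
head_dims = {name: c.dim // c.n_heads for name, c in LLAMA_1_SERIES.items()}
```

Here `shared_design` contains exactly one entry, and every size works out to a per-head width of 128. Only width, depth, and head count move between variants.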

This is the most important clarification in the LLaMA 1 Series vs LLaMA 1 discussion.

Architectural Deep Dive

Even though the variants differ only in size, the architecture itself deserves detailed examination.

Transformer Backbone

LLaMA 1 uses a decoder-only transformer architecture, inspired by the original Transformer introduced by Google Research in 2017.

Core components include:

- Multi-head self-attention

- Feed-forward neural layers

- Residual connections

- Normalization layers

This architecture allows the model to:

- Capture long-range dependencies

- Model semantic relationships

- Encode syntactic structures

- Generate coherent text sequences

The transformer design is the foundation of modern LLMs.
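The self-attention component can be sketched in a few lines. This is a deliberately simplified, single-head version in plain Python. A real model adds learned query/key/value projections, multiple heads, and batched tensor operations, and the toy input vectors here are hypothetical.

```python
import math

def causal_attention(queries, keys, values):
    """Single-head scaled dot-product attention with a causal mask.

    Each argument is a list of per-token vectors (lists of floats).
    Position t may only attend to positions 0..t.
    """
    d = len(queries[0])
    outputs = []
    for t, q in enumerate(queries):
        # Scores against the current and earlier tokens only (the causal mask).
        scores = [
            sum(qi * ki for qi, ki in zip(q, keys[s])) / math.sqrt(d)
            for s in range(t + 1)
        ]
        # Softmax over the visible positions.
        m = max(scores)
        weights = [math.exp(x - m) for x in scores]
        total = sum(weights)
        weights = [w / total for w in weights]
        # Weighted sum of the visible value vectors.
        out = [
            sum(w * values[s][j] for s, w in enumerate(weights))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs

# Toy sequence of three 2-dimensional token vectors.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out = causal_attention(x, x, x)

# Changing the last token must not affect earlier outputs.
x_changed = x[:2] + [[5.0, -3.0]]
out_changed = causal_attention(x_changed, x_changed, x_changed)
```

Because each position only sees itself and what came before, `out[:2]` and `out_changed[:2]` are identical. That masking is what makes a decoder-only model autoregressive.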

RMSNorm Instead of LayerNorm

LLaMA 1 replaces traditional Layer Normalization with RMSNorm (Root Mean Square Normalization).

Why this matters:

- Reduces computational overhead

- Improves training stability

- Enhances gradient flow

- Supports better scaling behavior

From an optimization perspective, RMSNorm simplifies normalization while maintaining convergence reliability.
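The simplification is easiest to see side by side. The following is a minimal plain-Python sketch, not the production kernel. The `eps` value and the function names are assumptions chosen for illustration.

```python
import math

def rms_norm(x, gain, eps=1e-6):
    """RMSNorm: divide by the root mean square, then apply a learned gain.

    No mean subtraction and no bias term.
    """
    mean_square = sum(v * v for v in x) / len(x)
    inv_rms = 1.0 / math.sqrt(mean_square + eps)
    return [g * v * inv_rms for g, v in zip(gain, x)]

def layer_norm(x, gain, bias, eps=1e-6):
    """Classic LayerNorm for comparison: centre, scale by std, add bias."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    inv_std = 1.0 / math.sqrt(var + eps)
    return [g * (v - mean) * inv_std + b for g, v, b in zip(gain, x, bias)]

hidden = [3.0, 4.0]
normed = rms_norm(hidden, gain=[1.0, 1.0])

# After RMSNorm with unit gain, the mean square of the output is ~1.
normed_mean_square = sum(v * v for v in normed) / len(normed)
```

RMSNorm skips the mean computation and the bias parameter that LayerNorm carries, which is where the reduced overhead comes from. Unlike LayerNorm, the output is not centred around zero.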

SwiGLU Activation Function

Instead of ReLU or GELU, LLaMA 1 uses SwiGLU (Swish-Gated Linear Units).

This improves:

- Nonlinear representation capacity

- Parameter efficiency

- Mathematical reasoning performance

- Code synthesis ability

SwiGLU increases expressive capacity without proportionally increasing computational burden.
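As a rough sketch, a SwiGLU feed-forward block multiplies a Swish-activated "gate" projection element-wise with a second linear projection before projecting back down. The plain-Python version below uses tiny hypothetical weight matrices and our own function names. Real implementations operate on large tensors.

```python
import math

def silu(v):
    """Swish / SiLU activation: v * sigmoid(v)."""
    return v / (1.0 + math.exp(-v))

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]

def swiglu_ffn(x, w_gate, w_up, w_down):
    """Feed-forward block: down( silu(gate(x)) * up(x) ), without biases."""
    gate = [silu(v) for v in matvec(w_gate, x)]
    up = matvec(w_up, x)
    hidden = [g * u for g, u in zip(gate, up)]
    return matvec(w_down, hidden)

# Hypothetical tiny weights: model width 2, hidden width 3.
w_gate = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
w_up = [[0.5, 0.5], [1.0, -1.0], [0.0, 2.0]]
w_down = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]]

y = swiglu_ffn([1.0, 2.0], w_gate, w_up, w_down)
```

The gated form needs three weight matrices instead of the usual two. The LLaMA paper compensates by shrinking the hidden width to two thirds of the conventional 4x expansion, which keeps the parameter count comparable.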

Rotary Positional Embeddings (RoPE)

Positional encoding is essential in transformers.

LLaMA 1 implements Rotary Positional Embeddings (RoPE), which:

- Encode token position through rotation matrices

- Improve extrapolation

- Enhance structural coherence

- Support better attention decay patterns

However, the maximum context window remained:

2048 tokens

By 2026 standards, this is modest compared to modern 128K–200K context models.
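The rotation idea behind RoPE can be demonstrated with a short plain-Python sketch. It rotates adjacent pairs of dimensions by an angle proportional to the token position. The base of 10000 follows the original RoPE formulation, the pairing convention differs between implementations, and the query and key vectors are hypothetical.

```python
import math

def rope(vector, position, base=10000.0):
    """Rotate consecutive pairs of `vector` by position-dependent angles."""
    d = len(vector)
    rotated = []
    for i in range(0, d, 2):
        # Each pair gets its own frequency; later pairs rotate more slowly.
        theta = position * base ** (-i / d)
        cos_t, sin_t = math.cos(theta), math.sin(theta)
        x, y = vector[i], vector[i + 1]
        rotated += [x * cos_t - y * sin_t, x * sin_t + y * cos_t]
    return rotated

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

q = [1.0, 0.5, -0.3, 2.0]
k = [0.2, -1.0, 0.7, 0.4]

# Same relative offset (3 positions apart) at two different absolute positions.
score_near = dot(rope(q, 5), rope(k, 2))
score_far = dot(rope(q, 105), rope(k, 102))
```

The two scores agree up to floating-point error: after rotation, the attention score depends only on how far apart the two tokens are, not on where they sit in the sequence. The rotation also leaves vector lengths unchanged.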

Training Data & Corpus Strategy

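LLaMA 1 was trained on up to approximately 1.4 trillion tokens of curated public data: the 33B and 65B models saw the full 1.4 trillion, while the 7B and 13B models were trained on roughly 1.0 trillion.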

Data sources included:

- Web text corpora

- Wikipedia

- Academic publications

- Books

- GitHub repositories

- Code datasets

Meta emphasized quality-controlled filtering instead of pure scale expansion.

This dataset optimization strategy allowed smaller models to rival significantly larger systems in benchmarks. Meta reported that the 13B model outperformed the 175B-parameter GPT-3 on most of the benchmarks it tested.

In NLP terms, LLaMA 1 benefited from:

- High signal-to-noise ratio data

- Deduplication filtering

- Language diversity balancing

- Structured sampling

Benchmark Performance Analysis

Upon release, LLaMA 1 produced remarkable results.

The 13B model rivaled much larger proprietary systems across:

- Commonsense reasoning tasks

- MMLU benchmarks

- Code evaluation sets

- Mathematical reasoning tests

The 65B variant delivered:

- Strong logical inference

- Multi-step reasoning

- Improved abstraction capability

However, limitations included:

- No instruction fine-tuning

- No safety alignment

- No conversational RLHF layer

It was a base foundation model, not a polished conversational assistant.

Licensing Constraints

One of the most critical aspects in the LLaMA 1 Series vs LLaMA 1 discussion is licensing.

LLaMA 1 was released under a research-only (noncommercial) license.

Implications

- Required application approval

- No automatic commercial redistribution

- Restricted enterprise SaaS deployment

- Limited startup commercialization

This constraint significantly reduced direct production adoption.

Later generations resolved this issue.

Real-World Use Cases in 2026

Even in 2026, LLaMA 1 retains relevance.

Ideal For:

- Academic research

- Transformer architecture analysis

- Parameter scaling experiments

- Transfer learning research

- Benchmark replication

- Efficiency testing

- GPU optimization studies

Not Suitable For:

- Enterprise chatbots

- Long-context document analysis

- Multimodal AI systems

- Consumer SaaS products

- High-safety deployments

Parameter Scaling Comparison

| Use Case | 7B | 13B | 33B | 65B |
| --- | --- | --- | --- | --- |
| Research | ✅ | ✅ | ✅ | ✅ |
| Small GPU Setup | ✅ | ⚠ | ❌ | ❌ |
| Advanced Reasoning | ⚠ | ✅ | ✅ | ✅ |
| Cost Efficiency | High | Medium | Low | Very Low |
| Deployment Ease | Easy | Moderate | Hard | Very Hard |

Scaling effects include:

- Increased memory requirements

- Higher VRAM demand

- Greater inference latency

- Improved representational depth

- Enhanced abstraction ability
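The memory side of these scaling effects is simple arithmetic: parameter count times bytes per parameter. The sketch below estimates weight memory only. It ignores the KV cache, activations, and framework overhead, uses the nominal parameter counts from the model names rather than exact figures, and its names are our own.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}

# Nominal sizes taken from the variant names (assumption: exact counts differ slightly).
NOMINAL_PARAMS = {"7B": 7e9, "13B": 13e9, "33B": 33e9, "65B": 65e9}

def weight_memory_gib(n_params, dtype="fp16"):
    """Memory for the weights alone, in GiB (no KV cache, no activations)."""
    return n_params * BYTES_PER_PARAM[dtype] / 2**30

fp16_estimates = {
    name: round(weight_memory_gib(n, "fp16"), 1)
    for name, n in NOMINAL_PARAMS.items()
}
int4_estimates = {
    name: round(weight_memory_gib(n, "int4"), 1)
    for name, n in NOMINAL_PARAMS.items()
}
```

In 16-bit precision the 7B weights come to roughly 13 GiB and the 65B weights to roughly 121 GiB, which is why the table above marks 7B as comfortable on a single small GPU and 65B as very hard to deploy. Quantizing to 4 bits cuts each figure to a quarter, at some cost in quality.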

Pros and Cons

Advantages

- Efficient architecture

- Strong parameter-to-performance ratio

- Open-weight accessibility (research)

- Multiple scaling options

- High-quality pretraining data

Disadvantages

- Research-only license

- 2048 token context limit

- No instruction alignment

- Limited safety guardrails

- Obsolete compared to modern 2026 systems

LLaMA 1 Series Strengths & Weaknesses

Strengths

- Flexible scaling

- Hardware adaptability

- Ideal for experimentation

- Efficient design principles

Weaknesses

- No architectural differentiation between sizes

- Limited context window

- No multimodal capacity

- Not production-ready

Which Should You Choose?

When users ask:

“LLaMA 1 Series vs LLaMA 1 – which is better?”

They are essentially selecting a parameter scale.

Choose 7B or 13B if:

- You have limited GPU memory

- You are studying transformer mechanics

- You require cost-effective experimentation

Choose 33B or 65B if:

- You have high-performance GPUs

- You are benchmarking reasoning depth

- You need stronger abstraction capability

However, for 2026 commercial deployment, newer generations such as:

- LLaMA 2

- LLaMA 3

are significantly more practical.

How LLaMA 1 Influenced Future Generations

LLaMA 1 proved a groundbreaking principle:

Model efficiency + curated data > brute-force parameter expansion

Its success triggered:

- Broader open-weight adoption

- Community fine-tuning ecosystems

- Instruction-tuned derivatives

- Efficient transformer research

Later generations improved:

- Licensing flexibility

- Context window expansion

- Safety alignment

- Instruction tuning

- Conversational capability

- Multimodal integration

LLaMA 1 remains historically significant as a turning point in open-weight AI development.

FAQs

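Q: What does “LLaMA 1 Series” mean?

A: “LLaMA 1 Series” refers to all parameter sizes (7B, 13B, 33B, 65B) of LLaMA 1.

Q: Can LLaMA 1 be used commercially?

A: Originally, it was restricted to research use only.

Q: What is the context window of LLaMA 1?

A: The standard context window is 2048 tokens.

Q: Is LLaMA 1 still worth using in 2026?

A: For research and historical benchmarking. Not ideal for production AI systems.

Q: Which LLaMA 1 size is the most capable?

A: The 65B variant delivers the strongest reasoning but requires significant hardware.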

Conclusion

The debate around LLaMA 1 Series vs LLaMA 1 is largely semantic rather than technical.

There is no architectural discrepancy.

No generational divergence.

No training variation.

The “Series” label simply aggregates the 7B, 13B, 33B, and 65B parameter scales under one umbrella.

LLaMA 1 remains a landmark innovation in NLP history: a model that demonstrated how efficiency, optimization, and data curation could compete with massive proprietary systems.

In 2026, its primary value lies in:

- Educational exploration

- Research benchmarking

- Transformer experimentation

- Historical comparison

For production AI systems, newer models are superior.

But for understanding how modern large language models evolved, LLaMA 1 remains foundational: a milestone that reshaped open-weight AI.