Ray-Ban Meta AI Glasses (2026) — The Smart Glasses Everyone Is Talking About

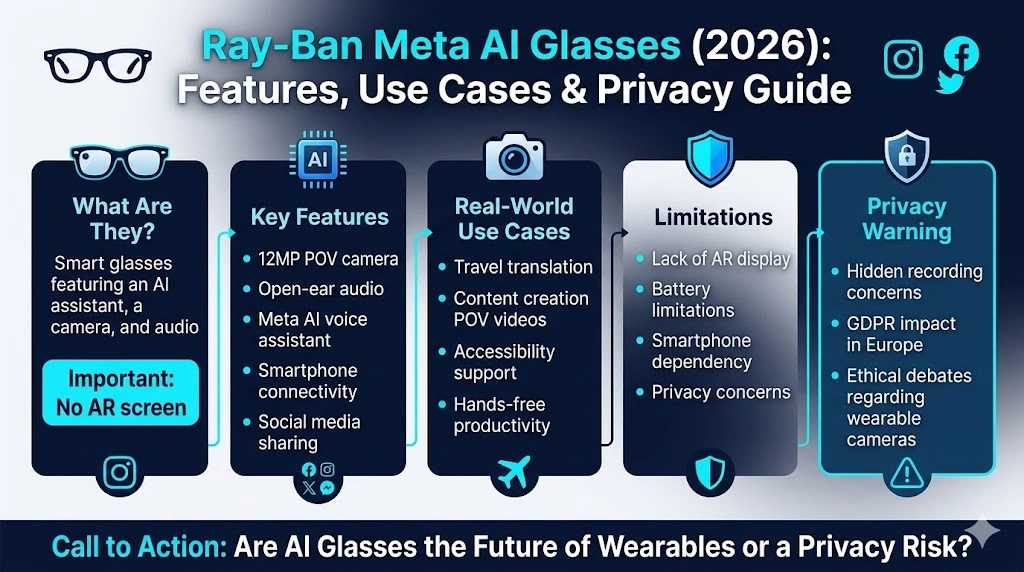

Ray-Ban Meta AI Glasses are worth considering in 2026 for hands-free photos, calls, and smart voice features, but they’re not perfect for everyone. If you’re unsure about privacy, Europe support, battery life, or real daily value, this guide covers what works, what fails, and what to know before you buy these smart glasses.

A clear shift toward wearable intelligence is shaping the technology landscape in 2026. People no longer want digital tools that live only on a phone screen or desktop monitor. They want devices that are immediate, intuitive, discreet, and useful in real time. That is exactly why the Ray-Ban Meta AI Glasses have become one of the most discussed consumer tech products of the year.

These smart glasses sit at the intersection of fashion, convenience, and machine intelligence. They look like recognizable Ray-Ban eyewear, but inside the frame is a compact system designed for voice interaction, photo capture, video recording, open-ear audio, and AI-powered assistance. This makes them more than just a gadget. They are a statement about where personal computing is heading.

Ray-Ban Meta AI Glasses Review — Are They Actually Worth Buying?

For many users, the appeal is simple. The glasses let you interact with artificial intelligence without always pulling out a phone. You can ask questions, capture a moment, listen to audio, or share content with less friction. For travelers, creators, and everyday users, that is a powerful advantage. For privacy advocates, regulators, and cautious consumers, however, the same features raise serious questions about consent, recording transparency, and public surveillance culture.

That tension is what makes Ray-Ban Meta AI Glasses so important. They are not merely another accessory. They represent the early mainstream version of wearable AI, and they give us a preview of what hands-free computing might look like in the years ahead.

This article breaks down the product in detail. You will see what it is, how it works, which features matter most, where it helps in real life, where it falls short, and why privacy remains one of the biggest talking points. The goal is to make the topic easy to understand while still giving enough depth for readers who want a serious guide.

What Are Ray-Ban Meta AI Glasses?

Ray-Ban Meta AI Glasses are smart eyewear created through a collaboration between Meta and Ray-Ban. They combine the style language of classic Ray-Ban frames with built-in digital hardware that supports camera capture, audio playback, voice interaction, and Meta AI integration.

In practical terms, they are wearable smart glasses that allow you to take photos, record short videos, make calls, listen to music or podcasts, and ask AI-powered questions using your voice. They are designed to be light, fashionable, and useful without feeling overly technical or bulky. That design choice matters because mainstream users are much more likely to wear a product that looks like ordinary eyewear than a product that looks like a lab prototype.

One of the most important clarifications is that these are not fully augmented reality glasses. They do not project holograms onto your field of vision, and they do not offer an on-lens digital interface in the way people often imagine when they hear “smart glasses.” Instead, they focus on audio, camera, and AI assistance. That distinction is critical because it shapes both the product’s strengths and its limitations.

A simple way to think about them is this: they function like a mini AI assistant, a hands-free camera, and a lightweight audio device built into stylish eyewear. That comparison helps explain why the product appeals to so many different users. It is not trying to replace every device you own. It is trying to make everyday digital actions faster, easier, and more natural.

How Ray-Ban Meta AI Glasses Work

The operating idea behind the glasses is straightforward. They connect to a smartphone and use the Meta app as the central control system. Once paired, the glasses can receive instructions, sync media, handle voice prompts, and communicate with Meta services through the phone connection.

The user experience is designed to feel conversational and minimal. You wear the glasses, speak a command, and the device responds through audio feedback or captures the requested media. This reduces the number of physical interactions required compared with using a phone camera or opening separate apps.

The workflow typically looks like this:

You wear the glasses throughout the day.

You use your voice or a touch gesture to trigger an action.

The glasses send the relevant command or data through the paired phone.

Meta AI processes the request when needed.

You hear the answer or see the result through the connected ecosystem.

That may sound simple, but simplicity is a major feature here. A lot of wearable technology fails because it adds more friction than it removes. These glasses are trying to do the opposite. They remove friction from common moments such as taking a quick photo, capturing a memory, hearing a reply, asking a question, or getting help while you are on the move.

The device is strongest when the action is brief, context-aware, and hands-free. That is the sweet spot. It works well for “capture this now,” “tell me what I am looking at,” “send this quickly,” or “play audio without headphones.” It is less compelling when you need long sessions, deep editing, or fully independent computing.

Key Features of Ray-Ban Meta AI Glasses

1. Built-in AI Camera System

One of the most defining features is the integrated camera system. The camera is designed to capture the world from the wearer’s perspective, which gives photos and videos a natural first-person point of view. That perspective has become especially popular in creator culture, travel content, and social media storytelling because it feels immersive and authentic.

The hands-free nature of the camera is a major advantage. Instead of reaching for a phone, opening the camera app, and framing a shot manually, you can capture a moment instantly. That matters in situations where timing is more important than perfection. A street performance, a market scene, a conversation, a travel view, or a spontaneous reaction can all be recorded with much less disruption.

This also makes the glasses appealing to people who want a more candid visual style. The footage often feels less staged because the camera sees what the wearer sees. For content creators, that can create stronger emotional resonance, especially in lifestyle, travel, and daily vlog formats.

2. Open-Ear Audio Technology

Another core feature is the open-ear audio setup. Instead of sealing your ears with traditional earbuds, the glasses deliver sound through discreet built-in speakers positioned near the ears. This creates a more ambient, situational listening experience.

The benefit is twofold. First, you can listen to music, podcasts, or calls without blocking out the environment. Second, you stay more aware of what is happening around you. That is useful for walking in cities, moving through busy spaces, cycling, navigating transit, or simply staying alert while listening.

Open-ear audio is not only a convenience feature. It is also a safety feature in the right context. Being able to hear your surroundings while still receiving audio output makes the glasses more adaptable than many standard personal audio devices. For some users, that awareness is the main reason to choose them.

3. Meta AI Assistant Integration

The Meta AI layer is what transforms the glasses from a simple wearable camera into a smart assistant. This is the feature that gives the product its “AI” identity. Users can ask spoken questions, request assistance, and receive contextual answers without picking up a phone.

That means the glasses are useful for lightweight information tasks such as identifying something, summarizing what you see, translating text, guiding you with directions, or helping you handle a quick task while walking, traveling, or working.

The value here is immediacy. The user does not need to stop, unlock a screen, and search manually. The interaction feels more natural because it resembles a conversation. That conversational design is one reason AI wearables are often described as the next stage in human-device interaction.

In broader NLP terms, the product is interesting because it brings intent-based interaction into the physical world. Instead of forcing users to navigate menus, it responds to spoken intent. That reduces cognitive load and makes digital assistance feel more contextual.

4. Smart Charging Case

The portable charging case is another practical feature that improves everyday usability. Wearable devices live or die based on convenience, and battery management is one of the biggest hurdles in this category. A compact charging case gives the glasses a more travel-friendly profile and helps extend usage during the day.

The case is important because it supports the kind of movement-heavy lifestyle these glasses are designed for. Whether you are commuting, sightseeing, recording clips, or moving between meetings, a convenient charging system helps reduce downtime. For many users, that is what makes the device feel like a real accessory rather than a fragile tech experiment.

5. Smartphone Connectivity

The glasses do not operate in a vacuum. They are part of a larger mobile ecosystem, and that ecosystem is where many of the practical benefits show up. Through smartphone pairing and Meta app integration, users can share content, sync media, access settings, and connect the glasses to familiar social tools.

This connection matters because it allows a smoother path from capture to sharing. A photo or video can move from the glasses to the phone and into a social channel with fewer steps than a typical camera workflow. For users who already live inside Meta’s ecosystem, this can be especially efficient.

The real advantage is continuity. The glasses do not replace your phone. They extend it. That makes the product easier to adopt for people who want an add-on experience rather than an all-in-one replacement.

Real-World Use Cases of Ray-Ban Meta AI Glasses

Travel and Exploration

Travel is one of the strongest use cases for Ray-Ban Meta AI Glasses. When you are in a new city, your attention is constantly shifting between navigation, landmarks, signs, conversations, and sensory overload. A hands-free smart device fits that environment well.

The glasses can support translation, on-the-go photography, quick information lookup, and POV travel documentation. Instead of stopping every few minutes to use a phone, you can keep moving and still capture what matters. That creates a smoother travel experience and makes the device especially attractive to tourists, backpackers, city explorers, and international visitors.

In a travel context, the glasses feel useful because they reduce interruptions. They do not require the user to pause the moment. They help the moment continue.

Content Creation and Social Media

For creators, the product is easy to understand. It offers a convenient way to capture first-person footage with minimal setup. That makes it useful for lifestyle videos, street scenes, behind-the-scenes clips, tutorials, reaction content, and travel storytelling.

The creator advantage is authenticity. A handheld phone can feel more staged. A chest mount can feel awkward. A traditional camera can feel intrusive. Smart glasses strike a middle ground by letting the creator record what they see in a more fluid, less noticeable way.

This is especially appealing for short-form content. Social platforms reward immediacy, movement, and personality. Ray-Ban Meta AI Glasses support that style by making capture feel natural rather than technical. That can improve consistency for creators who want to document life without always interrupting it.

Accessibility Support

Wearable AI also has meaningful accessibility potential. For some users, smart glasses can provide valuable assistance with reading text, describing surroundings, or offering spoken guidance. That can be helpful in everyday tasks that would otherwise require repeated phone interaction.

Accessibility is one of the most important long-term arguments for this type of product. When technology becomes more conversational and more ambient, it can reduce barriers for users who may struggle with small screens, manual input, or constant device handling.

That does not mean the product solves every accessibility need. It does mean the category has clear promise, especially when AI can provide situational help in real time.

Productivity and Everyday Work

The glasses also fit certain productivity scenarios. They can be useful for voice notes, quick reminders, walking meetings, brief information checks, and fast capture of ideas. Their value is not in replacing a laptop or tablet. Their value is in helping the user stay in motion while still staying connected.

This is the kind of productivity that matters outside an office desk. A person can be commuting, moving between rooms, or handling a routine errand and still remain somewhat plugged into digital life. That flexibility makes the glasses more than just a novelty for some users. They become an everyday companion device.

What Ray-Ban Meta AI Glasses Do Well

The strongest argument in favor of these glasses is that they reduce friction. Modern digital life is often full of tiny interruptions: unlock the phone, open the app, switch windows, press record, confirm the action, then send or save the result. The glasses compress that process into something more natural.

They also succeed because they are wearable in a normal social setting. Many smart devices fail because they make users look unusual. These glasses avoid that problem by leaning on a trusted fashion form factor. That makes them easier to wear in public, which in turn makes them more useful.

Another strength is that they focus on practical capabilities instead of trying to do everything. The product is not overloaded with unnecessary complexity. It does a few major things well enough to matter: capture, listen, ask, and share. That simplicity helps adoption.

From an NLP and UX perspective, the product is built around low-effort interactions. The user expresses intent in natural language, and the device responds in kind. This conversational pattern is one of the clearest signals of how consumer technology is evolving.

Limitations of Ray-Ban Meta AI Glasses

Despite the appeal, the glasses have real limitations. The most obvious is the lack of a true AR display. For some consumers, that is a dealbreaker because they expect smart glasses to behave more like a visual computing platform. These glasses do not do that. They are not meant to overlay digital objects on the real world.

Another limitation is dependence on the smartphone ecosystem. While the glasses feel hands-free, they are still tied to mobile connectivity and companion software. That means they are not fully independent devices. They rely on the broader phone-and-app infrastructure to deliver their best features.

Battery life is another practical concern. Wearable devices often face a tradeoff between compact design and long usage time. When the camera, audio, AI, and connectivity features are active, battery performance can become a limiting factor, especially for heavy users.

There are also recording constraints. The device is best suited to short clips and quick captures rather than long-form production. That makes sense for the product category, but it still means users need to understand what the glasses are and are not built to do.

Finally, AI is not perfect. The assistant can make mistakes, misunderstand context, or provide imperfect answers. That is not unique to this product, but it matters because users may expect the glasses to feel more magical than they really are. Like any AI tool, the output is helpful but not infallible.

Privacy and Ethical Concerns

If there is one topic that defines public discussion about Ray-Ban Meta AI Glasses, it is privacy. The conversation goes far beyond technical features. It touches on social norms, consent, public behavior, and the future of surveillance.

Hidden Recording Risk

The most immediate concern is that smart glasses can make recording less obvious. A phone camera is visible. A wearable camera can be more discreet. That creates unease for people nearby, because they may not know when a recording is happening or whether their image or voice is being captured.

That concern is not merely hypothetical. Public comfort matters. If wearable cameras become normalized without clear social standards, people may feel more exposed in everyday settings.

Always-On Environmental Awareness

Another concern is ambient capture. When a device is always present on your face, it can seem as though the environment is continuously being observed, analyzed, or interpreted. Even if the system is not recording constantly, the perception alone can cause alarm.

This is where AI wearables become ethically sensitive. The more capable they become, the more they resemble a persistent sensing layer rather than a simple accessory.

Data Transparency and Control

Many users also worry about where their data goes, how it is stored, what metadata is collected, and how much they truly understand about the processing pipeline. These are important questions because AI products often rely on broad data ecosystems and complex platform policies.

For a device worn on the face, trust is especially important. People need clear rules, clear indicators, and clear controls. Without those, the product risks being seen not as a helpful wearable, but as a new form of surveillance hardware.

The Broader Ethical Debate

Supporters argue that smart glasses can be beneficial in accessibility, travel, emergency help, and hands-free productivity. Critics argue that they normalize behavior that undermines public privacy and may encourage a constant recording culture.

Both views have merit. The product is useful, but usefulness does not erase ethical responsibility. The challenge for this category is to make the technology more transparent, more respectful, and more socially acceptable as adoption grows.

Europe Guide: What Changes in European Markets?

Europe is an especially important region for smart glasses because privacy expectations are generally higher and data rules are stricter. That affects both product adoption and public reception.

GDPR and Consent Expectations

The General Data Protection Regulation has shaped how companies think about personal data, recording, and user transparency. In the context of wearable smart glasses, GDPR concerns can affect how data is collected, stored, processed, and shared. This is one reason Europe tends to take a more cautious approach to devices that may record people in public spaces.

Consent matters more strongly in this environment. Users are expected to think carefully about recording others, especially in workplaces, private venues, and sensitive environments.

Cultural Sensitivity to Recording Devices

Europe also has a strong cultural awareness around privacy, surveillance, and public recording. That does not mean the product has no audience there. It does mean adoption may be more selective and deliberate than in markets that move faster on consumer tech experimentation.

The result is a slower but still meaningful path to adoption. Tech enthusiasts, creators, and early adopters may embrace the product first, while mainstream users may wait until privacy concerns are clearer and public norms become more settled.

Availability and Market Variation

Pricing and availability can vary by country, retailer, frame choice, and promotional period. European buyers often compare not just the device itself but also the total cost of ownership, local support, app compatibility, and any country-specific rules that affect usage.

This is why Europe deserves a separate guide in any serious article about the glasses. The device may be global in branding, but the user experience is not identical everywhere.

Ray-Ban Meta AI Glasses vs Alternatives

To understand the product properly, it helps to compare it with adjacent categories.

Versus Smartphone AI

The smartphone still wins on raw capability, screen size, battery power, and app depth. It is more versatile, more established, and better for long-form tasks. But the glasses win on convenience, speed, and hands-free interaction.

That is the key tradeoff. The phone is deeper. The glasses are faster.

Versus Future AR Glasses

Future AR glasses may offer visual overlays, richer spatial interaction, and more advanced digital experiences. If that vision becomes mainstream, then Ray-Ban Meta AI Glasses may look like an early stepping stone rather than the final destination.

Still, stepping stones matter. This product is valuable because it trains users to accept voice-driven wearables, camera-equipped eyewear, and AI assistance in daily life. That makes it an important bridge product.

Versus Traditional Audio Wearables

Compared with standard earbuds or headphones, these glasses offer a more integrated experience. You get audio, camera, and AI in one object instead of multiple separate devices. That can reduce clutter and make daily carry simpler.

The tradeoff is specialization. Earbuds are usually better for pure audio. Smart glasses are better when audio is only one part of a broader use case.

Who Should Buy Ray-Ban Meta AI Glasses?

This product is best for people who value convenience, style, and hands-free interaction. It is especially strong for:

Travelers who want fast capture and AI help on the move.

Content creators who want natural POV footage.

Tech enthusiasts who enjoy early wearable platforms.

Users who want quick voice-driven assistance without opening a phone.

People who like open-ear audio and want to stay aware of their surroundings.

The glasses are less ideal for users who expect a full AR experience, need long battery life, or want a device that can completely replace the smartphone. They are also not the right choice for people who are highly uncomfortable with camera wearables or who prefer minimal data exposure.

Pricing of Ray-Ban Meta AI Glasses in Europe

Pricing varies depending on frame style, lens type, edition, and retailer. In Europe, buyers typically see different price points across standard versions, upgraded frame designs, and special editions. A realistic way to think about the market is that the glasses sit in the premium wearable category rather than the budget gadget category.

That matters because the purchase decision is not just about features. It is also about expectations. A user paying a premium will expect comfort, durability, good app integration, reliable capture, and a design that feels worth wearing every day.

Because country-level pricing can shift, buyers in Germany, the UK, France, Italy, the Netherlands, and other European markets often compare local offers before choosing a model. Taxes, currency differences, and availability can all affect the final decision.

Pros and Cons

Advantages

The glasses are stylish enough for daily wear, which is a major advantage in a category where design often decides adoption. They also offer fast access to AI, hands-free media capture, open-ear listening, and social sharing convenience. For creators and travelers, those benefits can translate into genuine day-to-day value.

Another advantage is simplicity. The product does not try to overwhelm the user. It does a small number of things that matter, and it does them in a way that feels more natural than using a phone for everything.

Disadvantages

The lack of an AR screen limits the product’s ambition. Battery constraints remain real. Privacy concerns are not minor. Smartphone dependence is still a factor. And AI output is still imperfect.

In other words, the glasses are impressive, but they are not a final form of wearable computing. They are an early, useful, and fashionable step in that direction.

How to Use Ray-Ban Meta AI Glasses

Using the glasses is relatively straightforward. First, the user sets up the companion app and pairs the device with a smartphone. After that, the glasses can be configured for photo capture, video, audio playback, and AI interactions.

The best experience comes from learning the main voice commands and understanding how the device fits into everyday routines. Short, practical interactions work best: asking for help while walking, capturing a quick memory, or listening to audio while staying aware of the environment.

A few simple habits improve the experience. Keeping the software updated helps with performance and stability. Charging the case regularly helps prevent interruptions. Using the glasses in short bursts prevents battery frustration. And being mindful of recording etiquette helps avoid social awkwardness or privacy concerns.

Europe Adoption Trends

A combination of fashion appeal, creator culture, tech enthusiasm, and privacy sensitivity shapes adoption across Europe. Cities with strong innovation scenes often show greater curiosity around wearable AI. Creators and early adopters are usually the first to test the device in public life.

At the same time, adoption is slowed by questions about consent, surveillance, and data handling. This means Europe is likely to remain a thoughtful market rather than a rushed one. People may be interested, but they will also ask hard questions before they buy.

That slow-and-steady adoption pattern may actually benefit the category in the long run. Products like this often improve when public expectations force better transparency and better design.

Use Case Effectiveness

The strongest use cases are travel and content creation because those environments benefit the most from hands-free capture and quick AI assistance. Productivity is also promising, especially for brief tasks and mobility-based work.

The weaker use cases are gaming and advanced AR tasks, because that is not what the glasses were designed for. Anyone expecting immersive mixed reality will likely feel underwhelmed. Anyone wanting a stylish, useful, voice-driven wearable will probably find them much more compelling.

Future of Ray-Ban Meta AI Glasses

The future of this category is likely to bring better battery efficiency, smarter AI models, stronger translation tools, and more mature hardware. Over time, we may also see more serious competition from major platform players, which will push the category forward faster.

There is also the possibility that future versions will move closer to true AR capability. If that happens, today’s glasses may be remembered as an important early bridge between fashion eyewear and spatial computing.

For now, the product is best seen as a strong foundation rather than a finished destination. It shows what happens when wearable form, AI interaction, and practical design come together in one consumer-friendly package.

FAQs

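What are Ray-Ban Meta AI Glasses used for?

They are used for hands-free photos, videos, AI assistance, and music playback.

Do they have a display or AR screen?

No, they do not have any display or AR screen.

Can they replace a smartphone?

No, they are companion devices only.

Do they address privacy concerns?

They include indicators, but privacy concerns still exist.

Are they worth buying?

Yes, for content creators and travelers. Not ideal for AR users.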

Conclusion

Ray-Ban Meta AI Glasses in 2026 stand out because they make wearable AI feel practical rather than futuristic for novelty’s sake. They are stylish, lightweight, and capable of useful real-world actions such as capturing moments, listening hands-free, and asking for AI assistance without constantly reaching for a phone.

Their strongest appeal lies in everyday convenience. They help travelers move faster, creators capture more naturally, and users interact with digital tools in a less interruptive way. They also represent a major step in the evolution of consumer AI wearables, where the interface becomes more conversational and more ambient.

At the same time, the product is not without tradeoffs. There is no AR display, battery life is still a constraint, smartphone dependence remains, and privacy concerns are serious enough to shape public perception. In Europe especially, that privacy dimension matters more because of stronger regulatory expectations and cultural sensitivity around recording and surveillance.

The final verdict is balanced. Ray-Ban Meta AI Glasses are not a full replacement for a smartphone, and they are not a complete AR future device. They are, however, one of the most compelling early wearable AI products on the market. For the right buyer, they are useful, stylish, and forward-looking. For the wrong buyer, they may feel limited. That contrast is exactly what makes them such an important product to understand in 2026.