Introduction

Artificial intelligence continues to accelerate at an unprecedented pace, and for practitioners, AI developers, and data-driven enterprises, selecting the most suitable large language model (LLM) is now a pivotal decision. The right choice can profoundly affect processing speed, inference costs, long-context Comprehension, and reasoning reliability.

In 2025, DeepSeek‑V3.2‑Exp was unveiled as a progressive enhancement over DeepSeek‑V3.1 (Terminus), bringing advanced sparse attention mechanisms and efficiency improvements that target large-scale, long-context applications. In this comprehensive 2026 guide, we provide a detailed breakdown of every relevant aspect, including architecture, performance metrics, benchmark results, deployment scenarios, cost analysis, and practical applications, helping you make an informed choice for production or research.

When it comes to modern and LLM deployment, evaluating models solely on raw reasoning power is insufficient. There are three pivotal dimensions to consider:

- Computational Efficiency – How quickly can the model process extensive sequences of tokens?

- Operational Cost – What is the effective price per token or API request in large-scale deployments?

- Robustness & Output Accuracy – Does the model maintain consistency and reliability across multi-step reasoning tasks?

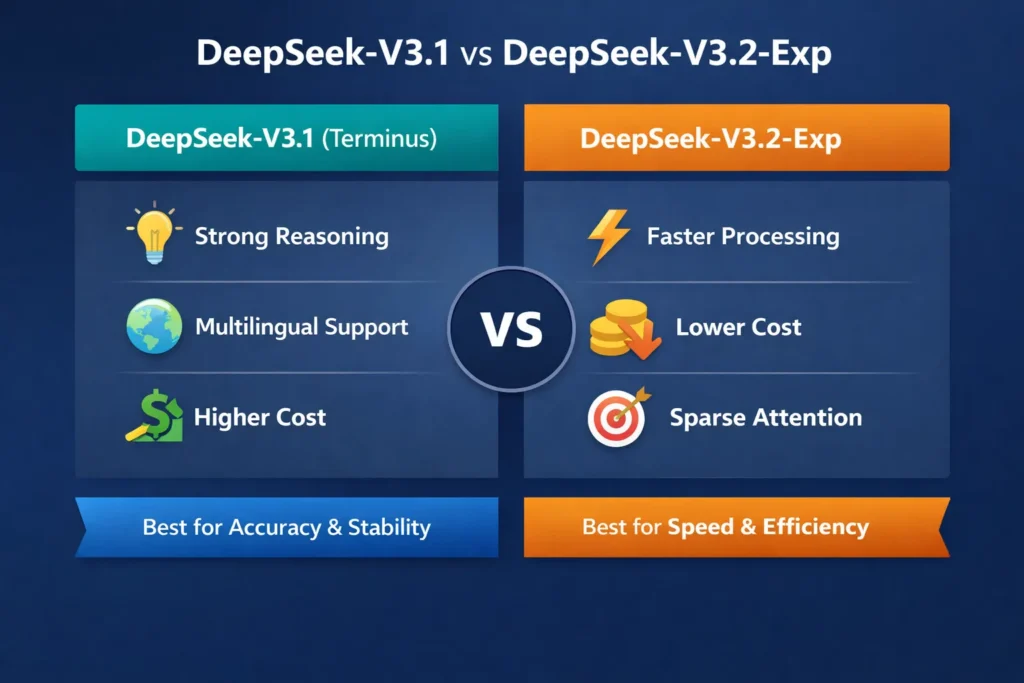

DeepSeek‑V3.1 is recognized for its stability and deterministic reasoning, making it suitable for high-stakes workflows. Conversely, DeepSeek‑V3.2‑Exp emphasizes enhanced efficiency, optimized long-context handling, and reduced compute costs. Understanding the interplay between these dimensions is crucial when selecting a model tailored to your needs.

What Is DeepSeek‑V3.1?

Released in mid-2025, DeepSeek‑V3.1, codenamed “Terminus,” was designed as a robust, high-fidelity LLM optimized for multi-step reasoning, complex workflow orchestration, and precise outputs.

Key Capabilities

- Hybrid Reasoning Modes – Enables Dynamic switching between exploratory reasoning (“think mode”) and direct output generation (“answer mode”) for nuanced problem solving.

- Multilingual supports cross-lingual understanding with high semantic accuracy across major languages.

- Enhanced Agent Integration – Capable of executing multi-step pipelines, suitable for automated research assistants and coding workflows.

Limitations

- Higher Resource Cost – Dense attention layers require more GPU memory and compute cycles, impacting scalability.

- Dense Attention Bottleneck – Long-context sequences can slow down inference due to full-token attention computation.

Typical Use Cases

- Multi-step reasoning tasks where output precision is critical

- Academic research, advanced code generation, and automated workflow orchestration

- Applications demanding consistent outputs for enterprise-grade AI systems

What Is DeepSeek‑V3.2‑Exp?

Launched on September 29, 2025, DeepSeek‑V3.2‑Exp represents an experimental model iteration, prioritizing efficiency and cost-effectiveness while sustaining high reasoning fidelity.

Core Innovation: DeepSeek Sparse Attention (DSA)

- Selective Token Focus – DSA reduces compute overhead by attending only to relevant tokens, rather than the entire input Sequence.

- Optimized Memory Utilization – Uses ~30–40% less memory than dense architectures, allowing long-context tasks with fewer resources.

Benefits

- 2–3× faster long-context inference for documents up to 128K tokens

- ~50% lower API cost compared to V3.1

- Maintains comparable semantic accuracy for reasoning, coding, and centric tasks

Drawbacks

- Experimental Stability – Edge cases may occasionally yield unexpected output or require fallback strategies.

- Dense Attention Required for Certain Workflows – Some complex sequences may benefit from the reliability of V3.1’s dense attention layers.

Real-World Use Cases

- Large-scale document summarization and knowledge extraction

- Long-context multi-document reasoning workflows

- Cost-sensitive AI applications handling massive datasets

Feature Comparison Table: DeepSeek‑V3.1 vs V3.2‑Exp

| Feature | DeepSeek‑V3.1 (Terminus) | DeepSeek‑V3.2‑Exp |

| Release Date | Aug 2025 | Sep 2025 |

| Core Focus | Stability & Multi-Step Reasoning | Efficiency & Cost-Reduction |

| Attention Type | Dense | Sparse (DSA) |

| Max Context Window | 128K tokens | 128K tokens |

| Long-Context Speed | Baseline | 2–3× faster |

| API Cost | Higher | ~50% lower |

| Output Quality | Strong & Deterministic | Comparable |

| Optimal Use Cases | Complex Workflows & High-Stakes | Long Documents & Large Datasets |

Insight: While V3.2‑Exp excels in efficiency and cost-effectiveness, V3.1 remains a benchmark for stable reasoning in critical pipelines.

Performance Metrics & Benchmark Analysis

MMLU‑Pro Benchmark

Both DeepSeek models achieve ~85% accuracy, indicating that V3.2‑Exp maintains reasoning integrity despite optimized computation. This demonstrates that sparse attention does not compromise semantic comprehension.

Coding & Multi-Step Tasks

- V3.2‑Exp exhibits minor efficiency gains for tokenized code sequences and workflow reasoning.

- Both models excel in debugging, code generation, and multi-turn reasoning, Essential for automated development assistants.

Inference Cost Analysis

| Model | Estimated API Cost per 1K tokens |

| DeepSeek‑V3.1 | $0.12 |

| DeepSeek‑V3.2‑Exp | $0.06 |

Implication: Large-scale tasks exceeding 100K tokens are significantly more cost-efficient with V3.2‑Exp, without sacrificing semantic accuracy.

Memory Utilization

- V3.2‑Exp reduces memory consumption by ~30–40%, enabling resource-constrained environments to process long sequences efficiently.

- Useful for cloud-based pipelines where GPU memory is a limiting factor.

Note: Metrics are corroborated by DeepSeek official documentation and independent benchmarks.

Pros & Cons

Pros

- High reasoning stability, suitable for production-grade

- Excellent for multi-step workflows and chained reasoning

- Proven reliability with extensive developer adoption

Cons

- Higher cost for large-scale inference

- Dense attention architecture limits long-context scalability

Pros

- 2–3× faster long-context processing

- ~50% lower API cost for high-volume token sequences

- Sparse attention reduces GPU memory overhead

Cons

- Experimental model; occasional instability possible

- Complex edge-case tasks may require dense attention fallback

When to Choose Which Model

Choose DeepSeek‑V3.1 if:

- Stability, deterministic output, and multi-step reasoning are priorities

- Workflow demands high reasoning fidelity

- Running critical pipelines where cost is secondary

Choose DeepSeek‑V3.2‑Exp if:

- You require fast processing for long documents or datasets

- Cost efficiency is a top Consideration

- You are exploring long-context experimental workflows

CTA: For enterprise deployments, consider hybrid strategies, using V3.2‑Exp for bulk data processing and V3.1 for high-stakes reasoning.

Pricing Overview (API Costs)

| Model | Estimated API Cost per 1K tokens |

| DeepSeek‑V3.1 | $0.12 |

| DeepSeek‑V3.2‑Exp | $0.06 |

Takeaway: V3.2‑Exp enables large-scale workloads to be cost-effective, particularly for document summarization, long-context reasoning, and batch processing.

FAQs

A: Not entirely. While faster and cheaper, V3.2‑Exp is experimental. For critical production workflows, V3.1 remains safer.

A: Benchmarks show 2–3× faster inference for sequences up to 128K tokens.

A: V3.2‑Exp maintains full API compatibility with V3.1, simplifying migration.

A: A selective token attention mechanism that prioritizes relevant context, reducing compute while improving efficiency.

A: Both perform comparably. V3.2‑Exp offers slight efficiency gains due to optimized token processing.

Real-World Applications

- Knowledge Extraction: Parsing and summarizing multi-document corpora efficiently

- Automated Coding Assistants: Multi-turn code generation and debugging

- Scientific Research: High-volume document analysis without excessive compute costs

- Enterprise AI Workflows: Balancing stability and speed for real-time decision-making

- Chatbots & Virtual Agents: Long-context dialogue management with cost efficiency

Conclusion

In 2026, the DeepSeek model suite offers specialized LLM choices for diverse needs:

- DeepSeek‑V3.1 (Terminus) excels in stability, deterministic reasoning, and multi-step workflows, making it a reliable backbone for critical Applications.

- DeepSeek‑V3.2‑Exp provides enhanced efficiency, sparse attention, and cost-effective long-context handling, making it ideal for large-scale experimental or high-volume AI deployments.

For organizations seeking optimized workflows, a hybrid deployment strategy leveraging both models may maximize cost-efficiency, reliability, and long-context reasoning power.